Readiness Audit

Quick Answer: Audit workflow repeatability, data access, ownership, and risk constraints before selecting tools.

Think of readiness like surveying land before building. If the ground is unstable, construction speed only makes failure happen faster. Teams should shortlist workflows with clear inputs, measurable outputs, and low initial regulatory risk. This keeps the first pilot focused and decision-ready.

Use this phase to align leadership, operations, and compliance on one common pilot objective. The most common implementation failure we see is unclear ownership, not weak model capability.

Data Preparation

Quick Answer: Data prep should define what data is usable, what is restricted, and how outputs are logged for auditability.

Think of data prep like mise en place in a professional kitchen. If ingredients are not sorted and labeled before cooking, quality collapses under pressure. AI workflows behave the same way. Data boundaries should be explicit before pilots start.

The practical rule is to classify data by sensitivity and map each class to approved usage patterns. This classification feeds directly into AI Risks & Legal Compliance for Businesses, so treat it as a mandatory step, not an optional side task.
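The classification rule above can be sketched as a small lookup table. This is a minimal illustration, not a recommended policy: the sensitivity tiers, the usage pattern names (`external_api`, `internal_tool`, `human_review_only`), and the `is_allowed` helper are all hypothetical placeholders to be replaced with whatever your compliance team approves.

```python
from enum import Enum


class Sensitivity(Enum):
    # Hypothetical tiers; substitute your organization's own classes.
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"
    RESTRICTED = "restricted"


# Assumed mapping from each data class to the usage patterns approved for it.
APPROVED_USAGE = {
    Sensitivity.PUBLIC: {"external_api", "internal_tool", "human_review_only"},
    Sensitivity.INTERNAL: {"internal_tool", "human_review_only"},
    Sensitivity.CONFIDENTIAL: {"human_review_only"},
    Sensitivity.RESTRICTED: set(),  # no AI usage approved
}


def is_allowed(sensitivity, usage):
    """Return True only if the usage pattern is approved for this data class."""
    return usage in APPROVED_USAGE.get(sensitivity, set())
```

Keeping the mapping in one place means a pilot can check a request such as `is_allowed(Sensitivity.INTERNAL, "external_api")` before any data leaves its boundary, and the same table doubles as documentation for compliance review.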

Vendor Selection

Quick Answer: Select vendors by workflow fit, security controls, and integration depth, then compare pricing only after technical fit is confirmed.

Think of vendor selection like hiring a specialist contractor. The right partner is the one that matches your exact job scope and safety standards, not the one with the biggest ad budget. Build a short scorecard with security, workflow compatibility, and governance features. Then test with real pilot tasks before signing long commitments.
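One way to make the scorecard concrete is a weighted fit score that gates the price comparison. The sketch below assumes 1-to-5 ratings, illustrative weights, and a hypothetical qualification threshold (`MIN_FIT_SCORE`); none of these numbers come from a standard, so tune them to your own priorities.

```python
from dataclasses import dataclass

# Assumed weights for the three scorecard criteria; they sum to 1.0.
WEIGHTS = {"security": 0.40, "workflow_fit": 0.35, "governance": 0.25}
MIN_FIT_SCORE = 3.5  # hypothetical cut-off on a 1-5 scale


@dataclass
class Vendor:
    name: str
    scores: dict          # criterion name -> rating from 1 to 5
    annual_price: float   # only consulted after technical fit is confirmed


def fit_score(vendor):
    """Weighted average of the vendor's ratings across all criteria."""
    return sum(WEIGHTS[k] * vendor.scores[k] for k in WEIGHTS)


def shortlist(vendors):
    """Drop vendors below the fit threshold, then order the rest by price."""
    qualified = [v for v in vendors if fit_score(v) >= MIN_FIT_SCORE]
    return sorted(qualified, key=lambda v: v.annual_price)
```

The design choice here mirrors the sequencing in this section: price never influences whether a vendor qualifies, it only orders the vendors that already passed the technical bar.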

For cost decisions, connect this phase directly to AI ROI Calculator & Business Case Guide so procurement is tied to measured value assumptions.

Pilot Phase

Quick Answer: A strong pilot tests one workflow end-to-end with explicit success metrics, review gates, and exception handling.

Think of the pilot as a dress rehearsal before opening night. You are not testing only model output; you are testing how people, approvals, and tools perform together under real conditions. Most teams should run pilots for 30 to 60 days. That timeline is long enough to expose process issues and short enough to preserve momentum.

An underused tactic is a weekly exception review. It surfaces friction points faster than monthly reporting and helps teams fix process design before scale.
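A weekly exception review needs little tooling. As a rough sketch, assuming exceptions are logged as `(date, reason)` pairs (a hypothetical format, as are the reason labels in the usage note), they can be grouped by ISO week so recurring friction points stand out:

```python
from collections import Counter
from datetime import date


def weekly_exception_counts(exceptions):
    """Count exceptions per (ISO year, ISO week, reason).

    `exceptions` is an iterable of (date, reason) tuples.
    """
    counts = Counter()
    for day, reason in exceptions:
        iso = day.isocalendar()  # (ISO year, ISO week number, weekday)
        counts[(iso[0], iso[1], reason)] += 1
    return counts


def top_reasons(exceptions, iso_year, iso_week, limit=3):
    """Return the most frequent exception reasons for a single week."""
    weekly = Counter()
    for (year, week, reason), n in weekly_exception_counts(exceptions).items():
        if (year, week) == (iso_year, iso_week):
            weekly[reason] += n
    return weekly.most_common(limit)
```

For example, feeding in a pilot log and calling `top_reasons(log, 2026, 2)` gives the review meeting a short ranked list of what went wrong that week, instead of a raw dump of incidents.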

Scaling Phase

Quick Answer: Scale AI by replicating proven workflow patterns, not by launching many new use cases at once.

Think of scaling like opening a second store location. You do not reinvent operations from scratch; you copy the proven operating model and adapt local details. For AI, that means reusing prompt standards, approval logic, and measurement rules. Scaling should feel like controlled replication, not chaotic expansion.

Use cross-functional pages like AI for Marketing Teams and AI for Operations & Automation to sequence expansion based on business priority.

Governance

Quick Answer: Governance sets non-negotiable boundaries for data use, approval ownership, monitoring cadence, and incident response.

Think of governance like guardrails on a mountain road. They do not slow progress; they prevent catastrophic failure when conditions change. In practice, governance covers model monitoring, approval ownership, and escalation rules for incorrect or non-compliant outputs. Without governance, scale creates exposure faster than value.

Reference frameworks such as NIST AI RMF are useful baselines for this phase.

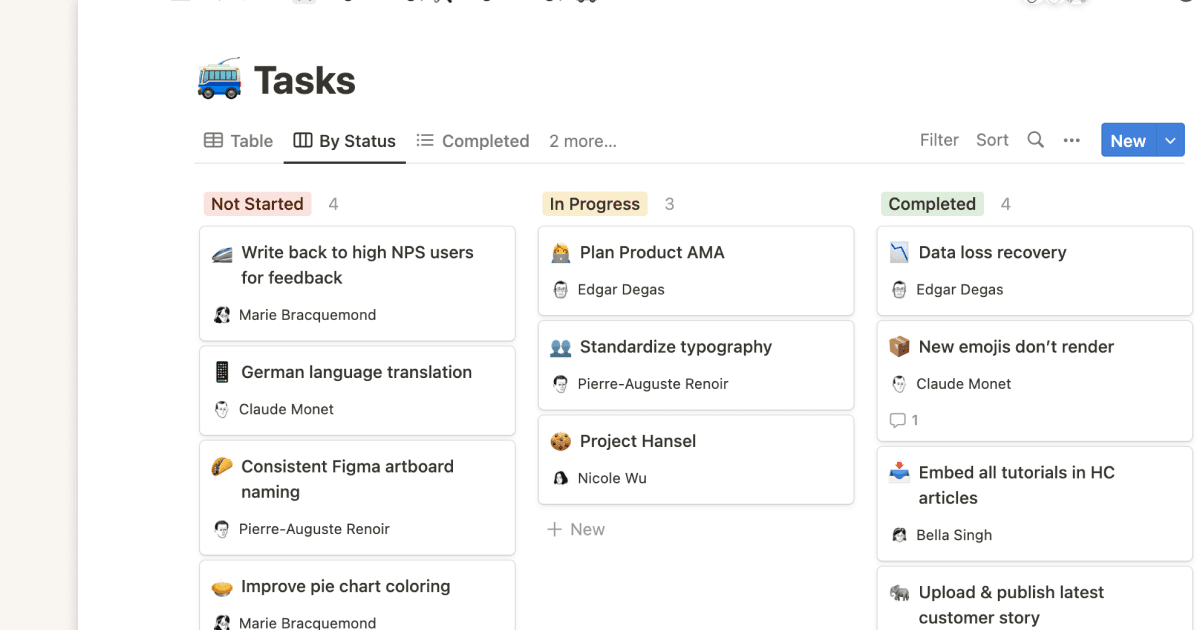

5-Step Framework Diagram

Quick Answer: The roadmap runs in sequence: Readiness -> Data -> Vendor -> Pilot -> Scale + Governance.

Diagram (text version): 1) Readiness Audit -> 2) Data Preparation -> 3) Vendor Selection -> 4) Pilot Validation -> 5) Controlled Scale with Governance.

Readiness Checklist

Quick Answer: Use this checklist to confirm the organization is deployment-ready before pilot launch.

- Executive sponsor and workflow owner are named

- Data boundaries and restricted inputs are documented

- Pilot success metrics and review cadence are approved

- Exception and escalation pathways are operational

- Legal/compliance sign-off criteria are clear
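The checklist above can also be run as a simple go/no-go gate. This is a minimal sketch: the `readiness_gaps` and `ready_for_pilot` helpers are hypothetical names, and the status dictionary is assumed to be filled in by whoever owns the pilot.

```python
# Checklist items copied from the list above.
CHECKLIST = [
    "Executive sponsor and workflow owner are named",
    "Data boundaries and restricted inputs are documented",
    "Pilot success metrics and review cadence are approved",
    "Exception and escalation pathways are operational",
    "Legal/compliance sign-off criteria are clear",
]


def readiness_gaps(status):
    """Return checklist items that are missing or not marked True."""
    return [item for item in CHECKLIST if not status.get(item, False)]


def ready_for_pilot(status):
    """The pilot may launch only when every checklist item is confirmed."""
    return not readiness_gaps(status)
```

Treating any unanswered item as a gap is deliberate: an item nobody has confirmed is handled the same way as one that failed.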

aicourses.com Verdict: Roadmap Discipline Beats Tool Hype

Quick Answer: In our experience, companies that follow a staged roadmap tend to outperform teams that jump straight from demo to broad rollout.

Implementation success in 2026 is mostly about operational sequencing, not model novelty. Start small, validate quality, scale proven patterns, and keep governance active throughout the lifecycle. This is how AI becomes infrastructure instead of recurring experiments.

Bridge: now move to AI ROI Calculator & Business Case Guide and AI Risks & Legal Compliance for Businesses to complete your rollout foundation.