Most teams already use AI tools. Far fewer teams have a repeatable playbook that reliably improves outcomes. That gap is where productivity wins are lost.

This guide turns AI usage into an operating system for engineering teams, with concrete metrics, workflow design, and governance controls.

Treat each section as a management and engineering checklist you can apply directly in planning, code review, and retrospectives. The objective is to replace ad-hoc usage with team habits that survive hiring changes, shifting deadlines, and tool upgrades.

The playbook approach also helps leadership make better decisions about investment and risk. When metrics, review standards, and adoption phases are explicit, teams can scale AI confidently instead of bouncing between hype and rollback cycles.

Use the content as a living document. Teams should adapt thresholds, examples, and review templates to their own architecture and release cadence, then revisit the playbook monthly so it reflects current reality instead of frozen assumptions.

The immediate win is alignment: engineers, managers, and security stakeholders discuss the same metrics and process checkpoints instead of speaking different operational languages.

That shared language reduces execution friction in planning meetings, review queues, and incident retrospectives, which is exactly where productivity programs usually stall.

How to Measure AI Productivity Impact Without Fooling Yourself

Quick Answer: Measure AI productivity with a balanced scorecard that includes delivery speed, quality, and developer experience to avoid misleading gains.

Productivity measurement is where many AI rollouts quietly fail. It is like evaluating a diet only by weight loss while ignoring strength and sleep quality. Teams that track just output volume often ship more code but inherit more defects and review debt.

A better framework combines DORA metrics, defect escape rate, and subjective developer friction signals. The point is not to produce one perfect number. The point is to see tradeoffs early enough to adjust workflows before bad habits become default practice.

Leadership teams should publish metric intent alongside targets. If engineers know a metric is used for process improvement rather than individual ranking, data quality improves and gaming behavior drops. This cultural detail is often the difference between helpful dashboards and compliance theater.

| Metric Family | Why It Matters | Weekly Decision Prompt |
|---|---|---|
| Delivery speed | Shows whether cycle time is improving | Are we shipping faster without additional rollback events? |
| Quality | Prevents speed-only optimization | Did AI-assisted changes increase post-release defect volume? |
| Team experience | Captures cognitive load and review fatigue | Are engineers spending less time on low-value repetitive work? |
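One way to make the weekly decision prompts concrete is a small comparison of this week's scorecard against last week's. The sketch below is illustrative only: the `WeeklyScorecard` fields, the `review_flags` helper, and the idea of measuring team experience as self-reported hours of repetitive work are all assumptions, not part of any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WeeklyScorecard:
    """One week of balanced-scorecard data (all field names are hypothetical)."""
    median_cycle_time_hours: float  # delivery speed, lower is better
    rollback_events: int            # delivery safety, lower is better
    post_release_defects: int       # quality, lower is better
    repetitive_work_hours: float    # survey-reported hours per engineer, lower is better


def review_flags(previous: WeeklyScorecard, current: WeeklyScorecard) -> list[str]:
    """Return discussion flags that mirror the weekly decision prompts."""
    flags = []
    faster = current.median_cycle_time_hours < previous.median_cycle_time_hours
    # Speed gains only count if rollbacks did not rise alongside them.
    if faster and current.rollback_events > previous.rollback_events:
        flags.append("Faster delivery came with more rollbacks")
    if current.post_release_defects > previous.post_release_defects:
        flags.append("Post-release defects increased")
    if current.repetitive_work_hours > previous.repetitive_work_hours:
        flags.append("Engineers report more repetitive work")
    return flags


last_week = WeeklyScorecard(30.0, 1, 4, 3.2)
this_week = WeeklyScorecard(24.0, 3, 4, 3.0)
for flag in review_flags(last_week, this_week):
    print(flag)
```

The function returns flags for discussion rather than a single score, which matches the goal of surfacing tradeoffs instead of producing one number.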

To connect this workflow with your existing stack, pair the rollout with the pillar guide and the related guides on AI debugging workflows, AI code review controls, model behavior mechanics, and prompt templates for developers. That internal map keeps teams from treating each article as an isolated tactic.

The AI-Assisted Day-in-the-Life Playbook

Quick Answer: A strong AI day-in-the-life flow maps each phase of work from planning to merge, with explicit review checkpoints and ownership.

Teams get better outcomes when AI usage is procedural, not ad hoc. Think of it like a race pit crew: each step is timed, owned, and repeatable. Developers should know exactly when AI is allowed to draft, when humans must review, and which checks are mandatory before merge.

A practical flow is: planning prompt, implementation draft, test generation, self-review, peer review, and merge checklist. Each stage has a different tolerance for AI autonomy. Early drafting can be high-autonomy; merge gating should remain strict human ownership with automated checks.

- Planning: ask AI to summarize constraints and propose implementation options.

- Build: generate draft code with explicit acceptance criteria.

- Verify: generate tests and run static analysis.

- Review: compare AI rationale to code diff and architecture standards.

- Merge: enforce policy checks and track outcome metrics.

Technical gotcha: teams often skip the rationale step and review only the diff. That increases subtle architecture drift. The fix is to require a short 'decision rationale' note for every AI-assisted pull request.
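The rationale requirement can be enforced mechanically before a human ever opens the diff. The following is a minimal sketch under several assumptions: pull request descriptions are written in Markdown, AI-assisted work carries a label named `ai-assisted`, and the rationale lives under a heading called "Decision Rationale". The label name, the heading text, the ten-word minimum, and the `rationale_missing` helper are all hypothetical choices for illustration.

```python
import re

AI_LABEL = "ai-assisted"  # hypothetical label name; use whatever your template defines

# Matches a Markdown heading such as "## Decision Rationale" on its own line.
_RATIONALE_HEADING = re.compile(
    r"^#+[ \t]*decision rationale[ \t]*$", re.IGNORECASE | re.MULTILINE
)
# Matches the start of any following Markdown heading.
_NEXT_HEADING = re.compile(r"^#+[ \t]", re.MULTILINE)


def rationale_missing(labels: list[str], body: str, min_words: int = 10) -> bool:
    """Return True when an AI-assisted pull request lacks a usable rationale note."""
    if AI_LABEL not in labels:
        return False  # the rule only applies to AI-assisted pull requests
    match = _RATIONALE_HEADING.search(body)
    if match is None:
        return True
    rest = body[match.end():]
    next_heading = _NEXT_HEADING.search(rest)
    section = rest[: next_heading.start()] if next_heading else rest
    # A heading with almost no text under it does not count as a rationale.
    return len(section.split()) < min_words
```

A check like this only confirms that a note exists and has some substance. Comparing the rationale to the diff and to architecture standards remains a reviewer's job.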

KPI Instrumentation: Cycle Time, Defect Rate, Merge Time, Coverage

Quick Answer: Instrumenting key performance indicators is the difference between anecdotal AI success and a credible team productivity program.

KPIs (key performance indicators) should guide coaching, not punish teams. This is similar to strength training metrics: if numbers are used only for judgment, people game the system; if used for feedback, performance improves. AI adoption programs need the same mindset.

Track a compact set first: cycle time, time to merge, escaped defect rate, and automated test coverage on changed files. Add one leading indicator specific to AI usage, such as percentage of pull requests with AI-generated tests accepted after review. That gives visibility into whether AI is helping verification, not just code volume.
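As a rough illustration of how this compact set might be computed, the sketch below works from a list of merged pull request records. The `PullRequest` fields and helper names are assumptions for this example; in practice the data would come from your repository analytics. Test coverage on changed files is left out because it depends on the coverage tool in use, and the acceptance rate here uses all pull requests in the window as the denominator, which is one possible reading of that indicator.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class PullRequest:
    """Minimal merged pull request record (field names are hypothetical)."""
    opened_at: datetime
    merged_at: datetime
    ai_tests_accepted: bool  # AI-generated tests survived review and were merged


def median_time_to_merge_hours(prs: list[PullRequest]) -> Optional[float]:
    """Median hours from open to merge, or None when there is no data."""
    if not prs:
        return None
    hours = [(pr.merged_at - pr.opened_at).total_seconds() / 3600 for pr in prs]
    return median(hours)


def ai_test_acceptance_rate(prs: list[PullRequest]) -> Optional[float]:
    """Share of pull requests whose AI-generated tests were accepted after review."""
    if not prs:
        return None
    return sum(pr.ai_tests_accepted for pr in prs) / len(prs)


def escaped_defects_per_release(escaped_defects: int, releases: int) -> Optional[float]:
    """Defects found after release, normalized by release count."""
    if releases == 0:
        return None
    return escaped_defects / releases
```

Returning `None` for empty windows keeps a quiet week from showing up as a misleading zero on a dashboard.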

Keep metric ownership close to the work. One engineering manager and one senior individual contributor should co-own interpretation so action plans remain practical. Without paired ownership, teams often collect KPI snapshots but postpone corrective changes.

| Technical Requirement | Potential Risk | Learner's First Step |
|---|---|---|
| Consistent event tagging in repository analytics | Cannot separate AI-assisted and non-assisted work | Add optional AI-assist label in pull request templates |
| Shared KPI definitions | Teams optimize different interpretations | Publish a metric glossary in engineering docs |
| Weekly review cadence | Metrics are collected but ignored | Add KPI review to sprint retrospectives |

Worked example: one 26-engineer team introduced AI-assisted testing templates and measured results across two quarters. Median time to merge fell from 27 hours to 18 hours, a one-third reduction, while escaped defects per release held flat. The key driver was not raw code generation; it was better pre-merge test completeness.

Adoption Phases: Pilot, Rollout, and Governance

Quick Answer: Adoption should move in phases with explicit graduation criteria, so teams expand AI usage only after quality and reliability thresholds are met.

AI adoption maturity behaves like rolling out an internal platform. You start with a pilot, standardize patterns during rollout, and only then lock governance controls. If teams skip phase discipline, early wins are followed by inconsistency and audit pain.

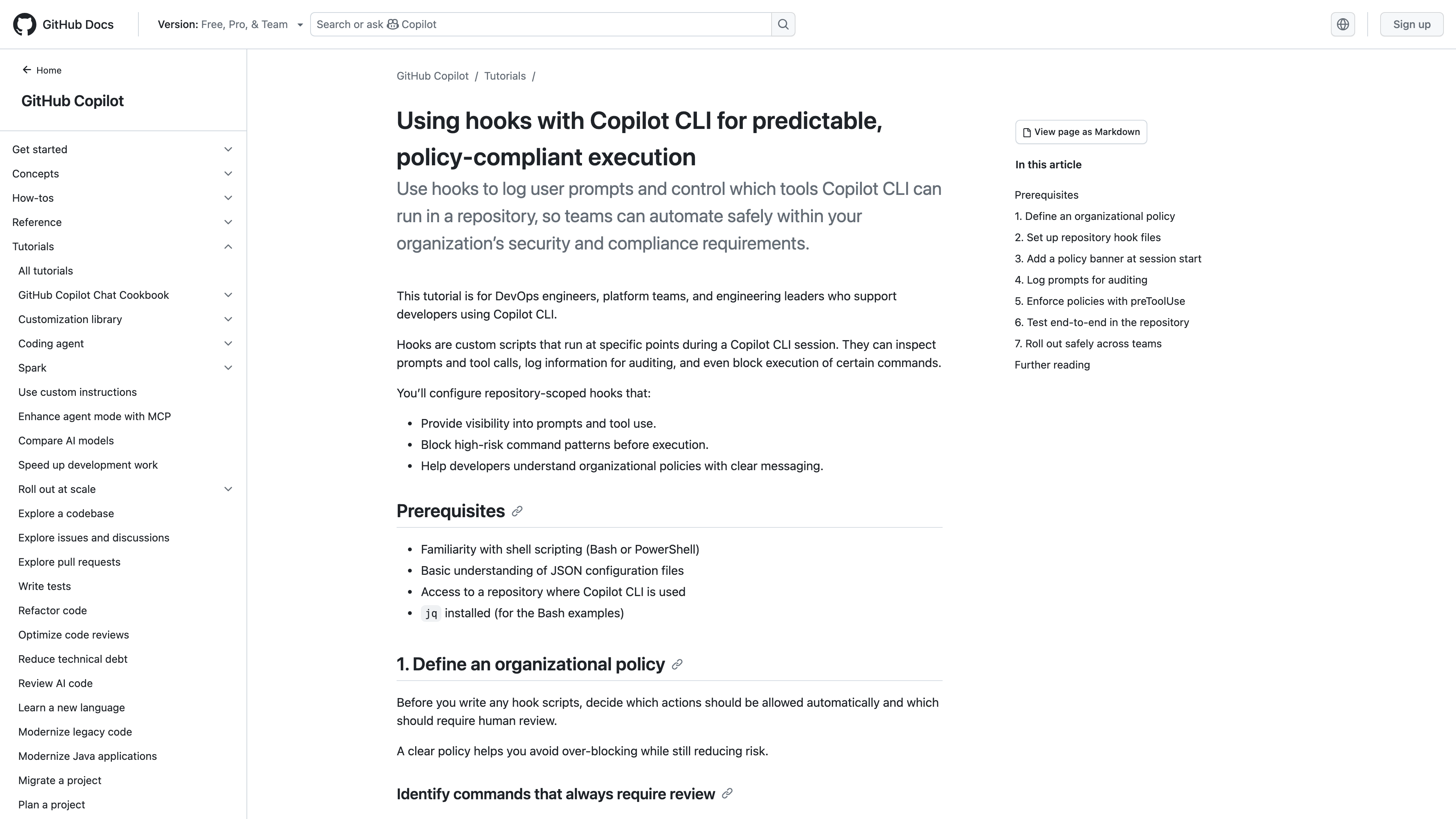

The pilot phase should answer one question: does AI improve a measurable engineering bottleneck? The rollout phase should answer a different one: can we reproduce those gains across teams without quality regression? The governance phase then ensures that controls, logging, and policy reviews are durable.

- Pilot exit rule: at least one KPI improves by 15 percent without reliability regression.

- Rollout exit rule: three teams use the same playbook with similar outcomes.

- Governance exit rule: audit trail, ownership model, and security controls verified.
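The pilot exit rule is simple enough to write down as a check, which removes ambiguity about what "improves by 15 percent" means. This sketch assumes every KPI is lower-is-better (hours, defect counts) and that reliability is tracked as a single count, such as escaped defects per release. The function names and the dictionary layout are hypothetical.

```python
def relative_improvement(baseline: float, current: float) -> float:
    """Fractional improvement for a lower-is-better metric (0.15 means 15 percent)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) / baseline


def pilot_can_exit(
    kpis: dict[str, tuple[float, float]],
    reliability_baseline: float,
    reliability_current: float,
    threshold: float = 0.15,
) -> bool:
    """Apply the pilot exit rule: one KPI improves by the threshold, reliability holds.

    `kpis` maps a KPI name to a (baseline, current) pair.
    """
    improved = any(
        relative_improvement(baseline, current) >= threshold
        for baseline, current in kpis.values()
    )
    no_regression = reliability_current <= reliability_baseline
    return improved and no_regression


# Time-to-merge numbers from the worked example: 27 hours down to 18 hours.
print(pilot_can_exit({"time_to_merge_hours": (27, 18)}, 4, 4))
```

For metrics where higher is better, such as test coverage, the sign of the comparison would need to be flipped before applying the same rule.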

What should you do this week? Write phase exit criteria before expanding to a new team. That one page prevents endless pilots and unclear ownership.

Documentation Standards and Collaboration Patterns

Quick Answer: AI productivity scales when documentation is explicit, prompts are versioned, and collaboration rituals include AI-specific review checkpoints.

Documentation is the hidden engine of AI productivity. It works like mise en place in professional kitchens: when ingredients are prepared and labeled, execution is faster and cleaner. In engineering, that means architecture notes, coding standards, and prompt patterns are discoverable and current.

Collaboration patterns also change. Teams should add AI-focused checkpoints in pull request reviews, such as verifying that generated code follows domain invariants and that generated tests cover failure paths. This turns review into quality amplification rather than style policing.

| Collaboration Pattern | Outcome | Failure If Missing |
|---|---|---|
| Versioned prompt library | Reusable high-quality outputs | Each engineer reinvents prompts |
| AI rationale in pull request template | Faster reviewer understanding | Reviewers cannot evaluate decision context |
| Monthly playbook updates | Continuous improvement | Stale guidance despite tooling changes |
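A versioned prompt library does not need special tooling to get started. The sketch below shows one possible shape: prompts stored with an explicit version and looked up by name. The `PromptTemplate` and `PromptLibrary` names, the `(major, minor)` version tuple, and the example prompt text are all assumptions for illustration; many teams would keep the same data as files in a repository instead.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    """A named prompt with an explicit version (structure is hypothetical)."""
    name: str
    version: tuple[int, int]  # (major, minor)
    body: str                 # uses $placeholders from string.Template

    def render(self, **fields: str) -> str:
        # substitute() raises KeyError if a placeholder is left unfilled.
        return Template(self.body).substitute(**fields)


class PromptLibrary:
    """In-memory registry that never overwrites a published version."""

    def __init__(self) -> None:
        self._prompts: dict[tuple[str, tuple[int, int]], PromptTemplate] = {}

    def register(self, prompt: PromptTemplate) -> None:
        key = (prompt.name, prompt.version)
        if key in self._prompts:
            raise ValueError(f"{prompt.name} {prompt.version} is already published")
        self._prompts[key] = prompt

    def latest(self, name: str) -> PromptTemplate:
        candidates = [p for (n, _), p in self._prompts.items() if n == name]
        if not candidates:
            raise KeyError(name)
        return max(candidates, key=lambda p: p.version)


library = PromptLibrary()
library.register(PromptTemplate("test-gen", (1, 0), "Write tests for $function."))
library.register(
    PromptTemplate("test-gen", (1, 1), "Write tests for $function covering $failure_path.")
)
print(library.latest("test-gen").render(function="parse_config", failure_path="missing keys"))
```

Refusing to overwrite a published version is a deliberate choice here: when a prompt changes, reviewers can tell which version produced a given output.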

A simple weekly ritual works: spend 20 minutes in team sync reviewing one successful and one failed AI-assisted change. Capture lessons immediately in the playbook.

Change Management and Long-Term Team Performance

Quick Answer: Long-term AI productivity depends on change management: training paths, transparent expectations, and guardrails that preserve engineering standards.

Change management is often dismissed as soft work, but it determines whether technical improvements last. It is similar to introducing a new programming language in a legacy codebase: tooling alone does not create adoption, habits do. Teams need clear expectations about where AI should help and where human judgment remains non-negotiable.

Start with role-based enablement: junior engineers focus on guided prompt templates and review discipline, while senior engineers focus on architecture-level validation and governance. Pair that with transparent communication about metric goals and acceptable risk boundaries. When people know what success looks like, resistance drops and quality rises.

The practical outcome is steadier performance rather than short-term spikes. Teams that invest in training and governance usually see fewer reversal cycles, because improvements are integrated into daily practice instead of driven by one-off enthusiasm.

A simple governance ritual is a monthly AI playbook review with representatives from engineering, security, and product. Review one high-impact success and one costly miss, then update standards immediately. This keeps the playbook alive and prevents policy drift as tools evolve.

aicourses.com Verdict

Quick Answer: These workflows produce the best outcomes when teams treat AI as a reliability and delivery multiplier, not a replacement for engineering judgment.

AI productivity is not a tool problem anymore. It is an execution problem. Teams that define playbooks, metrics, and ownership structures consistently outperform teams that rely on individual heroics.

Start with one measurable workflow and one documented review loop. Expand only after quality and reliability metrics confirm the gains are real.

For the operational side of this system, continue with AI DevOps and SRE Automation for Developers.

FAQ

Quick Answer: These are the questions teams typically ask when they move from experiments to production adoption and governance.

What is the first KPI to track for AI productivity?

Cycle time is often the clearest first KPI because teams can measure it immediately and compare trend lines.

How do I avoid vanity metrics?

Pair speed metrics with defect and reliability metrics so gains cannot hide quality regressions.

Should every engineer use the same prompts?

Use shared baselines, then allow personal variants as long as output quality standards are met.

How long should an AI pilot run?

Four to six weeks is usually enough to detect whether improvements are durable.

What if senior engineers resist the playbook?

Involve them in metric design and review standards so the system reflects real engineering constraints.

Can small teams benefit from formal playbooks?

Yes. Smaller teams often gain faster because workflow changes propagate quickly.
