Developers usually reach for AI in the middle of a bug incident, not during calm planning. That is why a fixed debugging workflow matters more than a clever one-off prompt.

This guide gives you a repeatable system for AI-assisted debugging that improves speed while preserving engineering quality.

Real Broken Code Example

Quick Answer: Start AI debugging with a reproducible failure, full error output, and expected behavior, not with a vague request like 'fix this bug.'

Think of debugging prompts like incident reports: no clear symptoms means no clear fix. A complete bug report includes the stack trace, the environment, and a minimal failing test.
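A minimal failing test for a report like this one could look like the following Python sketch. The helper `call_with_retry` and the fake `fetch` are hypothetical stand-ins for your own code, and the sketch assumes the bug is that a timeout escapes the retry loop.

```python
def call_with_retry(fetch, attempts=3):
    """Hypothetical buggy helper: retries on HTTP 429 but not on timeouts."""
    status = None
    for _ in range(attempts):
        status = fetch()  # a TimeoutError raised here escapes the loop
        if status != 429:
            return status
    return status


def make_flaky_fetch():
    """Fake upstream call: times out once, then returns HTTP 200."""
    calls = {"count": 0}

    def fetch():
        calls["count"] += 1
        if calls["count"] == 1:
            raise TimeoutError("upstream timed out")
        return 200

    return fetch, calls


def reproduce_timeout_bug():
    """Expected: one retry, then 200. Actual: TimeoutError on the first call."""
    fetch, calls = make_flaky_fetch()
    assert call_with_retry(fetch) == 200
    assert calls["count"] == 2
```

Calling `reproduce_timeout_bug()` against the buggy helper raises `TimeoutError`, which is the reproducible failure to paste into the prompt. Rename the function with a `test_` prefix if you want a test runner to collect it.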

# Broken behavior

# Expected: retries API call on HTTP 429

# Actual: crashes on first timeout

Traceback (most recent call last):

TimeoutError: upstream timed out

Prompt Structure Template

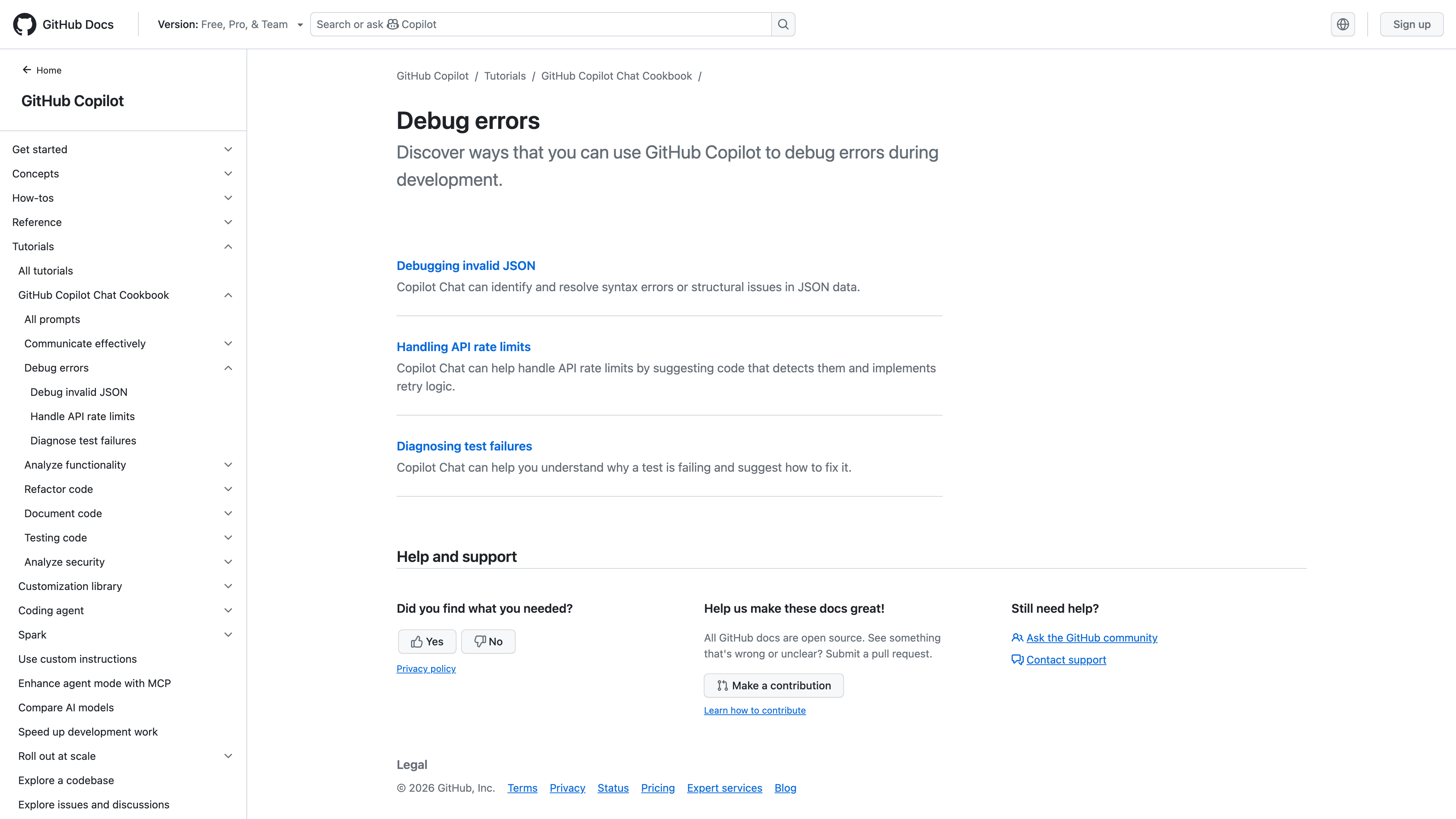

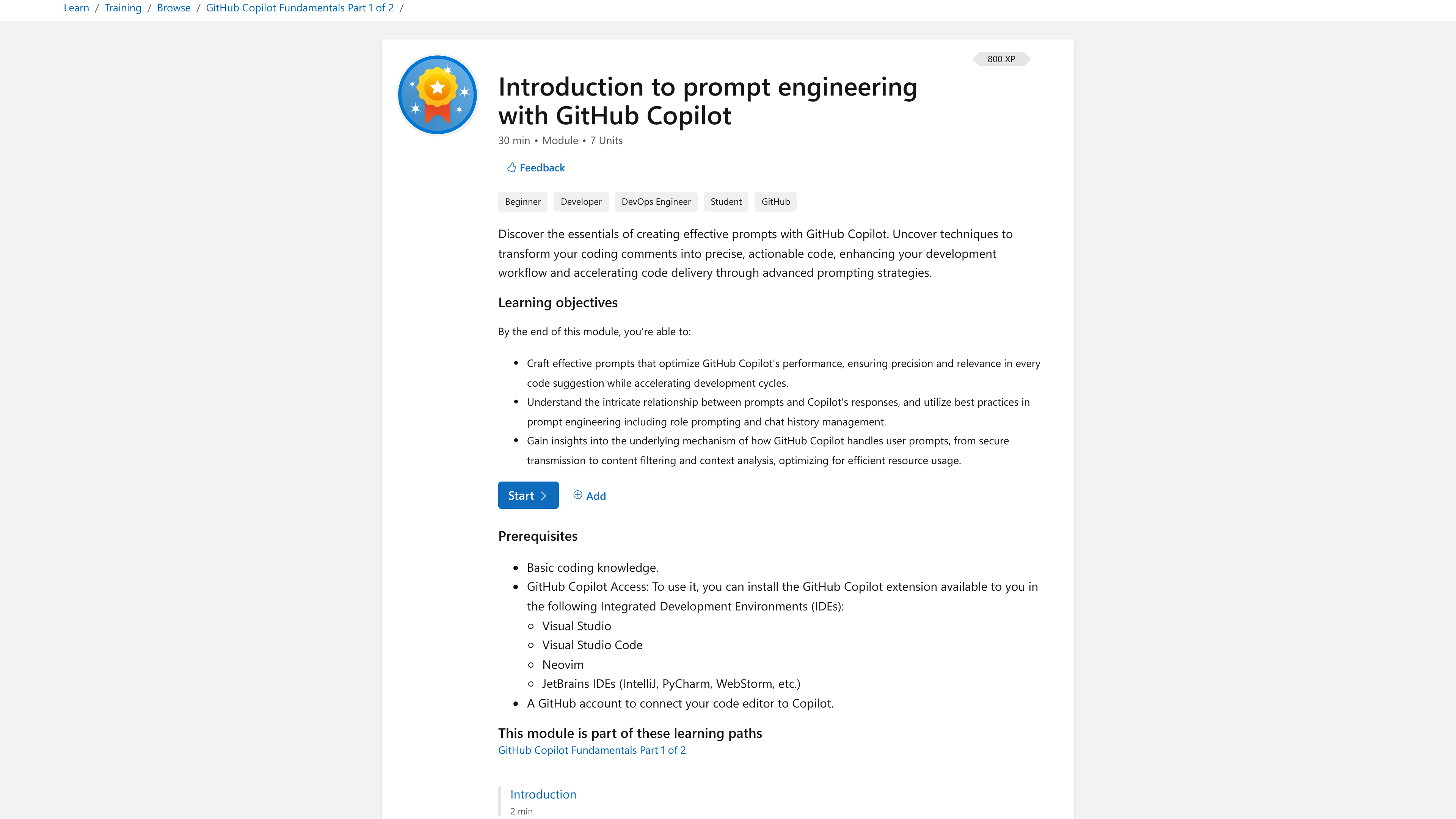

Quick Answer: Use a fixed prompt structure with role, bug context, constraints, and expected output format to reduce hallucinated fixes.

Think of this template as a debugger command sequence: consistent input yields consistent output. We use the same shape for runtime bugs, integration failures, and flaky tests.
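One way to keep that shape fixed across bug types is to render the prompt from a small data structure. This is a minimal Python sketch; the `DebugPrompt` class and its field names are hypothetical, chosen to mirror the template fields in this section.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DebugPrompt:
    """Hypothetical container for the fixed prompt fields."""
    role: str
    context: str
    bug: str
    constraints: List[str]
    output: List[str]

    def render(self) -> str:
        # Always emit the fields in the same order so prompts stay comparable.
        return "\n".join([
            f"Role: {self.role}",
            f"Context: {self.context}",
            f"Bug: {self.bug}",
            f"Constraints: {', '.join(self.constraints)}",
            f"Output: {' + '.join(self.output)}",
        ])


prompt = DebugPrompt(
    role="Senior SRE (site reliability engineer)",
    context="Python service, async requests, timeout under load",
    bug="retries are not executed on timeout",
    constraints=["no API contract changes", "keep logging format"],
    output=["patch", "test cases", "risk notes"],
)
text = prompt.render()
```

Because every field is required, a prompt with a missing constraint list or output format fails at construction time instead of producing a vague request.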

Role: Senior SRE (site reliability engineer)

Context: Python service, async requests, timeout under load

Bug: retries are not executed on timeout

Constraints: no API contract changes, keep logging format

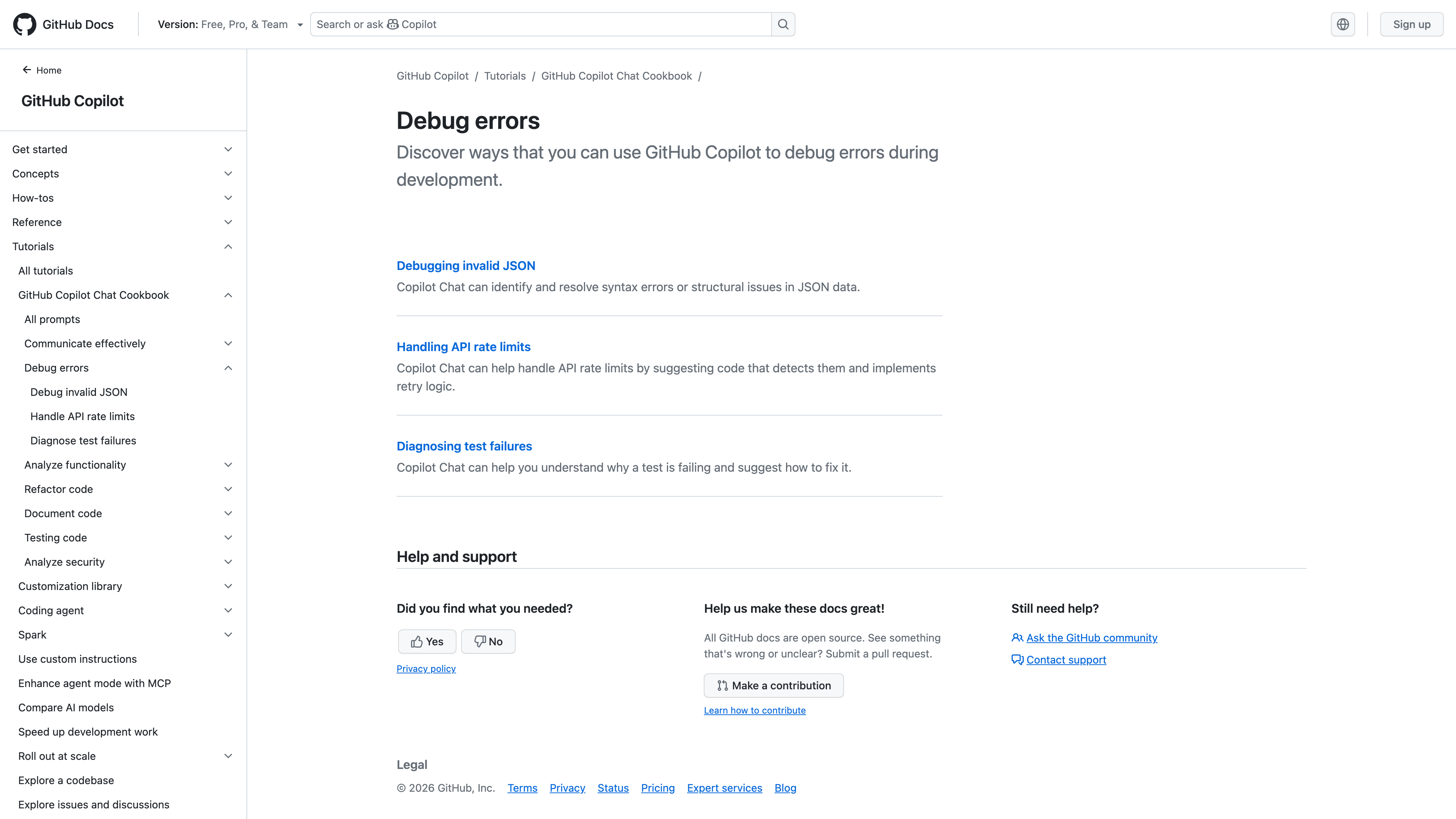

Output: patch + test cases + risk notes

Debugging Workflow

Quick Answer: The practical loop is reproduce, isolate, propose patch, run tests, and document root cause before merge.

Think of workflow discipline like unit test discipline: skipping one step creates expensive surprises later. A reliable loop is: capture a failing test, ask the assistant for a targeted patch, rerun the tests, then request a root-cause summary.
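The loop can be made hard to skip by tracking the steps explicitly. The sketch below is one possible way to do that in Python; `DebugSession` and the step names are hypothetical labels for the five steps listed in this section, not part of any tool.

```python
STEPS = (
    "reproduce",
    "isolate",
    "propose_patch",
    "run_tests",
    "document_root_cause",
)


class DebugSession:
    """Hypothetical tracker that only accepts steps in the agreed order."""

    def __init__(self):
        self.completed = []

    def complete(self, step):
        # The next allowed step is the first one not yet completed.
        if len(self.completed) < len(STEPS):
            expected = STEPS[len(self.completed)]
        else:
            expected = None
        if step != expected:
            raise ValueError(f"expected step {expected!r}, got {step!r}")
        self.completed.append(step)

    def ready_to_merge(self):
        return tuple(self.completed) == STEPS


session = DebugSession()
session.complete("reproduce")
session.complete("isolate")
```

Calling `session.complete("run_tests")` at this point raises `ValueError`, because no patch has been proposed yet, and `ready_to_merge()` stays `False` until the root cause is documented.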

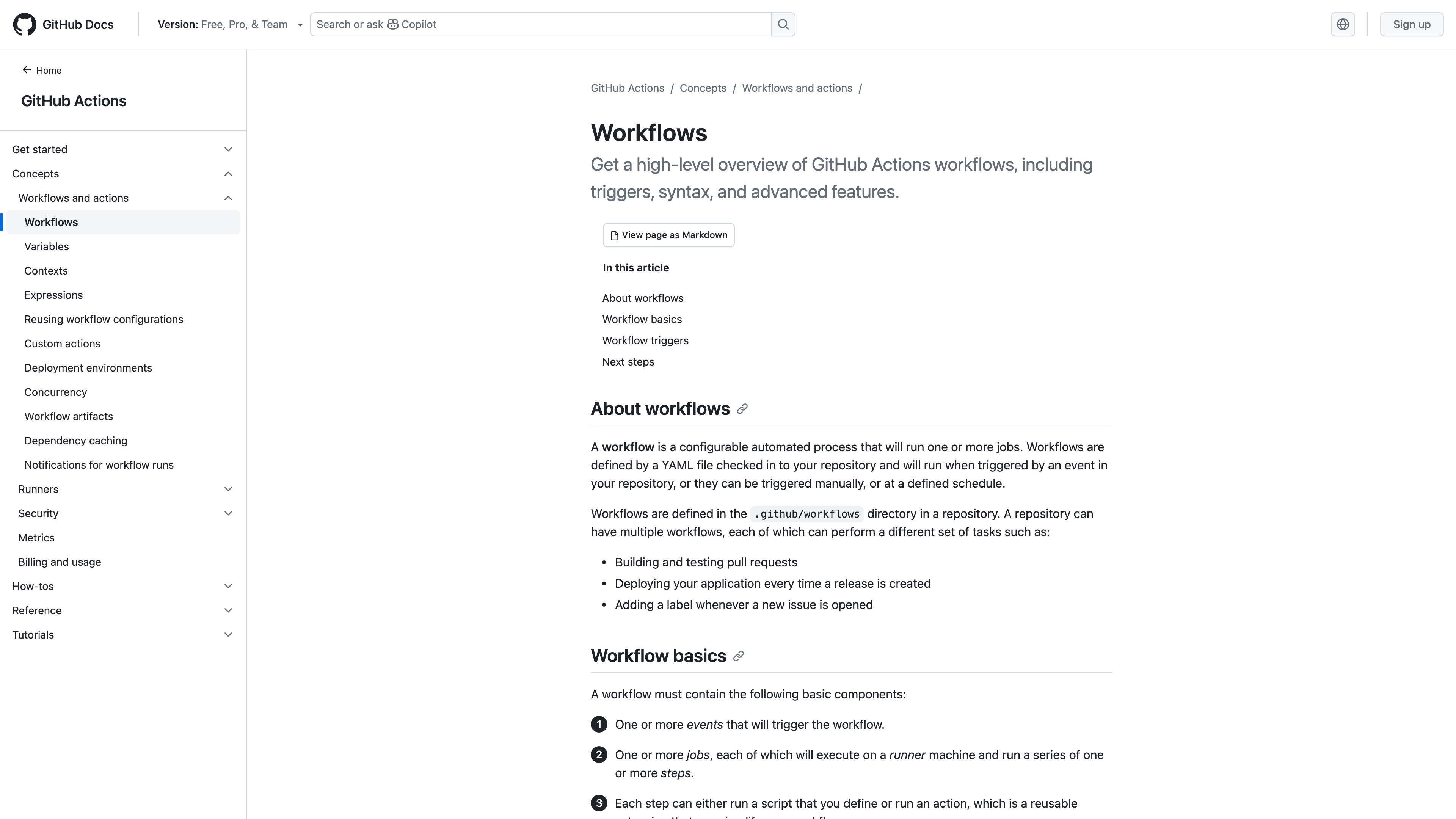

For team rollout, connect this workflow to the CI/CD (continuous integration and continuous delivery) policy described in AI Code Review Tools Explained.

Common Mistakes

Quick Answer: The biggest mistakes are missing reproduction data, accepting first output blindly, and skipping regression tests after a generated patch.

Think of blind acceptance as running `git push` straight to production. Even good model suggestions can introduce hidden edge-case failures.
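A short set of regression checks makes those edge cases visible before merge. The sketch below assumes a generated patch for a retry helper; `call_with_retry` and `scripted` are hypothetical names, and the patched behavior shown is one plausible fix, not the only one.

```python
def call_with_retry(fetch, attempts=3):
    """Hypothetical patched helper: retries on TimeoutError and HTTP 429."""
    last_error = None
    for _ in range(attempts):
        try:
            status = fetch()
        except TimeoutError as exc:
            last_error = exc  # remember the timeout and try again
            continue
        if status != 429:
            return status
        last_error = None  # rate limited, not timed out
    if last_error is not None:
        raise last_error  # the final attempt timed out
    return 429  # the final attempt was still rate limited


def scripted(*responses):
    """Fake upstream call that replays status codes or raises exceptions."""
    queue = list(responses)

    def fetch():
        item = queue.pop(0)
        if isinstance(item, Exception):
            raise item
        return item

    return fetch


def run_regression_checks():
    # The original bug: one timeout followed by success.
    assert call_with_retry(scripted(TimeoutError("t"), 200)) == 200
    # Existing behavior that the patch must not break.
    assert call_with_retry(scripted(200)) == 200
    assert call_with_retry(scripted(429, 200)) == 200
    # Edge case: a persistent timeout must still surface as an error.
    try:
        call_with_retry(scripted(TimeoutError("a"), TimeoutError("b")), attempts=2)
    except TimeoutError:
        return True
    return False
```

The last check is the kind a first-draft patch often misses: a fix that swallows every timeout would pass the first three assertions and silently hide a real outage.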

If you want reusable prompts for debugging and tests, continue to Best AI Prompts for Developers.

Verdict

Quick Answer: AI debugging works best as a structured assistant loop, not an unbounded chat thread.

When teams standardize prompts and require test evidence, debugging speed rises without increasing defect risk. When teams treat AI output as final truth, regressions rise quickly.

Next, harden your review process with AI Code Review Tools Explained.

FAQ

Quick Answer: These are the practical questions developers ask before rolling an AI coding tool into real projects, teams, and delivery pipelines.

Can AI fix production bugs reliably?

It can accelerate diagnosis and patch drafting, but reliability depends on your test and review process.

What should I include in a debugging prompt?

Error trace, environment, expected behavior, constraints, and desired output format.

Should I let AI rewrite entire files to fix one bug?

Usually no. Ask for minimal diff patches first, then widen scope only if needed.
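To check whether a suggested patch really is minimal, you can count the lines it touches. This is a rough Python sketch using the standard-library `difflib` module; the function name `changed_line_count` and the sample strings are illustrative assumptions.

```python
import difflib


def changed_line_count(before: str, after: str) -> int:
    """Count lines removed from `before` plus lines added in `after`."""
    diff = difflib.ndiff(before.splitlines(), after.splitlines())
    # ndiff prefixes removed lines with "- " and added lines with "+ ".
    return sum(1 for line in diff if line.startswith(("- ", "+ ")))


original = "status = fetch()\nreturn status\n"
patched = "status = fetch_with_retry()\nreturn status\n"
touched = changed_line_count(original, patched)
```

A large count for a one-line bug is a signal to ask the assistant for a narrower patch before reviewing it in detail.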

How do I avoid hallucinated fixes?

Provide concrete evidence and require tests plus root-cause explanation.
