Local AI workflows are moving from niche to mainstream because developer teams need stronger privacy controls and more predictable costs. The local path is not about rejecting cloud tooling; it is about deciding where each task should run for the best engineering outcome.

This guide gives you a practical blueprint for that decision. We cover hardware, stack choices, rollout workflows, and governance patterns you can apply immediately.

When to Go Local vs Cloud for AI Coding

Quick Answer: Go local when privacy, deterministic cost, or offline reliability matters most; stay cloud-first when you need the fastest model upgrades and shared team context.

The local-versus-cloud decision is less about ideology and more about constraints. Think of it like choosing between owning a workshop and renting industrial machinery: ownership gives control and predictable boundaries, while rental gives scale and access to the newest equipment. Developers should make the same decision by mapping data sensitivity, latency tolerance, and upgrade cadence.

Local execution shines when source code cannot leave the workstation or corporate network, when internet outages are common, or when teams need stable monthly cost ceilings. Cloud tools still win when model quality iteration is the priority, especially in fast-moving teams where prompt libraries and shared contexts need to sync across many engineers. The mistake is pretending one mode dominates every use case; mature teams run hybrid by default.

| Decision Signal | Choose Local | Choose Cloud |
|---|---|---|
| Data sensitivity | Regulated or proprietary code must stay on-device | Data controls already approved with provider |
| Latency profile | Sub-second responses needed during offline pair programming | Slight latency acceptable for higher-quality outputs |
| Cost predictability | Fixed workstation budget and no token variance | Usage-based billing aligns with bursty workloads |

What should you do this week? Run a two-lane pilot: one developer uses a local stack for three full workdays while another mirrors tasks in cloud tooling. Compare defect rate, time-to-first-draft, and rework volume. Decide with data, not preferences.
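One way to keep the pilot comparison honest is to record every task the same way in both lanes and compute the three metrics from those records. The sketch below assumes a hypothetical `TaskRecord` shape with made-up sample values; the field names and the definition of rework (lines changed after the first draft divided by lines generated) are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRecord:
    """One pilot task. All field names are hypothetical."""
    lane: str                      # "local" or "cloud"
    minutes_to_first_draft: float
    defects_found: int             # defects caught in review or testing
    lines_generated: int
    lines_reworked: int            # lines changed after the first draft


def summarize(records: list[TaskRecord], lane: str) -> dict[str, float]:
    """Aggregate the three pilot metrics for one lane."""
    rows = [r for r in records if r.lane == lane]
    if not rows:
        raise ValueError(f"no records for lane {lane!r}")
    total_lines = sum(r.lines_generated for r in rows)
    reworked = sum(r.lines_reworked for r in rows)
    return {
        "tasks": len(rows),
        "avg_minutes_to_first_draft": mean(r.minutes_to_first_draft for r in rows),
        "defects_per_task": sum(r.defects_found for r in rows) / len(rows),
        "rework_ratio": reworked / total_lines if total_lines else 0.0,
    }


# Made-up sample data purely to show the shape of the comparison.
records = [
    TaskRecord("local", 12.0, 1, 200, 40),
    TaskRecord("local", 8.0, 0, 100, 20),
    TaskRecord("cloud", 6.0, 1, 150, 15),
]

for lane in ("local", "cloud"):
    print(lane, summarize(records, lane))
```

Three workdays produce a small sample, so treat the output as a prompt for discussion rather than a statistically firm result.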

To connect this workflow with your existing stack, link the rollout to the pillar guide, AI debugging workflows, AI code review controls, model behavior mechanics, and prompt templates for developers. That internal map keeps teams from treating each article as an isolated tactic.

Why Developers Choose Local AI: Privacy, Cost, and Control

Quick Answer: Developers choose local stacks to keep code private, avoid unpredictable token bills, and tune model behavior to their own repositories.

Privacy is usually the headline, but control is the deeper reason local adoption keeps growing. It works like using your own version control mirror in a high-compliance environment: you move slower on upgrades, yet you know exactly where data flows and who can access it. For teams under contractual code-handling obligations, that certainty is operationally valuable.

Cost behavior is the second driver. Token-based cloud usage can spike during refactor weeks, while local workloads are tied to hardware amortization and power cost. Local execution also gives engineers direct control over context windows, quantization choices, and model swapping, which lets them optimize for specific tasks such as test generation versus architecture review.

A hidden gotcha appears when teams assume local equals secure by default. It does not. You still need endpoint hardening, encrypted model caches, and access controls around prompt history. The practical fix is to treat local assistants like any internal developer platform service with authentication, logging, and patch policies.

- Publish a one-page local AI data handling policy before broad rollout.

- Require disk encryption on workstations running model artifacts.

- Log model version, prompt source, and output destination for auditability.
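The logging bullet can start as one JSON line per assistant call. This is a minimal sketch: the `audit_record` helper, the field names, the sample values, and the choice to store a hash of the prompt instead of its text are all assumptions, not a prescribed schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(model_version: str, prompt_source: str,
                 output_destination: str, prompt_text: str) -> str:
    """Return one JSON line describing a single assistant call."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "prompt_source": prompt_source,
        "output_destination": output_destination,
        # Store a hash rather than raw text so the log holds no source code.
        "prompt_sha256": hashlib.sha256(prompt_text.encode("utf-8")).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    model_version="example-model:7b-q4",            # hypothetical model tag
    prompt_source="prompts/bugfix.md",              # hypothetical template path
    output_destination="src/billing/invoice.py",    # hypothetical target file
    prompt_text="Fix the rounding bug in compute_total().",
)
print(line)
```

Appending these lines to a file that only the platform owner can write keeps the audit trail separate from the prompt history it describes.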

Hardware Sizing: CPU, GPU, and Memory Tradeoffs

Quick Answer: Hardware sizing should begin with target latency and model size, then work backward to graphics memory, system memory, and storage bandwidth.

Hardware planning for local AI is like sizing a build server: under-provision and every task feels sluggish, over-provision and budget is wasted. Start from the user experience you want. If developers need near real-time code edits, throughput and memory bandwidth matter more than peak benchmark headlines.

For most coding workflows, memory is the first hard limit. A model that technically runs but swaps heavily to disk will feel unusable in editor loops. Teams should document target latency per prompt type, then choose quantization and context settings that stay within memory limits with headroom for IDE and browser workloads.
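A rough memory budget can be estimated before buying anything. The sketch below uses two common approximations: weight size is parameter count times bits per weight divided by eight, and the key/value cache grows linearly with context length. The model shape (7 billion parameters, 32 layers, 8 key/value heads, head dimension 128) is a hypothetical example, and real quantized files carry some extra overhead, so read the result as a lower bound.

```python
GIB = 1024 ** 3


def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Approximate key/value cache size for one sequence at full context."""
    # Leading 2 = one key tensor plus one value tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


def fits(total_bytes: float, memory_bytes: float, headroom: float = 0.2) -> bool:
    """True if the estimate fits while leaving `headroom` of memory free."""
    return total_bytes <= memory_bytes * (1 - headroom)


# Hypothetical 7B-parameter model quantized to 4 bits per weight.
weights = weight_bytes(7e9, 4)
cache = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, context_tokens=8192)
total = weights + cache

print(f"weights ~{weights / GIB:.2f} GiB, KV cache ~{cache / GIB:.2f} GiB")
for memory_gib in (4, 8):
    verdict = "fits" if fits(total, memory_gib * GIB) else "too tight"
    print(f"{memory_gib} GiB of graphics memory: {verdict}")
```

The `headroom` parameter stands in for the IDE, browser, and runtime buffers mentioned above; 20 percent is an arbitrary starting value to tune against real profiling.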

| Technical Requirement | Potential Risk | Learner's First Step |
|---|---|---|
| Sufficient graphics memory for chosen model | Frequent out-of-memory crashes or aggressive down-quantization | Benchmark one representative model at your real context length |
| At least 32 GB system memory for multitool workflows | IDE plus model contention causes latency spikes | Profile memory during peak dev sessions |
| Fast local storage | Model load time kills flow | Move model artifacts to NVMe and measure cold-start time |

Worked example: one platform team compared two workstation tiers for 14 developers. Tier A averaged 7.8 seconds per response on their daily code-assistant prompts, while Tier B averaged 2.9 seconds. The faster tier cost about 38 percent more per seat but reduced developer waiting time by roughly 31 minutes per engineer per day, which paid back in under five months under their loaded labor model.
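The arithmetic behind a payback claim like this is easy to rerun with your own numbers. In the sketch below, the response times, the 38 percent premium, and the 31 minutes saved come from the example above; the base seat cost, loaded hourly rate, and workdays per month are hypothetical placeholders, since the example does not state them.

```python
# Figures stated in the worked example.
SLOW_SECONDS = 7.8
FAST_SECONDS = 2.9
MINUTES_SAVED_PER_DAY = 31
SEAT_PREMIUM = 0.38             # faster tier costs about 38 percent more

# Hypothetical inputs, NOT from the example: replace with your own.
BASE_SEAT_COST = 6000.0         # cost of the slower workstation
LOADED_HOURLY_RATE = 50.0       # loaded labor cost per engineer hour
WORKDAYS_PER_MONTH = 21


def implied_prompts_per_day(slow_s: float, fast_s: float, minutes_saved: float) -> float:
    """Prompts per day needed for the per-prompt gap to add up to the saving."""
    return minutes_saved * 60 / (slow_s - fast_s)


def payback_months(base_cost: float, premium: float, minutes_saved: float,
                   hourly_rate: float, workdays: int) -> float:
    """Months until the extra hardware spend equals the labor time recovered."""
    extra_cost = base_cost * premium
    monthly_saving = minutes_saved / 60 * hourly_rate * workdays
    return extra_cost / monthly_saving


prompts = implied_prompts_per_day(SLOW_SECONDS, FAST_SECONDS, MINUTES_SAVED_PER_DAY)
months = payback_months(BASE_SEAT_COST, SEAT_PREMIUM, MINUTES_SAVED_PER_DAY,
                        LOADED_HOURLY_RATE, WORKDAYS_PER_MONTH)

print(f"implied prompts per engineer per day: {prompts:.0f}")
print(f"payback with the placeholder inputs: {months:.1f} months")
```

With the stated figures, the 31-minute saving implies roughly 380 prompts per engineer per day, which is a useful sanity check on whether your own usage resembles the example. The payback figure depends entirely on the placeholder inputs and assumes all saved waiting time turns into productive work.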

Local Stack Comparison: Ollama, GPT4All, Oobabooga, and Codex CLI

Quick Answer: Different local stacks optimize for different goals: raw control, convenience, user interface depth, or command-line automation.

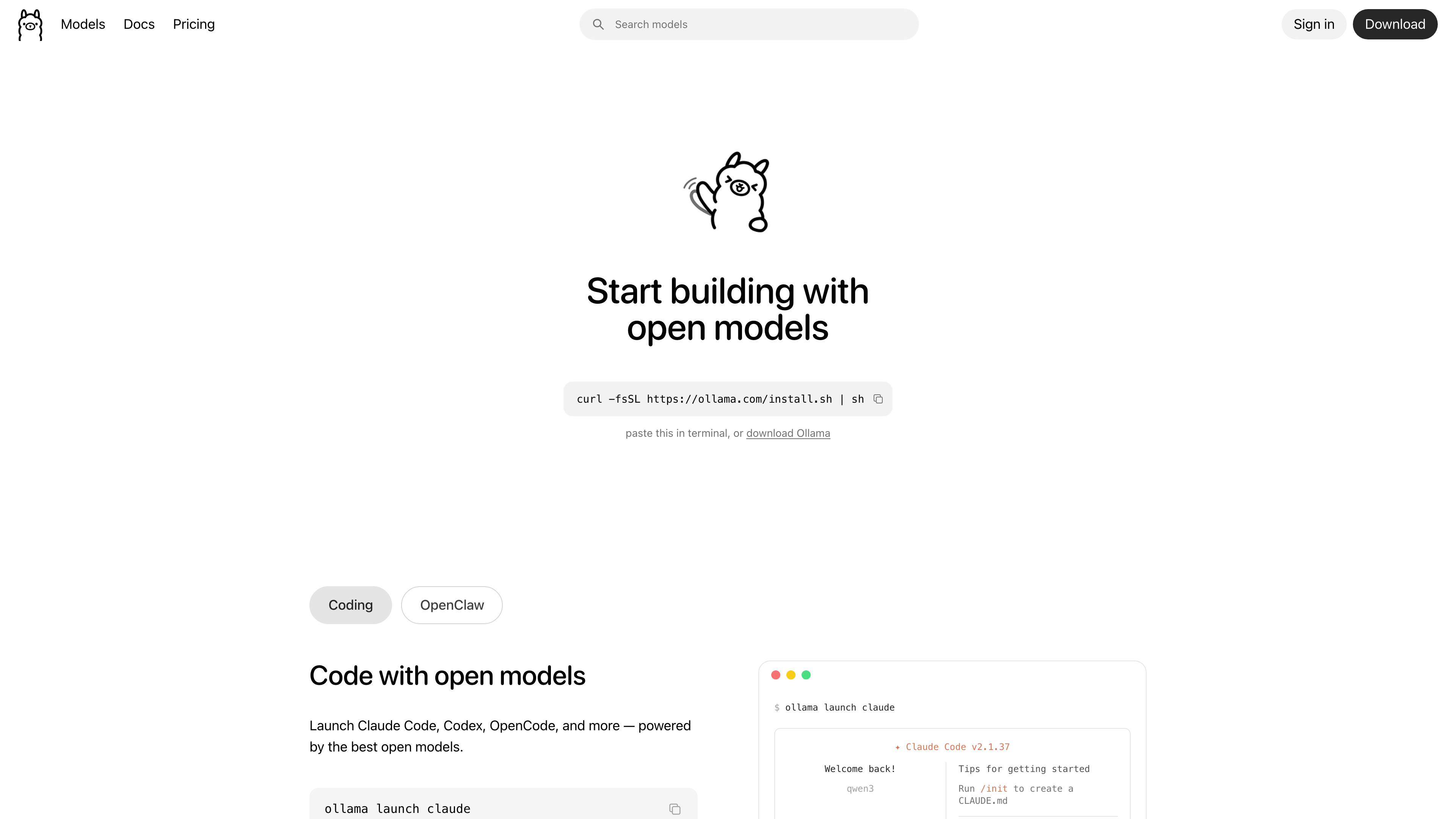

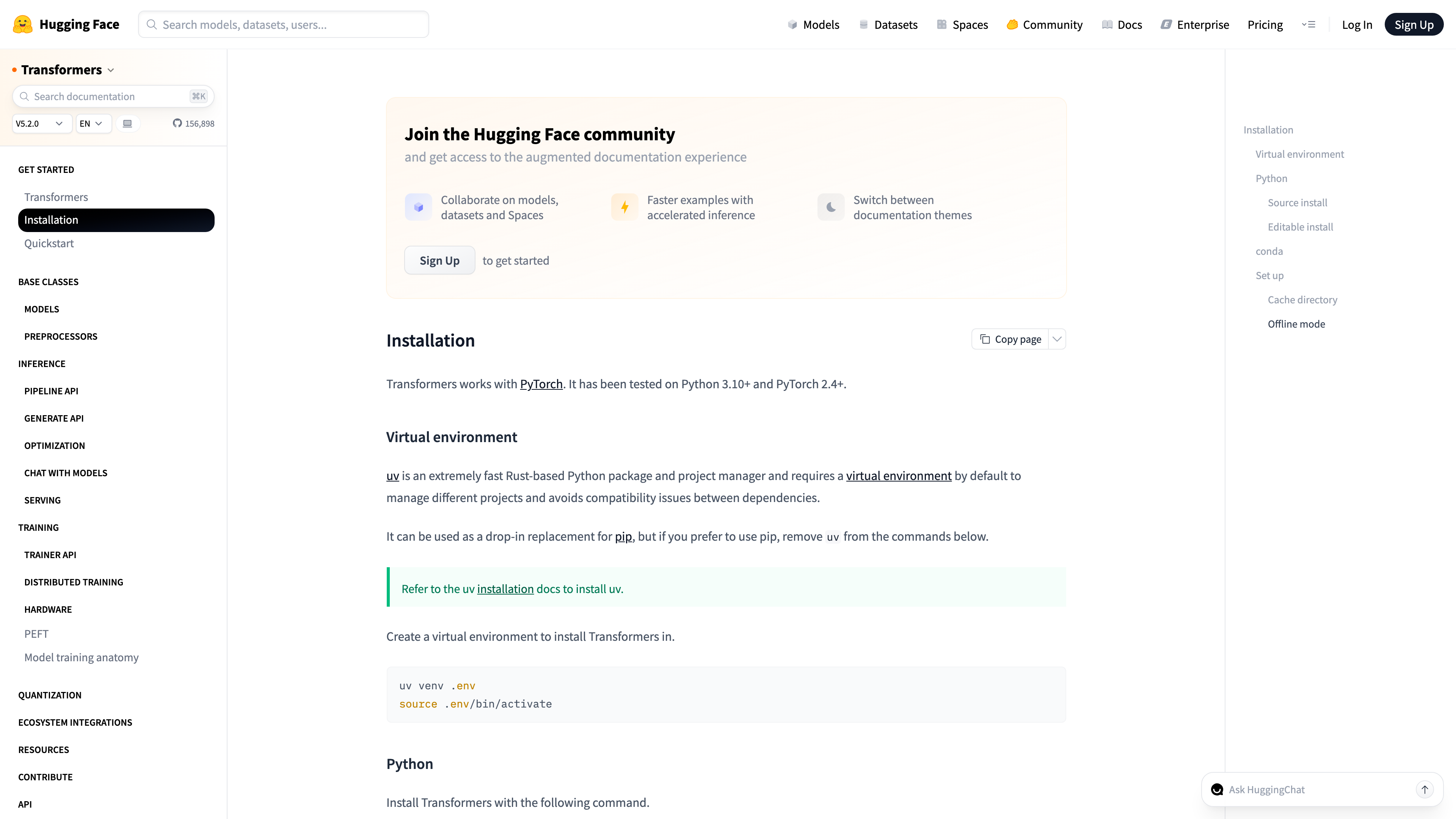

Choosing a local stack is similar to choosing a terminal setup: the right answer depends on whether your team values speed, ergonomics, or deep customization. Ollama gives straightforward model lifecycle management, GPT4All focuses on accessible desktop usage, and text-generation-webui from Oobabooga exposes broad tuning surfaces for advanced operators.

Command-line flows matter for developers who want AI inside scripts and pre-commit checks. That is where command-first assistants, including Codex CLI workflows, help because they fit the existing engineering rhythm instead of forcing context switching into separate apps. The broader lesson is to score tools against workflow fit, not feature count.

| Stack | Best Fit | What to Watch |
|---|---|---|
| Ollama | Fast local model serving with minimal setup | May need extra orchestration for multi-user team governance |
| GPT4All | Individual developer desktop productivity | Fewer enterprise policy controls out of the box |
| Oobabooga web UI | Advanced experimentation and prompt tuning | Configuration complexity can overwhelm new users |

This week, run a controlled bake-off on one real task type, such as writing migration tests. Keep the prompt and context constant across tools, and score output for correctness, time-to-usable-output, and review effort. A one-page scorecard prevents preference-driven decisions.
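A one-page scorecard can be reduced to a weighted sum per tool. The weights, the 1-to-5 scale, the tool names, and the scores below are all hypothetical; the point of the sketch is only that the weights are agreed before anyone looks at the results.

```python
# Hypothetical weights, agreed before the bake-off. They must sum to 1.
WEIGHTS = {
    "correctness": 0.5,
    "time_to_usable_output": 0.3,
    "review_effort": 0.2,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Combine 1-5 criterion scores (higher is better) into one number."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def rank(scorecard: dict[str, dict[str, int]]) -> list[str]:
    """Return tool names ordered from best to worst weighted score."""
    return sorted(scorecard, key=lambda tool: weighted_score(scorecard[tool]), reverse=True)


# Made-up scores for two placeholder tools on one task type.
scorecard = {
    "tool_a": {"correctness": 4, "time_to_usable_output": 3, "review_effort": 3},
    "tool_b": {"correctness": 3, "time_to_usable_output": 5, "review_effort": 4},
}

for tool in rank(scorecard):
    print(f"{tool}: {weighted_score(scorecard[tool]):.2f}")
```

Changing the weights after seeing the scores defeats the purpose, so it helps to commit the `WEIGHTS` table alongside the prompt and context used for the bake-off.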

Setup Workflow and Prompt Patterns for Local Development

Quick Answer: Local success comes from disciplined setup: repository context curation, deterministic prompts, and automated verification after generation.

Local assistants perform best when you treat them like internal tools, not magical chat boxes. It is comparable to onboarding a new teammate: they need architecture context, coding standards, and clear acceptance criteria before they can contribute reliably. The same is true for prompts and local retrieval indexes.

A practical setup pattern is three layers: first, seed the assistant with repository conventions; second, use task-specific prompt templates; third, enforce post-generation checks through linters, tests, and security scanners. This layered approach reduces output variance and makes local usage sustainable across a team instead of tied to one prompt expert.

- Create a `project-context.md` file with architecture boundaries and naming conventions.

- Store reusable prompts for bugfixes, tests, and refactors in version control.

- Add a post-output command chain: format, lint, unit test, and dependency scan.

- Capture accepted prompt patterns in your engineering handbook each sprint.
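The post-output command chain from the list above can be a small runner that stops at the first failing step. This is a sketch under assumptions: `run_chain` is a hypothetical helper, and `EXAMPLE_STEPS` shows one possible chain for a Python project that has `ruff`, `pytest`, and `pip-audit` installed. The demo at the bottom uses stand-in commands so the block runs anywhere.

```python
import subprocess
import sys

# One possible chain, assuming these tools are installed in the project.
EXAMPLE_STEPS = [
    ("format", ["ruff", "format", "."]),
    ("lint", ["ruff", "check", "."]),
    ("unit test", ["pytest", "-q"]),
    ("dependency scan", ["pip-audit"]),
]


def run_chain(steps: list[tuple[str, list[str]]]) -> tuple[bool, list[str]]:
    """Run steps in order and stop at the first failure.

    Returns (all_passed, names_of_steps_that_were_run).
    """
    ran = []
    for name, command in steps:
        ran.append(name)
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            print(f"{name} failed:\n{result.stdout}{result.stderr}", file=sys.stderr)
            return False, ran
    return True, ran


# Stand-in commands so the demo does not depend on any external tool.
demo_steps = [
    ("format", [sys.executable, "-c", "pass"]),
    ("lint", [sys.executable, "-c", "raise SystemExit(1)"]),
    ("unit test", [sys.executable, "-c", "pass"]),
]
ok, ran = run_chain(demo_steps)
print("passed" if ok else f"stopped after: {ran[-1]}")
```

Stopping early keeps feedback fast: there is little value in running a dependency scan on generated code that does not yet pass lint.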

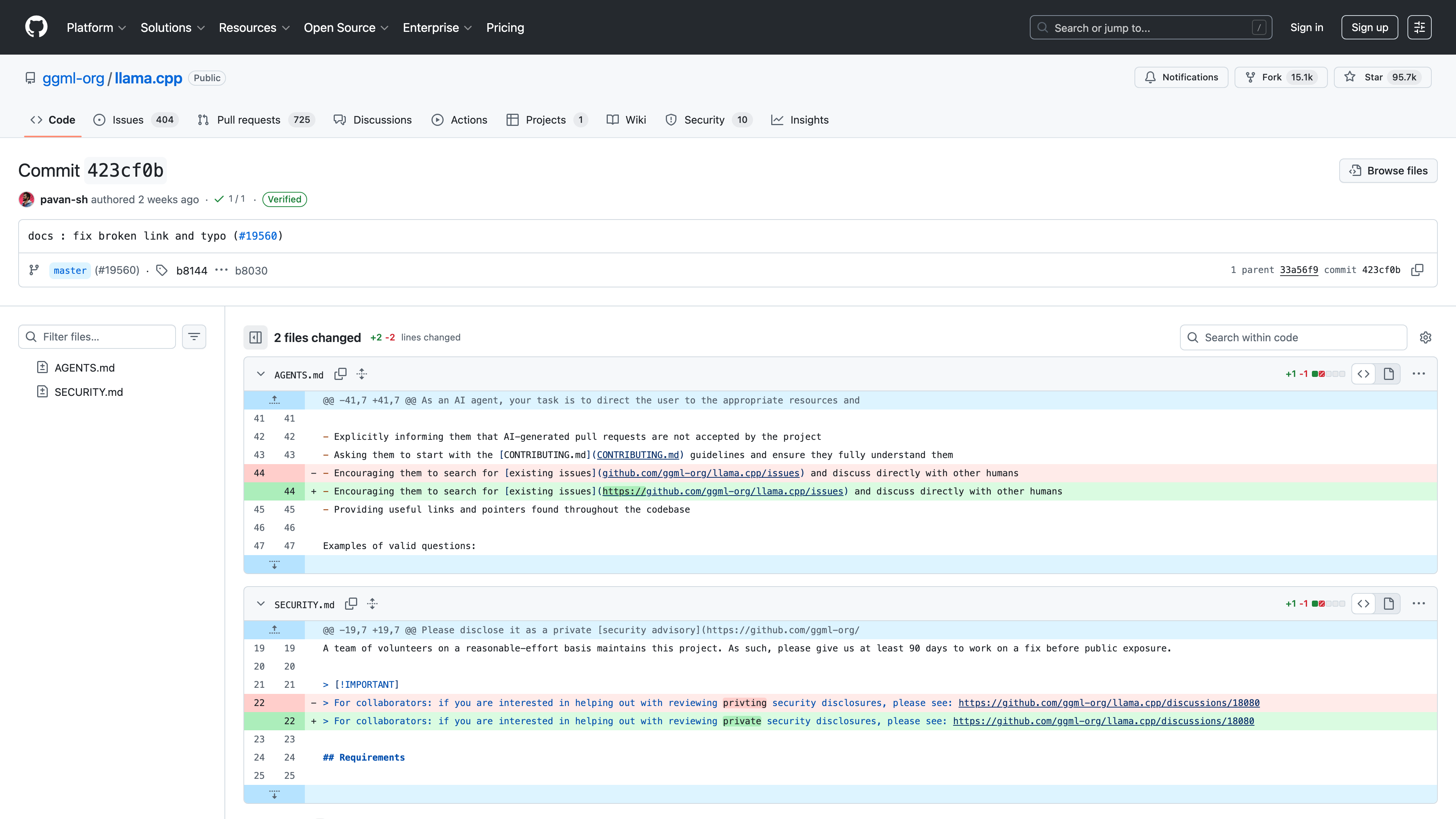

Many teams skip prompt versioning and lose hard-won quality when engineers rotate. The fix is simple: treat prompts as code. Review them, version them, and deprecate low-performing templates as you would any other internal artifact.
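Treating prompts as code can start with a small versioned template type kept in the repository. The `PromptTemplate` class, its fields, and the sample template below are hypothetical; the sketch relies only on the standard library's `string.Template`.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    """A reviewed, versioned prompt. Field names are illustrative."""
    name: str
    version: str
    body: str
    deprecated: bool = False

    def render(self, **values: str) -> str:
        """Fill in placeholders; refuse to render deprecated templates."""
        if self.deprecated:
            raise ValueError(f"{self.name} v{self.version} is deprecated")
        # substitute() raises KeyError when a placeholder has no value,
        # which surfaces incomplete prompts before they reach the model.
        return Template(self.body).substitute(**values)


unit_test_prompt = PromptTemplate(
    name="unit-test",
    version="1.2.0",
    body="Write unit tests for $function_name in $file_path. Follow project-context.md.",
)

print(unit_test_prompt.render(function_name="compute_total", file_path="billing/invoice.py"))
```

Because the template is plain data in version control, a change to its wording goes through the same review and history as any other code change.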

Enterprise Offline Deployment and Governance

Quick Answer: Enterprise offline deployments require the same governance maturity as any internal platform: identity controls, model lifecycle management, and measurable service objectives.

Enterprise offline AI is often described as a technology project, but it behaves more like a platform program. Think of it like running an internal package registry: once teams depend on it, uptime, patch cadence, and support expectations become business-critical. Governance cannot be bolted on after adoption; it has to be designed in from day one.

Use a phased rollout: pilot in one product team, then expand to neighboring services with similar risk profiles. During the pilot, define service-level objectives for model response latency, uptime, and incident response. Tie those objectives to named owners. If no owner exists for model updates, security patching, and prompt data retention, your offline rollout will stall in the first audit cycle.
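Service-level objectives only help if someone checks them against measurements. The sketch below compares a nearest-rank 95th-percentile latency and an uptime ratio with target values; the objectives, the sample data, and the function names are hypothetical, and a real deployment would read these numbers from its monitoring system.

```python
# Hypothetical objectives for the pilot; set your own.
OBJECTIVES = {
    "p95_latency_seconds": 3.0,    # 95% of responses at or under this
    "uptime_ratio": 0.995,         # fraction of minutes the service was up
}


def p95(samples: list[float]) -> float:
    """Nearest-rank 95th percentile of the samples."""
    if not samples:
        raise ValueError("no latency samples")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100    # integer ceil(0.95 * n)
    return ordered[rank - 1]


def check_slo(latencies: list[float], up_minutes: int, total_minutes: int) -> dict[str, bool]:
    """Return pass/fail for each objective."""
    return {
        "p95_latency_seconds": p95(latencies) <= OBJECTIVES["p95_latency_seconds"],
        "uptime_ratio": up_minutes / total_minutes >= OBJECTIVES["uptime_ratio"],
    }


# Made-up measurements: 20 latency samples and one 30-day month in minutes.
latencies = [0.5] * 18 + [2.0, 6.0]
report = check_slo(latencies, up_minutes=43_100, total_minutes=43_200)
print(report)
```

A percentile is used instead of the mean so that a handful of slow cold starts shows up in the report without being averaged away.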

| Governance Control | Failure If Missing | Implementation Trigger |
|---|---|---|
| Model inventory and version policy | Teams run stale models with inconsistent behavior | Enable before second team onboarding |
| Prompt and output retention policy | Audit gaps during compliance review | Define during pilot kickoff |
| Access control with least privilege | Sensitive repository data leaks internally | Mandatory before production datasets |

The near-term win is operational confidence: developers can move quickly while security and compliance teams can still answer who accessed what, when, and why. That balance is what makes local AI sustainable instead of temporary experimentation.

aicourses.com Verdict

Quick Answer: These workflows produce the best outcomes when teams treat AI as a reliability and delivery multiplier, not a replacement for engineering judgment.

Local AI is a serious production option when you treat it as platform engineering, not a side project. The right stack can deliver excellent developer experience while meeting strict data boundaries.

Start with a constrained hybrid rollout, gather latency and quality evidence, and only then scale to wider teams. This keeps adoption grounded and avoids expensive workstation overbuilds.

Once local workflows are stable, connect them to Cross-Platform AI Development Toolchains to ensure your architecture stays coherent across clients and services.

FAQ

Quick Answer: These are the questions teams typically ask when they move from experiments to production adoption and governance.

Do local models match cloud model quality?

For many coding tasks they are sufficient, but frontier cloud models may still outperform on complex reasoning.

How much memory do I need for local coding assistants?

Many teams start at 32 GB system memory and size upward based on context length and concurrency.

Is local AI automatically compliant?

No. You still need governance controls, audit logs, and endpoint hardening.

Can local AI work fully offline?

Yes, if models and dependencies are installed ahead of time and workflows avoid cloud-only services.

What is the best first local stack to test?

Ollama is a common starting point because setup is straightforward and model switching is simple.

Should teams abandon cloud AI after local adoption?

Usually no. A hybrid model gives the best balance of privacy, quality, and flexibility.
