Developer teams in 2026 are not asking whether to use AI (artificial intelligence) coding tools anymore. They are asking which assistant gives measurable speed without creating security debt, merge noise, or policy chaos.

We reviewed product docs, pricing pages, and published productivity evidence from official sources, then mapped those findings into a practical decision framework. The goal here is not hype. The goal is helping you pick a tool that survives real sprint pressure.

What Is AI for Developers?

Quick Answer: AI for developers means tools that generate, review, and explain code while keeping humans in control of architecture, security, and release decisions.

Think of an AI coding assistant like a strong junior pair programmer who never sleeps but still needs supervision. It can draft boilerplate, suggest fixes, and explain unfamiliar APIs (application programming interfaces), which compresses the blank-page phase of development.

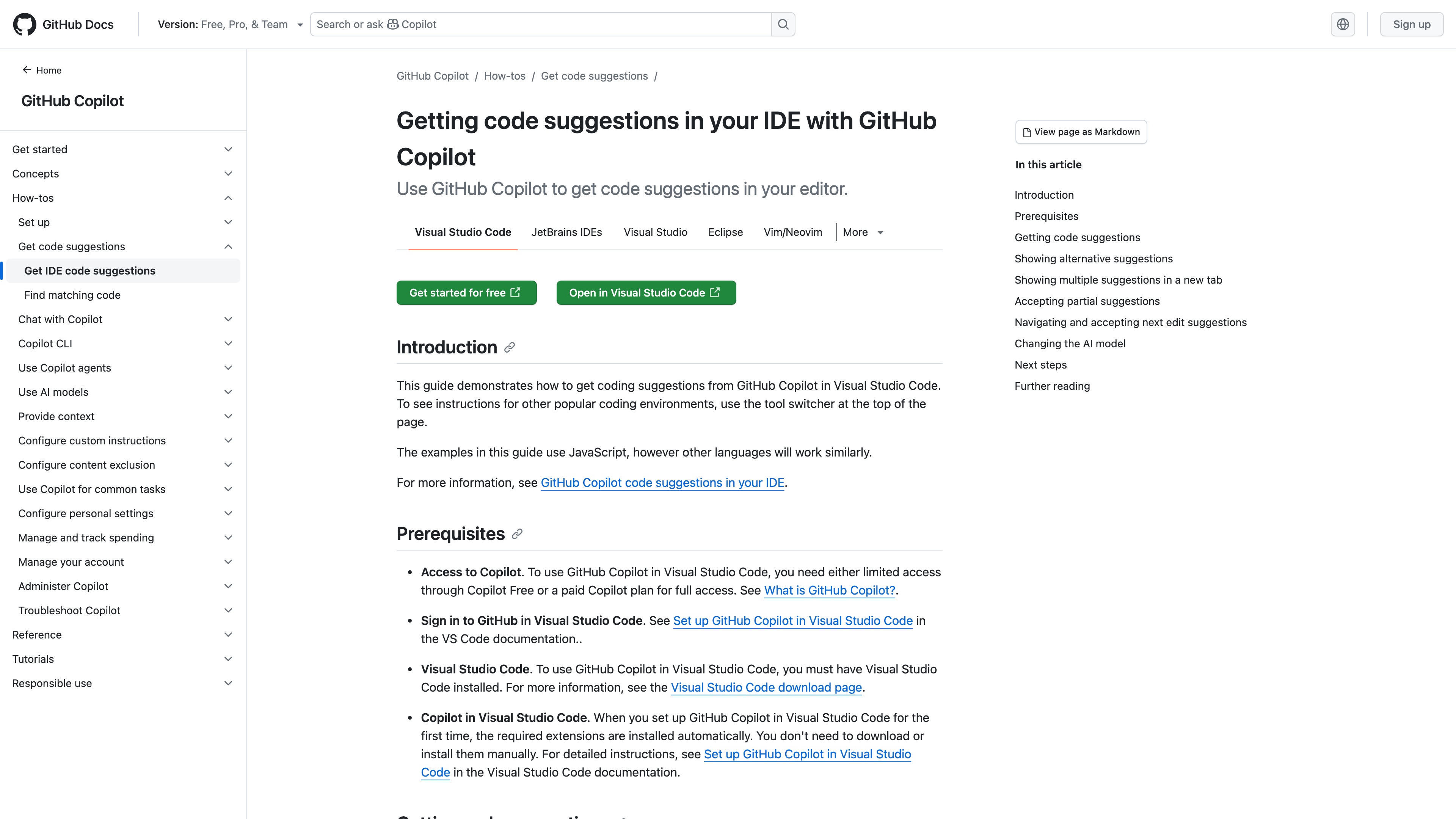

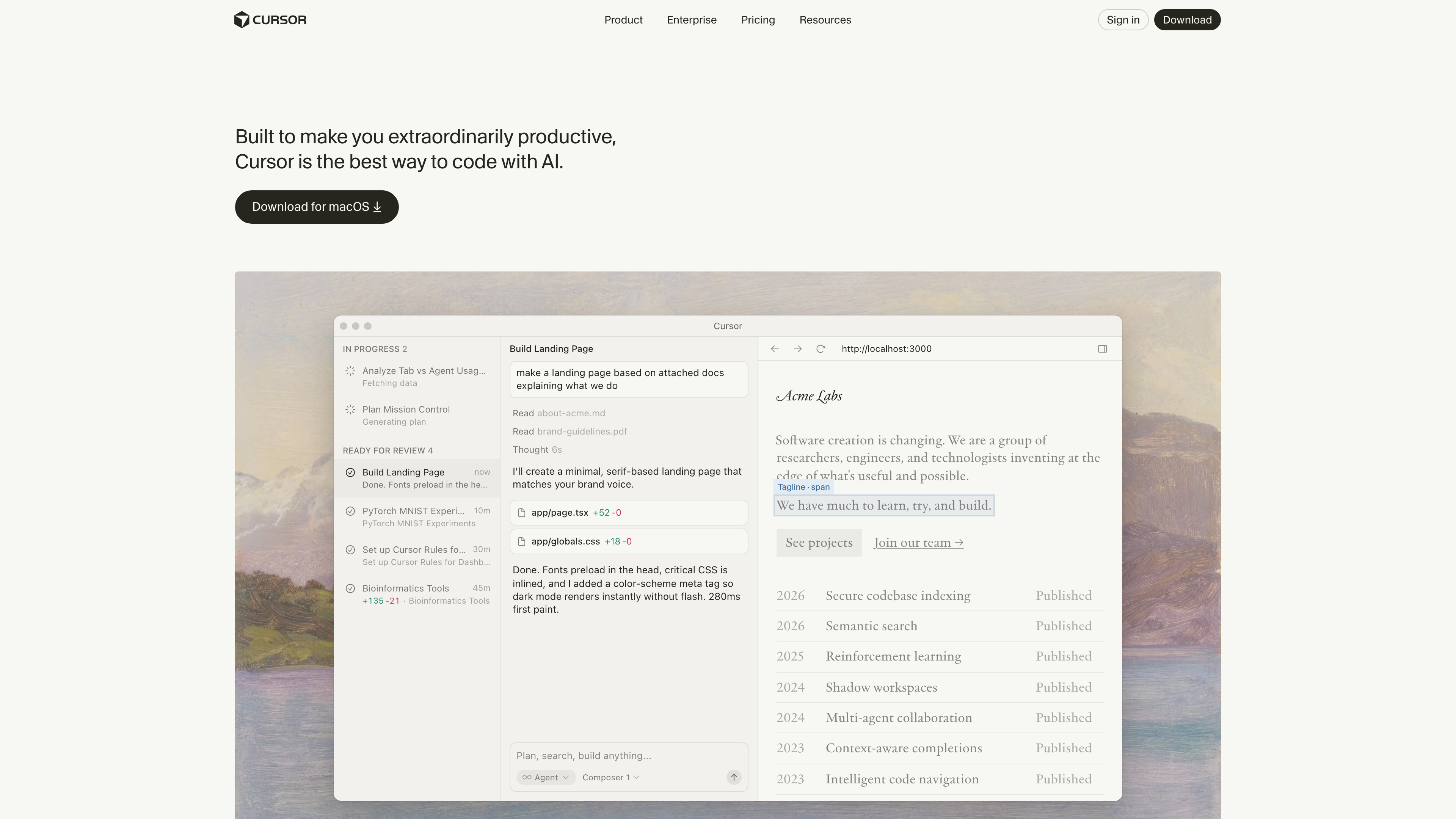

According to GitHub’s Copilot documentation and Cursor’s overview docs, modern assistants now blend inline completions, chat-driven edits, and repository-aware actions. The practical shift for teams in 2026 is moving from one-off autocomplete to workflow-aware coding support.

If you want tool-level detail after this overview, continue with Cursor AI Complete Guide, GitHub Copilot Deep Review, and Cursor vs Copilot vs Codeium.

How AI Coding Tools Work (LLMs, Embeddings, Context Windows)

Quick Answer: Most coding assistants combine an LLM (Large Language Model), embeddings (numeric meaning vectors), and context windows (how much text the model can read in one pass).

Think of the system as a three-stage relay: fetch context, reason over context, then propose edits. Embeddings (numeric representations of semantic meaning) help retrieve the most relevant code chunks, while the context window (the model’s current reading space) constrains how much can be considered at once.

The Hugging Face LLM tutorial and Pinecone semantic search docs explain why retrieval quality drives generation quality. In practice, teams get stronger outputs by structuring repos, naming files consistently, and writing better issue context.
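To make the retrieval and context-window ideas concrete, here is a minimal sketch in plain Python. It assumes toy three-dimensional embeddings and a rough "four characters per token" estimate; the helper names (`cosine_similarity`, `estimate_tokens`, `select_context`) are hypothetical and not taken from any particular assistant or library.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def estimate_tokens(text):
    """Crude token estimate (assumption: roughly 4 characters per token)."""
    return max(1, len(text) // 4)

def select_context(query_vec, chunks, token_budget):
    """Rank (text, embedding) pairs by similarity, then keep those that fit the budget."""
    ranked = sorted(
        chunks,
        key=lambda chunk: cosine_similarity(query_vec, chunk[1]),
        reverse=True,
    )
    selected, used = [], 0
    for text, _ in ranked:
        cost = estimate_tokens(text)
        if used + cost > token_budget:
            continue  # this chunk would overflow the context window, so skip it
        selected.append(text)
        used += cost
    return selected

# Toy embeddings; real models use hundreds or thousands of dimensions.
chunks = [
    ("async def get_user(user_id): ...", [0.9, 0.1, 0.0]),
    ("def render_footer(): ...", [0.0, 0.2, 0.9]),
    ("def get_user_sync(user_id): ...", [0.8, 0.3, 0.1]),
]
query_vec = [1.0, 0.2, 0.0]
context = select_context(query_vec, chunks, token_budget=16)
```

With this toy data, the two `get_user` chunks are selected and the unrelated footer chunk is dropped because it ranks last and no longer fits the budget. The same trade-off applies at scale: better-ranked chunks leave less of the window wasted on irrelevant code.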

The following minimal sketch shows the retrieve, prompt, generate, and verify loop these assistants run:

```python
# Minimal retrieval + generation loop.
# Pseudocode: vector_index, build_prompt, system_rules, llm, and
# apply_patch_with_tests are placeholders, not a real library API.
query = "Refactor this endpoint to async"
chunks = vector_index.search(query, top_k=6)  # embeddings-based retrieval
prompt = build_prompt(system_rules, chunks, user_request=query)
patch = llm.generate(prompt)
apply_patch_with_tests(patch)
```

Best AI Developer Tools Compared

Quick Answer: Cursor, GitHub Copilot, and Codeium/Windsurf are the most common shortlists in 2026, but the right choice depends on team workflow, governance depth, and budget model.

Think of this decision like choosing a database engine: each option can work, but fit depends on workload and operational constraints. Cursor tends to shine for editor-native agentic workflows, Copilot for broad ecosystem and enterprise control, and Windsurf for cost-sensitive teams that still want integrated AI flows.

Official references include Cursor pricing, GitHub Copilot plans, and Windsurf pricing. The dedicated comparison page goes deeper: Cursor vs Copilot vs Codeium.

| Tool | Best Fit | Primary Tradeoff |
|---|---|---|
| Cursor | Fast solo/team IDE workflows with agent-style edits | Requires tight prompt discipline to avoid over-editing |
| GitHub Copilot | Enterprises already standardized on GitHub tooling | Plan and governance choices need upfront policy work |
| Codeium/Windsurf | Cost-conscious teams wanting integrated editor experience | Ecosystem maturity can vary by workflow depth |

Use Cases by Developer Type (Frontend, Backend, ML, DevOps)

Quick Answer: Different developer roles should use AI differently: frontend for scaffold speed, backend for refactors, ML for experiment loops, and DevOps for pipeline policy automation.

Think of role-based AI usage like role-based access control: the same engine, different boundaries. Frontend developers usually get immediate gains from component scaffolds and test stubs, backend engineers from refactor and migration drafting, ML engineers from faster experiment loops, and DevOps teams from workflow guardrails and CI/CD (continuous integration and continuous delivery) templates.

GitHub and Microsoft documentation on editor and workflow integrations provides practical implementation patterns, including workflow orchestration and pipeline setup.

```javascript
// Frontend example: prompt-guided React refactor request
// Prompt: Convert this component to a controlled form and add loading states.
import { useState } from "react";

function SignupForm({ onSubmit }) {
  const [email, setEmail] = useState("");
  const [loading, setLoading] = useState(false);
  // ...
}
```

```markdown
# Backend example: generated migration review checklist
- Verify idempotency for each migration script
- Add rollback notes for destructive operations
- Run integration tests before applying to production
```

Productivity Benchmarks (Real Examples)

Quick Answer: Benchmark gains are real but uneven: the most common pattern is faster first drafts, while final quality still depends on review rigor and team conventions.

Think of AI productivity as cutting lap time in the middle of the race, not finishing the race for you. GitHub’s controlled experiment reported that developers using Copilot completed a scoped task (writing an HTTP server in JavaScript) about 55% faster than the control group, and the related paper, The Impact of AI on Developer Productivity, documents the same experiment design.

For broader labor evidence outside coding, the NBER summary of Generative AI at Work reports an average productivity gain of about 14% among customer-support agents, with the largest gains (about 34%) among novice and lower-skilled workers. For engineering teams, the likely analogue is faster onboarding, provided it is paired with strong code review.

| Technical Requirement | Potential Risk | Learner's First Step |
|---|---|---|
| Baseline metrics (cycle time, PR size) | Noisy claims of improvement | Capture 2 weeks pre-AI metrics |
| Prompt playbook by task type | Inconsistent quality across developers | Standardize prompts for refactor, tests, docs |
| Review gate in pull requests (PRs) | Hallucinated code merges to main | Require human review + test evidence |
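
The baseline-metrics row above can be turned into a small script. This is a sketch under stated assumptions: the record fields (`opened_at`, `merged_at`, `lines_changed`) and the sample values are hypothetical, and in practice the records would come from your Git hosting provider's export or API.

```python
from datetime import datetime
from statistics import median

def cycle_time_hours(opened_at, merged_at):
    """Hours between a PR being opened and merged (ISO 8601 timestamps)."""
    opened = datetime.fromisoformat(opened_at)
    merged = datetime.fromisoformat(merged_at)
    return (merged - opened).total_seconds() / 3600

def summarize(prs):
    """Median cycle time and median PR size for a list of PR records."""
    return {
        "median_cycle_hours": median(
            cycle_time_hours(pr["opened_at"], pr["merged_at"]) for pr in prs
        ),
        "median_lines_changed": median(pr["lines_changed"] for pr in prs),
    }

# Hypothetical pre-AI sample; a real baseline would cover about two weeks of PRs.
baseline_prs = [
    {"opened_at": "2026-01-05T09:00:00", "merged_at": "2026-01-06T09:00:00", "lines_changed": 120},
    {"opened_at": "2026-01-07T10:00:00", "merged_at": "2026-01-07T22:00:00", "lines_changed": 80},
    {"opened_at": "2026-01-08T08:00:00", "merged_at": "2026-01-10T08:00:00", "lines_changed": 300},
]
baseline = summarize(baseline_prs)
```

Medians are used here instead of means so that a single long-running PR does not dominate the comparison. Running the same `summarize` call on post-rollout PRs gives a like-for-like number to compare against.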

Limitations and Risks (Security, Hallucinations)

Quick Answer: AI coding tools accelerate output, but they can still invent APIs, miss edge cases, or propose insecure defaults, so review automation and human review both matter.

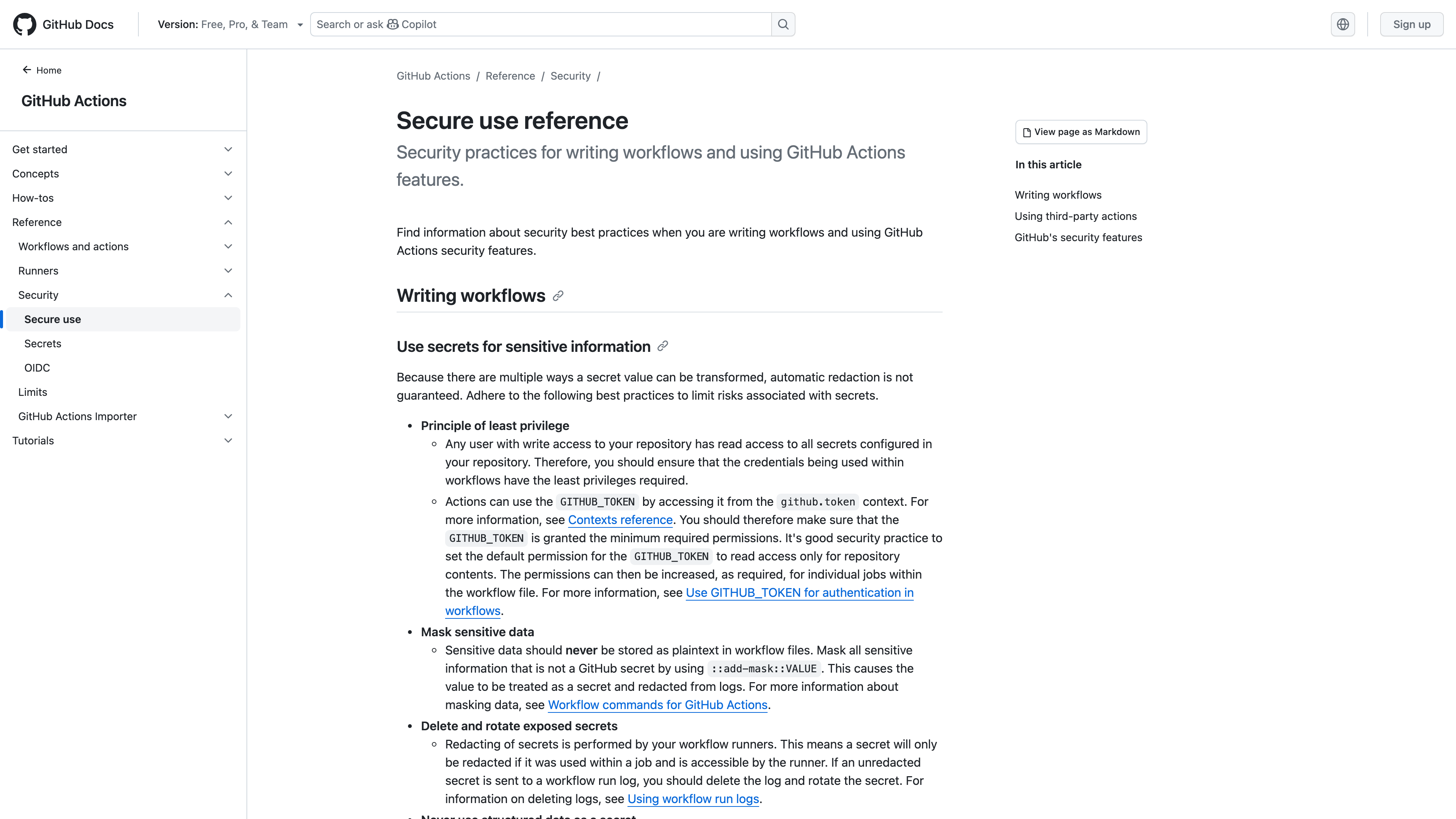

Think of assistant output like unreviewed dependency updates: useful but dangerous when merged blindly. The secure-use guidance in GitHub Actions security docs and static-analysis workflows from Semgrep are practical guardrails for AI-assisted commits.

A common gotcha is prompt leakage in logs and issue threads. Teams should keep secrets in managed vaults, avoid pasting production keys into chat, and run generated patches through automated scanning before merge.
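One way to reduce prompt leakage is to scrub obvious secrets before text is sent to a chat tool or written to logs. The sketch below is illustrative only: the function name `redact` and the pattern list are assumptions, the patterns cover just a few well-known token shapes, and a dedicated secret scanner remains the safer choice for real pipelines.

```python
import re

# Token shapes with a fixed, recognizable format.
TOKEN_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),     # AWS access key ID format
    re.compile(r"ghp_[A-Za-z0-9]{36}"),  # GitHub classic personal access token format
]

# Generic "name = value" assignments for a few sensitive-looking names.
ASSIGNMENT_PATTERN = re.compile(
    r"(?i)\b(api[_-]?key|secret|token|password)(\s*[:=]\s*)\S+"
)

def redact(text):
    """Replace recognizable secrets with a placeholder before sharing text."""
    for pattern in TOKEN_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    # Keep the variable name and separator, drop only the value.
    return ASSIGNMENT_PATTERN.sub(r"\1\2[REDACTED]", text)

safe_prompt = redact("Why does login fail? password = hunter2")
```

Here `safe_prompt` keeps the question and the variable name but not the value. Pattern-based redaction misses anything it was not written for, so it complements managed vaults and automated scanning instead of replacing them.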

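```yaml
name: AI-assisted PR guardrails
on: [pull_request]
jobs:
  verify:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: npm ci && npm test
      - uses: actions/setup-python@v5
        with:
          python-version: "3.12"
      - run: pip install semgrep
      # semgrep ci pulls its rules from a Semgrep account, so it needs a token
      # (assumed here to be stored as a repository secret)
      - run: semgrep ci
        env:
          SEMGREP_APP_TOKEN: ${{ secrets.SEMGREP_APP_TOKEN }}
```

Pricing Comparison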

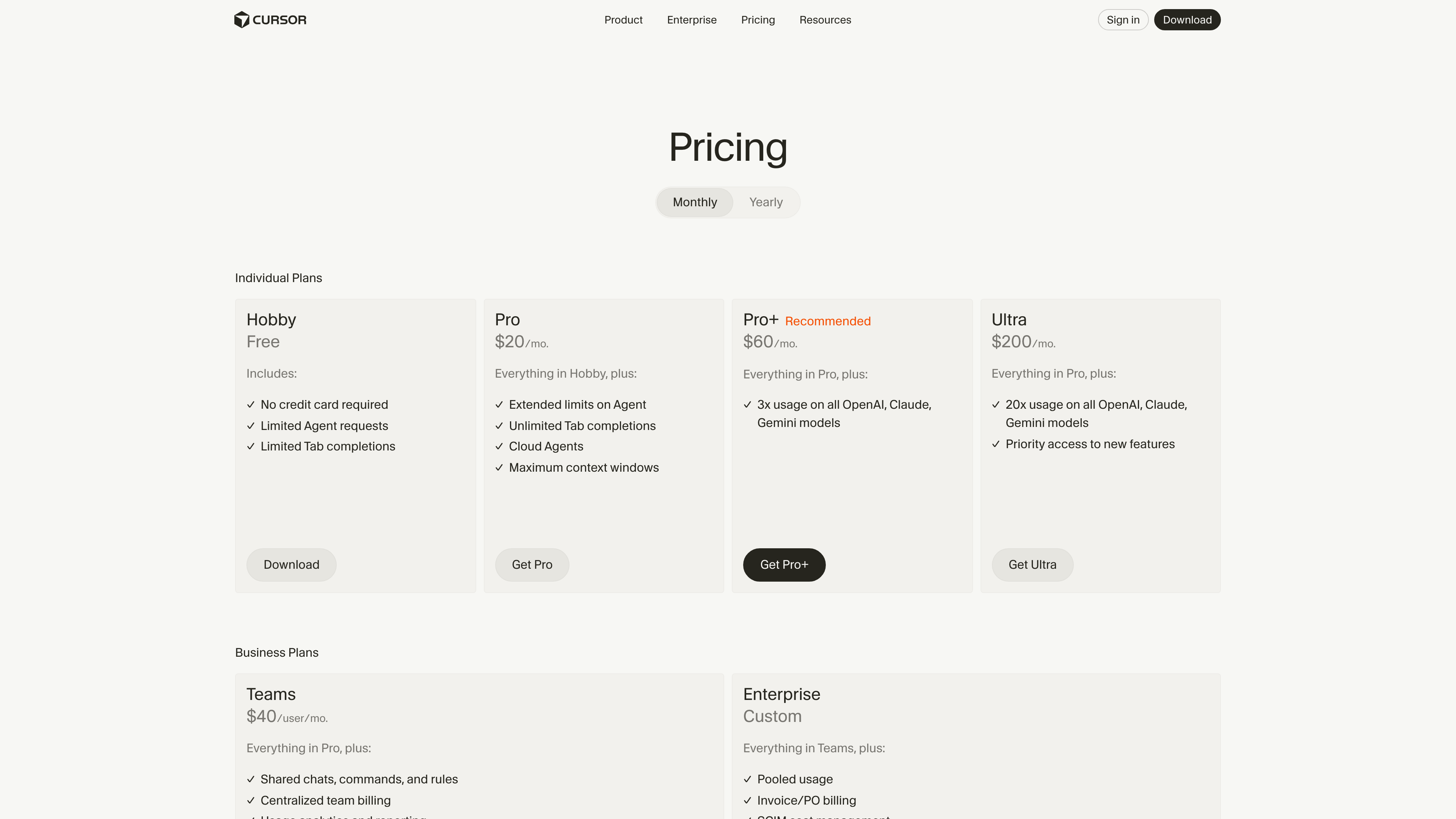

Quick Answer: As of February 19, 2026, official pricing pages position Cursor Pro around $20/month, Copilot Individual around $10/month, and Windsurf Pro around $15/month, with enterprise pricing depending on controls and volume.

Think of pricing like cloud compute: list price is only the entry point, while the total bill is driven by usage, governance, and rework cost. We verified current pricing references on Cursor, GitHub Copilot plans, and Windsurf on February 19, 2026.

| Tool | Published Entry Price | Practical Cost Driver |
|---|---|---|
| Cursor | Hobby free, Pro around $20/mo | Agent request volume and team seat count |
| GitHub Copilot | Free tier, Individual around $10/mo, Team/Enterprise higher | Policy controls, enterprise governance, usage depth |
| Windsurf | Free tier, paid plans around $15-$30/mo | Prompt credits and premium model usage |
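
To see why list price is only the entry point, it can help to model the total bill. The sketch below is a simplification with hypothetical inputs: the `monthly_cost` function, the overage unit price, the rework hours, and the hourly rate are all assumptions for illustration, not published vendor figures.

```python
def monthly_cost(seats, seat_price, overage_units=0, overage_unit_price=0.0,
                 rework_hours=0.0, hourly_rate=0.0):
    """Total monthly cost: licences + usage overage + engineer time spent on rework."""
    licences = seats * seat_price
    overage = overage_units * overage_unit_price
    rework = rework_hours * hourly_rate
    return licences + overage + rework

# Hypothetical 10-person team, engineer time valued at $80/hour.
tool_a = monthly_cost(seats=10, seat_price=20, overage_units=500,
                      overage_unit_price=0.04, rework_hours=6, hourly_rate=80)
tool_b = monthly_cost(seats=10, seat_price=10, rework_hours=10, hourly_rate=80)
```

With these made-up inputs, `tool_a` comes to about $700 and `tool_b` to $900, so the cheaper seat price ends up costing more once rework is counted. The point is the shape of the calculation, not the specific numbers; substitute figures from your own pilot.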

Alternatives and Open-Source Options

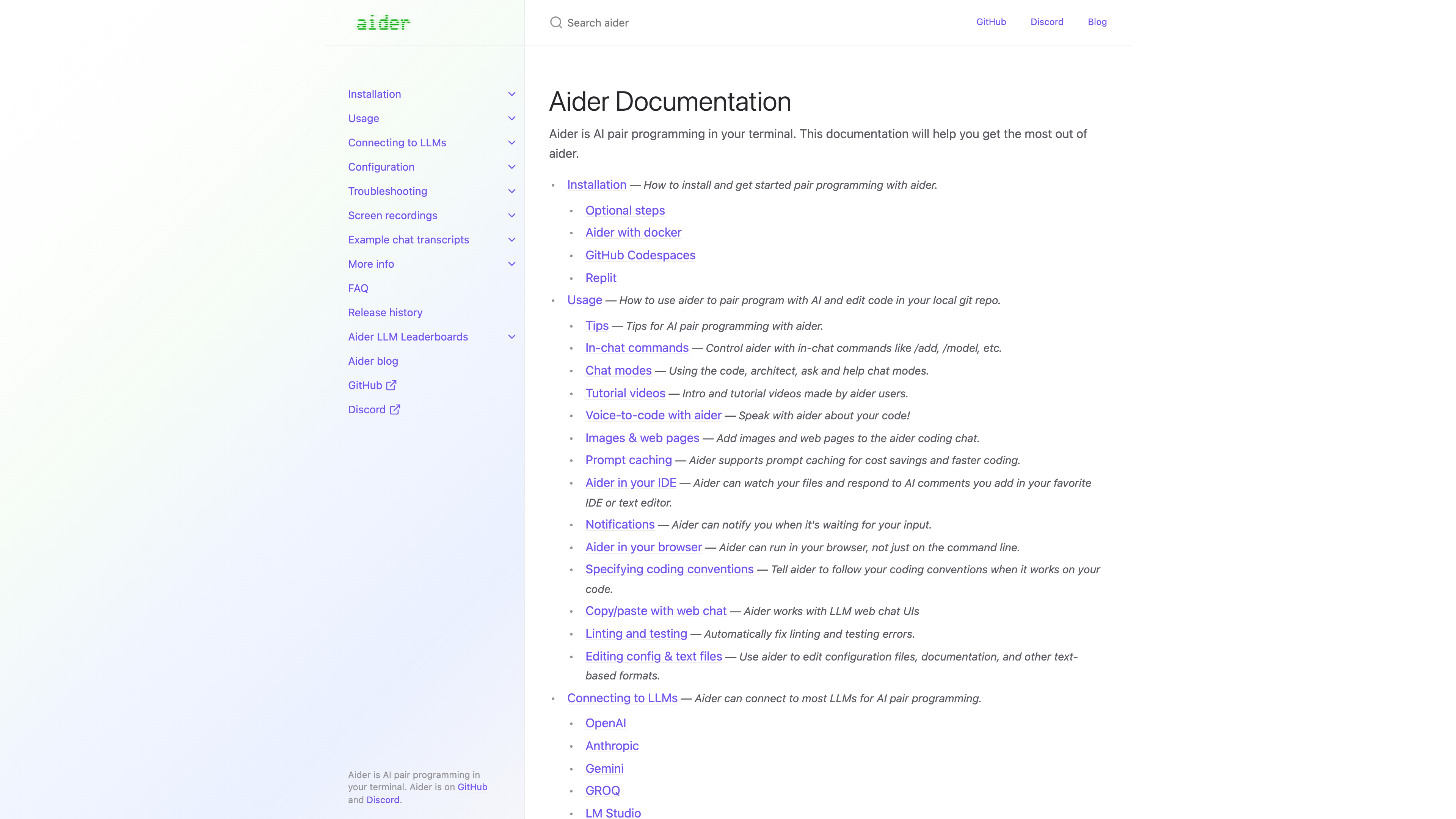

Quick Answer: Open alternatives such as Aider and Sourcegraph Cody can lower costs and increase control, especially when teams already run their own model endpoints.

Think of open-source options like self-hosted observability: more setup work up front, but greater flexibility and governance. Developer-facing examples include Aider and Sourcegraph Cody.

For many teams, the best strategy is hybrid: start with managed assistants for speed, then move sensitive workflows to self-hosted or policy-constrained paths where needed. If you are troubleshooting generation quality, jump to AI for Debugging: Step-by-Step Workflow and Best AI Prompts for Developers.
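
The hybrid strategy described above can be sketched as a simple routing rule that decides which backend may see a given file. The glob list, the backend labels (`self_hosted`, `managed`), and the `route` function are hypothetical; a real policy would live in shared configuration and be enforced by the tooling itself.

```python
from fnmatch import fnmatchcase

# Hypothetical paths a team might consider too sensitive for a managed assistant.
SENSITIVE_GLOBS = ["secrets/*", "infra/prod/*", "billing/*", "*.pem"]

def route(path):
    """Return 'self_hosted' for sensitive paths and 'managed' otherwise."""
    if any(fnmatchcase(path, pattern) for pattern in SENSITIVE_GLOBS):
        return "self_hosted"
    return "managed"

decisions = {path: route(path) for path in [
    "src/app.py",
    "infra/prod/db/main.tf",
    "certs/server.pem",
]}
```

Note that `*` in `fnmatchcase` also matches path separators, so `infra/prod/*` covers nested directories. Keeping the rule this small makes it easy to review alongside other access policies.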

Verdict: Which Tool Should You Choose?

Quick Answer: Choose the tool that matches your delivery constraints, not internet hype: Cursor for fast IDE-native flows, Copilot for GitHub-centric governance, and Windsurf when credit economics matter.

Our final take is practical: most teams should pilot two tools, not one, for two weeks and compare cycle time plus review effort. The winner is the option that shortens delivery without increasing escaped defects.
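
The selection rule in the paragraph above can be written down explicitly so the pilot has an agreed exit criterion. This is a sketch with hypothetical field names and sample numbers; the rule encoded is "faster than baseline, with no increase in escaped defects", using review effort only as a tie-breaker.

```python
from dataclasses import dataclass

@dataclass
class PilotResult:
    tool: str
    median_cycle_hours: float
    review_hours_per_pr: float
    escaped_defects: int

def pick_winner(baseline, candidates):
    """Return the fastest candidate that does not increase escaped defects, or None."""
    eligible = [
        c for c in candidates
        if c.median_cycle_hours < baseline.median_cycle_hours
        and c.escaped_defects <= baseline.escaped_defects
    ]
    if not eligible:
        return None
    # Prefer the shortest cycle time; break ties on lower review effort.
    return min(eligible, key=lambda c: (c.median_cycle_hours, c.review_hours_per_pr))

# Hypothetical two-week pilot numbers.
baseline = PilotResult("no assistant", 30.0, 1.5, 2)
tool_a = PilotResult("tool A", 20.0, 1.8, 4)  # faster, but more escaped defects
tool_b = PilotResult("tool B", 24.0, 1.4, 2)  # faster, defects unchanged
winner = pick_winner(baseline, [tool_a, tool_b])
```

With these sample numbers the winner is tool B: tool A is faster but is excluded because its escaped defects rose. Returning `None` when nothing qualifies keeps "adopt neither yet" as a valid outcome of the pilot.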

If you want guided next steps, read Cursor AI Complete Guide, GitHub Copilot Deep Review, and How AI Coding Tools Actually Work to map tooling to maturity stage.


FAQ

Quick Answer: These are the practical questions developers ask before rolling an AI coding tool into real projects, teams, and delivery pipelines.

Which AI coding tool is best for a small team in 2026?

Small teams usually optimize for speed-to-value, so they often start with Cursor or Copilot Individual and validate against real sprint metrics before scaling seats.

Are AI coding tools accurate enough to skip code review?

No. They improve drafting speed, but human review is still required for edge cases, architecture fit, and security constraints.

Do AI coding tools replace junior developers?

They change junior work more than they remove it. Teams still need people who can reason about systems, debugging, and product constraints.

How should teams measure AI coding ROI?

Track lead time, pull request review time, defect escape rate, and developer satisfaction before and after rollout.

Should I pick one tool for the whole company?

Not initially. Pilot two options on the same workflows, then standardize after evidence shows clear quality and governance fit.

What is the biggest rollout mistake?

Treating AI as a magic replacement for engineering process instead of integrating it into testing, review, and security gates.
