Cross-platform AI products are no longer optional for development teams shipping customer-facing software. Users expect intelligent behaviors on web, mobile, and backend workflows at the same time.

This guide shows how to design one coherent toolchain across those surfaces, with practical patterns for architecture, testing, and scale.

The fastest way to lose momentum is to build each platform in isolation and then discover contract drift during integration. This guide helps you avoid that trap by defining shared contracts first, then layering channel-specific adaptations where they actually matter.

Read this as an implementation sequence, not a theory piece: architecture baseline, backend pattern, frontend interaction, mobile constraints, deployment discipline, and test strategy. By the end, you should have a concrete rollout backlog for the next two sprints.

Teams that run this sequence usually discover one surprising truth: most integration pain comes from missing product assumptions rather than missing model features. Use the sections below to expose those assumptions early, while changes are still cheap.

If your roadmap is crowded, prioritize one end-to-end user flow first and apply the cross-platform pattern there. A narrow but complete implementation teaches more than five disconnected prototypes.

What Cross-Platform AI Development Means

Quick Answer: Cross-platform AI development means designing one intelligence layer that serves web, mobile, and backend workloads through shared contracts and platform-specific adapters.

Cross-platform AI projects fail when teams treat each client as a separate intelligence product. The better mental model is a transit network: core routes are shared, but last-mile paths differ by platform. In engineering terms, that means shared prompt contracts, shared retrieval policies, and platform-specific rendering and caching.

For developers, the first architectural decision is where orchestration lives. API-first designs centralize policy and observability, while edge-heavy designs reduce latency for user interactions. Neither is universally better. The right answer depends on data locality, compliance boundaries, and how often model behavior changes.

Before implementation, write a one-page architecture decision record that lists platform responsibilities explicitly: what is shared, what is client-specific, and where policy checks run. This document saves weeks of rework because integration teams stop making conflicting assumptions.

| Architecture Option | Best Use Case | Primary Tradeoff |

|---|---|---|

| Central API orchestration | Strong governance and shared business logic | Higher latency for distant users |

| Edge-mediated inference | Real-time assistant UX | More distributed debugging complexity |

| Hybrid API + edge | Global apps with mixed workloads | Higher operational overhead |

To connect this workflow with your existing stack, link the rollout to the pillar guide, AI debugging workflows, AI code review controls, model behavior mechanics, and prompt templates for developers. That internal map keeps teams from treating each article as an isolated tactic.

Backend Patterns: Model APIs, RAG, and Vector Search

Quick Answer: Backend AI layers should separate generation, retrieval, and policy checks so teams can evolve each component without breaking product behavior.

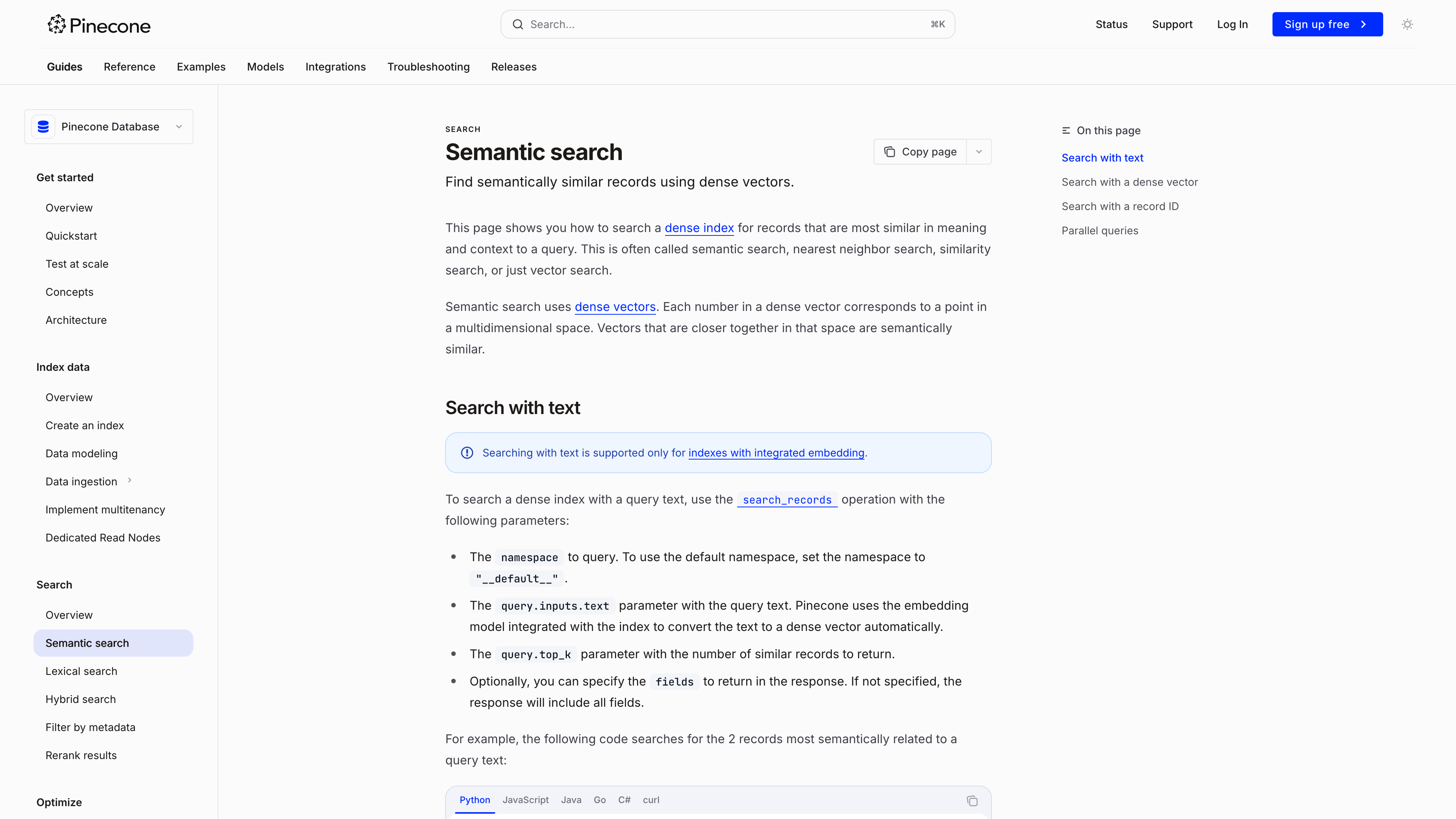

Backend design for AI apps is closer to search engineering than classic request-response APIs. Imagine a legal research assistant: answers are only useful if retrieval finds the right documents first. The same principle applies to product AI. Retrieval quality sets the ceiling on generation quality.

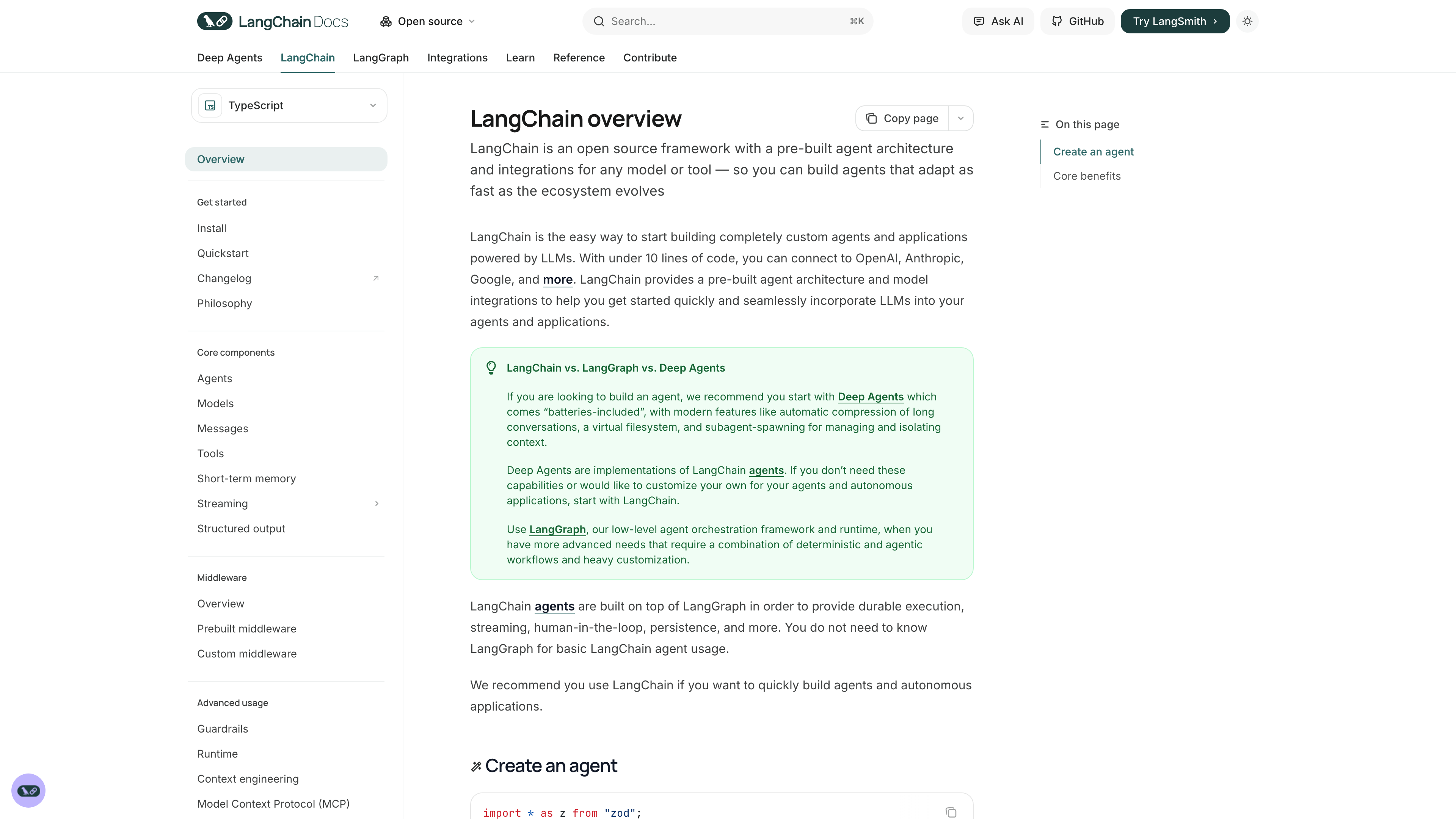

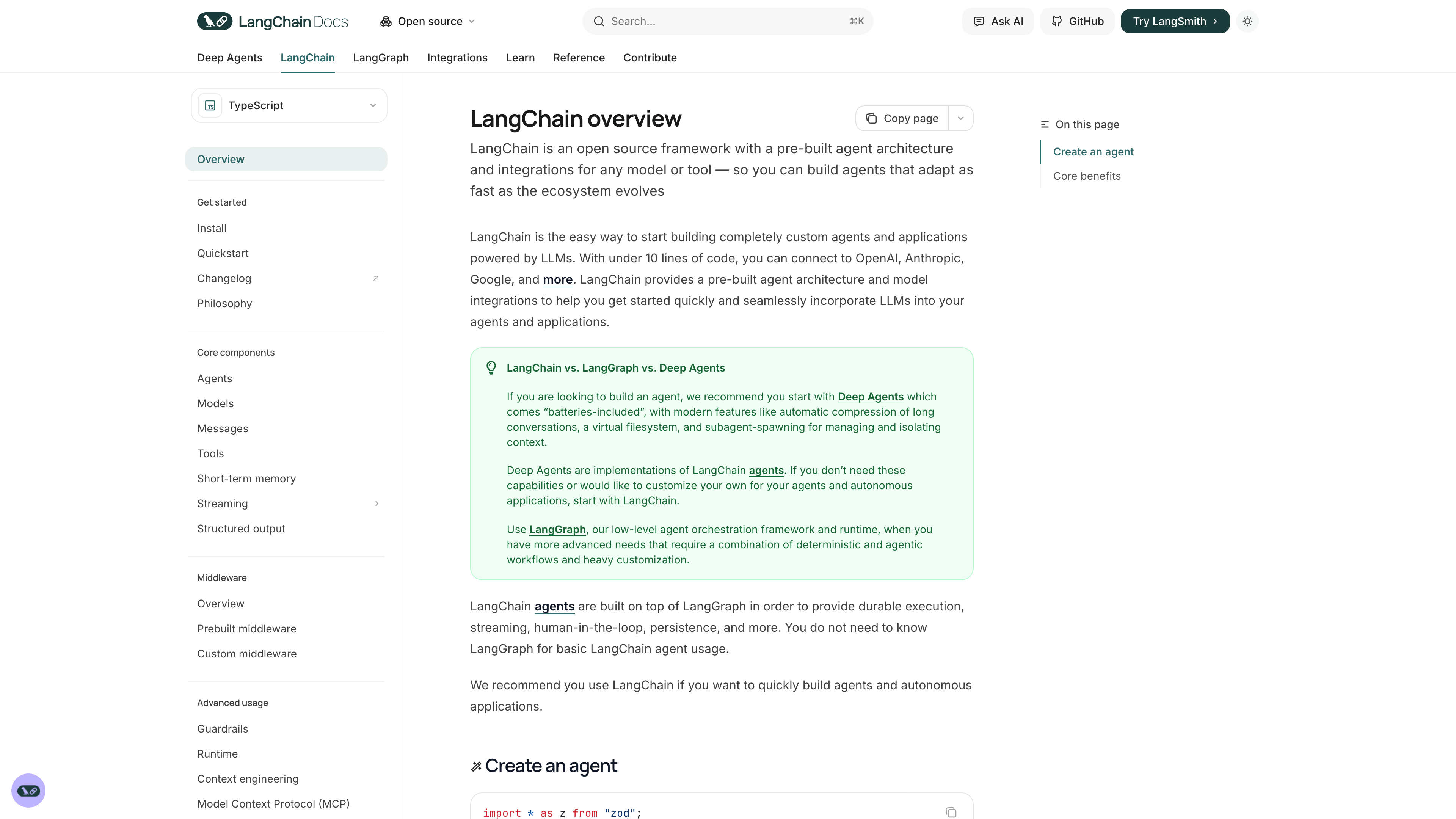

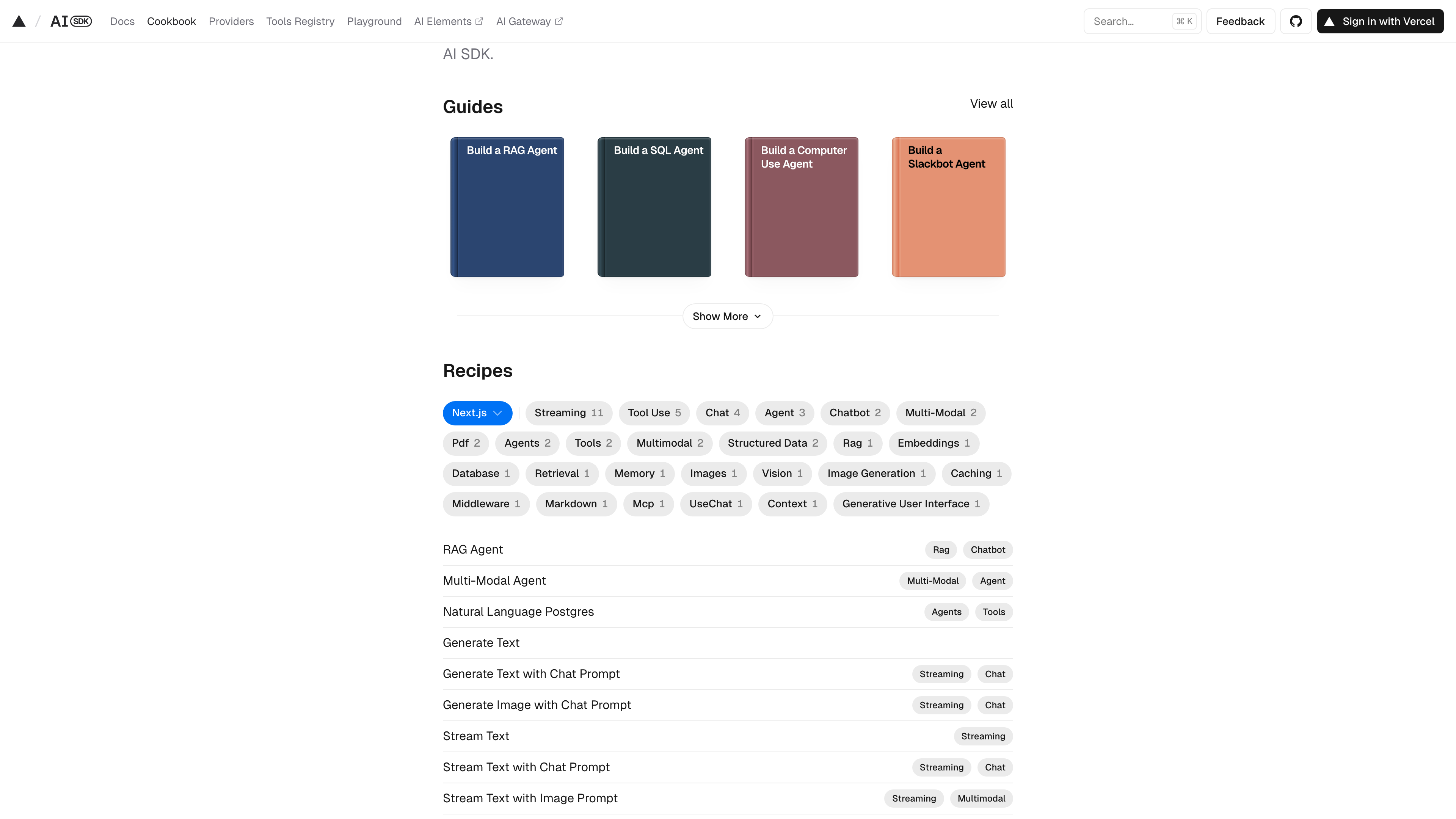

RAG (retrieval-augmented generation) pipelines should expose three explicit stages: query rewriting, retrieval, and grounded response generation. Add policy checks between retrieval and generation so sensitive fields are filtered before model calls. That separation gives you better debugging and safer incident response when outputs drift.

What should you do this week? Instrument one endpoint with retrieval hit rate, context token count, and grounded answer rate. Those three metrics reveal whether poor outputs are model issues or retrieval issues. Without them, teams guess.

A second lever is contract testing for tool calls. If your model can trigger actions, run contract tests that verify argument schemas and authorization boundaries on every backend release. This prevents subtle integration breakages that only appear under live traffic.

| Technical Requirement | Potential Risk | Learner's First Step |

|---|---|---|

| Versioned prompt contracts | Client updates break backend behavior silently | Store prompts in source control with semantic versions |

| Retrieval observability | Low-quality context goes unnoticed | Log top-k documents and relevance scores |

| Policy filter layer | Sensitive data leaks into model prompts | Enforce pre-generation content filtering |

Frontend Integration: Live Assistants and Prompt UX

Quick Answer: Frontend AI quality depends on prompt UX discipline: user intent capture, context previews, and clear confidence signals.

A live assistant can feel magical or frustrating based on interface design, not model quality alone. It is like form validation in checkout flows: if feedback is late or unclear, users lose trust quickly. AI interfaces need similarly explicit affordances around what the assistant knows and what it is guessing.

For web clients, display context chips, model action states, and fallback pathways when generation fails. This keeps users informed and reduces repeated prompt spam. Developers should also capture prompt-to-outcome telemetry in the UI layer, so product teams can see where users abandon or retry.

- Show the user what data sources were used for each answer.

- Provide one-click refine prompts instead of forcing full rewrites.

- Surface confidence and uncertainty language consistently.

- Log retries and dead-end prompts for weekly UX review.

Hidden hack: adding a short 'assumptions' line before each model answer dramatically improves user correction behavior. Users can fix assumptions quickly instead of discarding the whole response.

Mobile Integration: SDK Choices and Offline vs Online Loads

Quick Answer: Mobile AI integration succeeds when teams choose clear online-offline boundaries and optimize model usage for battery, latency, and privacy constraints.

Mobile AI design is like packing for a long trip with strict luggage limits. You can bring everything, but performance and battery will suffer. Teams need a disciplined split: lightweight on-device features for responsiveness and privacy, heavier cloud calls for complex reasoning.

React Native, iOS Core ML, and Android AI stacks each support this split differently. The operational trick is to define explicit fallback behavior when network quality drops. If the app silently degrades, users perceive randomness. If it announces mode changes and offers scoped offline capabilities, trust stays intact.

| Platform Path | Strength | Constraint |

|---|---|---|

| On-device inference | Low latency and strong privacy | Model size and battery limits |

| Cloud inference | Higher reasoning depth | Network dependency and variable latency |

| Hybrid strategy | Balanced experience | More complex synchronization logic |

Worked example: one field-service app moved intent classification on-device while leaving complex report generation in cloud endpoints. Median response time for quick actions dropped from 1.9 seconds to 0.6 seconds, while monthly model API cost fell by 23 percent because only high-complexity requests hit cloud inference.

Deployment, Scaling, and Observability Across Platforms

Quick Answer: Cross-platform AI systems need platform-specific performance budgets but one shared observability model for reliability and cost control.

Scaling AI across clients resembles running a distributed retail chain: each location has local constraints, but headquarters still needs one truthful dashboard. For engineering teams, that means unified tracing, cost telemetry, and quality signals across web, mobile, and backend services.

Set budgets by channel. For example, mobile interactions might target sub-800 millisecond assistant response for lightweight tasks, while backend automation jobs can accept longer latency for higher reasoning depth. Without channel budgets, teams over-optimize one surface and under-serve another.

- Define latency and cost budgets per platform.

- Track model usage, prompt size, and failure classes by client type.

- Set circuit breakers for cost spikes and cascading failures.

- Use trace identifiers to connect client events to backend model calls.

What should you do this week? Add one shared telemetry schema for AI requests across all clients. That single move unlocks better debugging, forecasting, and incident response.

Testing AI Apps and Managing Performance Tradeoffs

Quick Answer: AI app testing should combine deterministic checks, semantic evaluation, and human review loops to balance speed with reliability.

Traditional unit tests catch deterministic logic, but AI behavior introduces probabilistic variation. The best comparison is autocomplete ranking in search products: quality cannot be reduced to pass/fail assertions alone. You need scenario suites, semantic checks, and periodic human evaluation to detect subtle regressions.

Build a three-layer test pipeline. Layer one runs deterministic schema and contract tests. Layer two runs semantic evaluation on curated prompts with expected intent outcomes. Layer three is human review for high-impact scenarios such as billing, compliance messaging, or medical-adjacent advice. This structure keeps release velocity while reducing unexpected behavior in production.

Do not skip adversarial testing. OWASP guidance for large language model applications highlights prompt injection and data leakage risks that functional tests miss. The practical fix is to add red-team prompts to every release candidate and block deployment when policy breaches are detected.

In practice, teams also need release canaries for AI changes. Ship model or prompt updates to a small traffic slice, compare quality and latency deltas, and promote only when drift is acceptable. This canary habit catches regressions earlier than weekly incident review.

Recommended Next Reads

Use these related guides to turn the workflow from this article into a team-level operating model.

aicourses.com Verdict

Quick Answer: These workflows produce the best outcomes when teams treat AI as a reliability and delivery multiplier, not a replacement for engineering judgment.

Cross-platform AI success comes from disciplined boundaries: shared intelligence contracts with platform-specific delivery choices. Teams that design those boundaries early avoid expensive rewrites later.

Start with one shared backend contract, then optimize web and mobile experiences through targeted adapters and observability-driven iteration.

After implementation, standardize team execution with AI Developer Productivity Playbooks so architecture quality scales with team growth. Want to learn more about AI? Download our aicourses.com app through this link and claim your free trial!

FAQ

Quick Answer: These are the questions teams typically ask when they move from experiments to production adoption and governance.

Should I start with API-first architecture?

In most cases yes, because it centralizes policy and observability while clients iterate independently.

When should I push inference to edge or device?

When latency, privacy, or offline behavior are top priorities and model complexity is manageable.

How many model providers should one product use?

Start with one primary provider and add multi-provider routing only when reliability or cost signals justify it.

What is the hardest part of cross-platform AI?

Keeping behavior consistent while each platform has different latency and UX constraints.

How should I test AI features before launch?

Use deterministic contract tests, semantic scenario tests, and human review for high-impact flows.

Do mobile and web need separate prompt systems?

Not usually. Use shared prompt contracts with platform-specific context adapters.

Sources

The guidance above is grounded in primary documentation and engineering references:

SEO Metadata

Title: Cross-Platform AI Development Toolchains (API, Mobile, Web)

Meta Description: Learn cross-platform AI app architecture patterns, from API and RAG backends to mobile integration, testing, and scaling tradeoffs.