If you are deciding between Cursor, Copilot, and Codeium/Windsurf right now, this section is designed to shorten that decision.

We focus on the dimensions that change real engineering outcomes: feature fit, latency behavior, accuracy discipline, pricing economics, and team-vs-solo suitability.

Feature Table

Quick Answer: All three tools cover inline completion and chat, but they differ in workflow depth, governance controls, and cost mechanics.

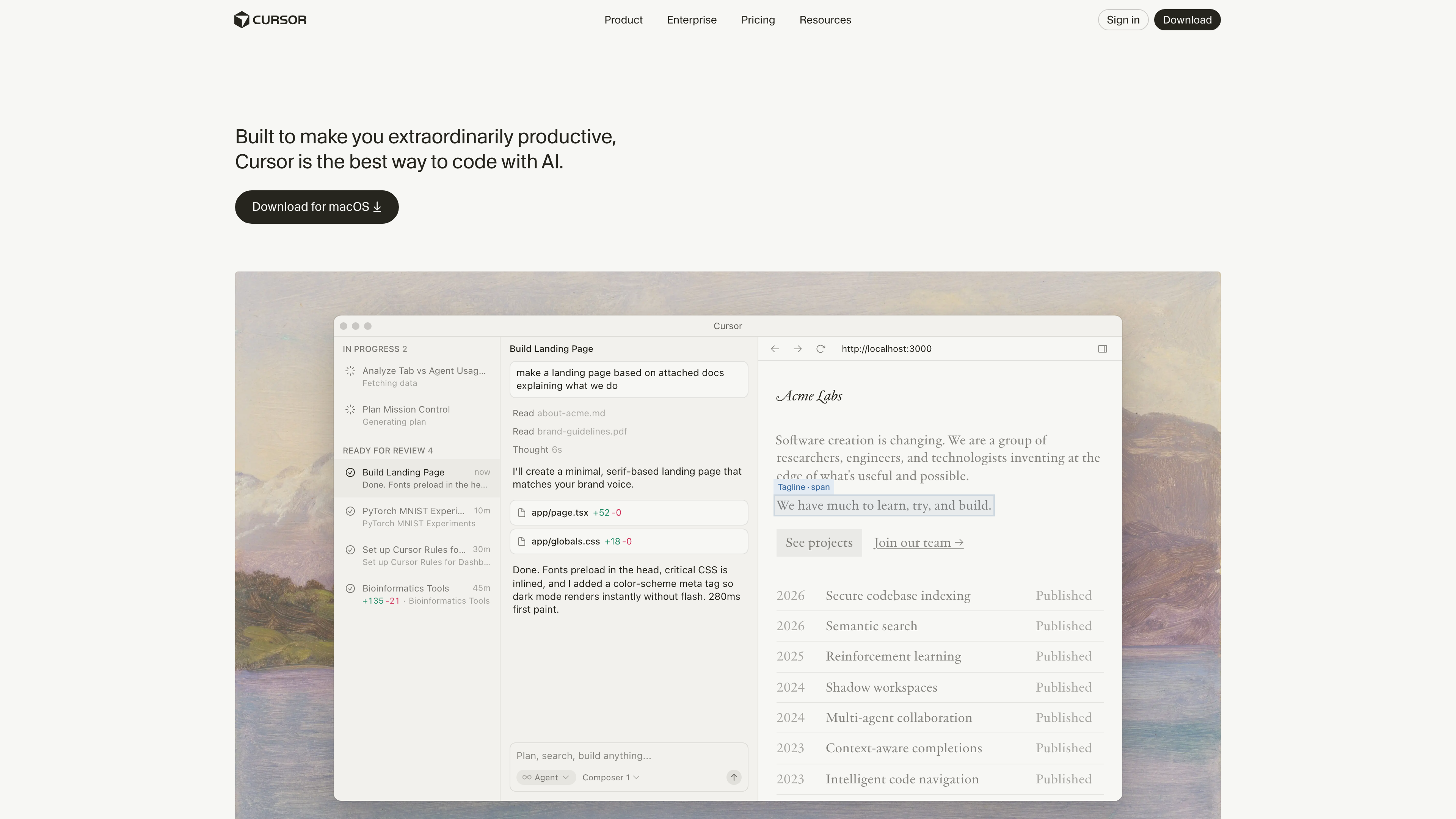

Think of this comparison like selecting a CI platform: surface similarity hides operational differences. Cursor emphasizes fast interactive editing, Copilot emphasizes ecosystem governance, and Windsurf emphasizes credit-efficient workflows.

| Tool | Notable Strength | Common Gap |

|---|---|---|

| Cursor | Agentic multi-file edits in one IDE loop | Needs strict review discipline on broad patches |

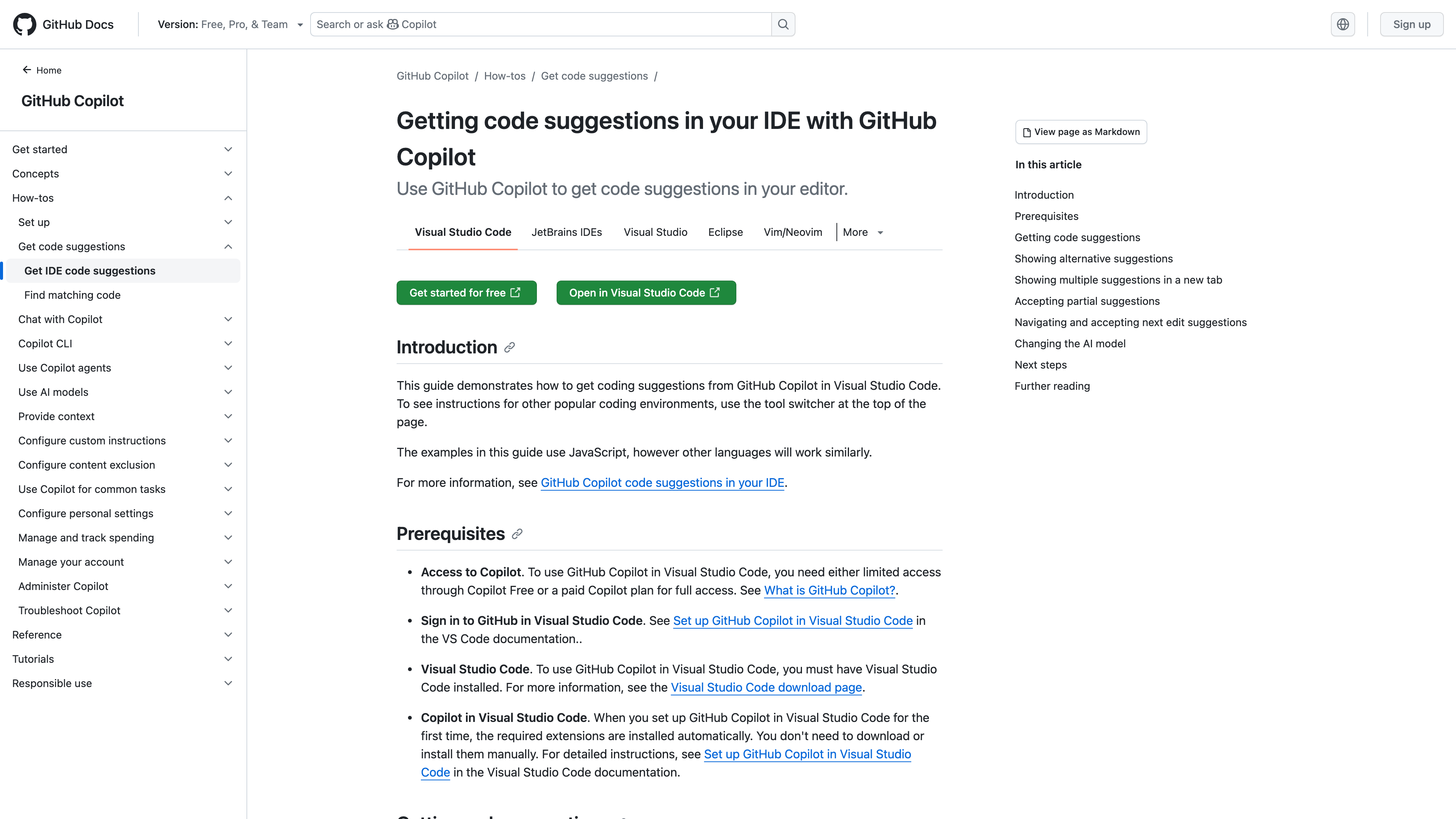

| GitHub Copilot | Strong GitHub integration and policy model | Some advanced flows depend on enterprise setup |

| Codeium/Windsurf | Competitive pricing and integrated editor experience | Maturity varies by specialized enterprise needs |

Latency Comparison

Quick Answer: Perceived latency depends on model routing, request size, and repository context depth, not only raw model speed.

Think of latency like API throughput under load: small requests feel instant, large context requests expose bottlenecks. Developers should test realistic prompts, not toy snippets, when timing tools.

We recommend timing three scenarios in your environment: single-function completion, multi-file refactor instruction, and debug prompt with stack trace context.
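The three scenarios above can be timed with a small harness. This is a minimal sketch, not a vendor integration: `send_prompt` is a hypothetical callable you would wire to whichever tool or API you are testing, the prompts in `SCENARIOS` are placeholders, and `fake_send_prompt` exists only so the script runs on its own.

```python
import statistics
import time
from typing import Callable, Dict, List

# Placeholder prompts (assumptions); replace with real prompts from your repository.
SCENARIOS: Dict[str, str] = {
    "single_function_completion": "Complete the body of parse_config().",
    "multi_file_refactor": "Rename UserService to AccountService across the project.",
    "debug_with_stack_trace": "Explain this failure:\nTraceback (most recent call last): ...",
}


def time_scenarios(
    send_prompt: Callable[[str], str],
    scenarios: Dict[str, str],
    runs: int = 5,
) -> Dict[str, Dict[str, float]]:
    """Time each scenario `runs` times; report median and worst wall-clock seconds."""
    results: Dict[str, Dict[str, float]] = {}
    for name, prompt in scenarios.items():
        samples: List[float] = []
        for _ in range(runs):
            start = time.perf_counter()
            send_prompt(prompt)  # assumed to block until the full response arrives
            samples.append(time.perf_counter() - start)
        results[name] = {
            "median_s": statistics.median(samples),
            "max_s": max(samples),
        }
    return results


def fake_send_prompt(prompt: str) -> str:
    """Stand-in for a real tool call (hypothetical); sleeps in proportion to prompt length."""
    time.sleep(len(prompt) / 100_000)
    return "ok"


if __name__ == "__main__":
    for name, stats in time_scenarios(fake_send_prompt, SCENARIOS).items():
        print(f"{name}: median={stats['median_s']:.4f}s max={stats['max_s']:.4f}s")
```

Reporting the worst case next to the median matters here because large-context requests tend to show their bottlenecks in the tail, not in the typical run.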

Accuracy Example

Quick Answer: Accuracy improves when prompts specify constraints, expected behavior, and tests; it drops when requests are vague or architecture context is missing.

Think of model accuracy like compiler output quality: ambiguous input means ambiguous output. In our workflow, the prompts that perform best state objective constraints and test expectations explicitly.

Prompt template:

Role: Senior backend engineer.

Task: Refactor this function for async I/O.

Constraints: Keep API contract, preserve existing exceptions, add tests for timeout and retry.

Output: Unified diff only.

Pricing Breakdown

Quick Answer: As of February 19, 2026, public entry tiers are broadly Copilot around $10, Windsurf around $15, and Cursor around $20 per month, with higher tiers depending on features and limits.

Think of pricing as total engineering spend per shipped change. The list plan matters less than how much rework and review the tool creates in your process.
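One way to make "spend per shipped change" concrete is a back-of-the-envelope calculation. The sketch below assumes a simple model (seat price plus review and rework labor, divided by changes shipped); the formula, the parameter names, and every input number in the example are illustrative assumptions, not measurements of any tool.

```python
def cost_per_shipped_change(
    seat_price_per_month: float,
    review_hours: float,
    rework_hours: float,
    hourly_rate: float,
    changes_shipped: int,
) -> float:
    """Monthly seat price plus review/rework labor, divided by changes shipped that month."""
    if changes_shipped <= 0:
        raise ValueError("changes_shipped must be positive")
    labor = (review_hours + rework_hours) * hourly_rate
    return (seat_price_per_month + labor) / changes_shipped


if __name__ == "__main__":
    # Hypothetical inputs: a pricier plan that creates less rework can still cost less per change.
    plan_a = cost_per_shipped_change(20.0, 6.0, 4.0, 80.0, 40)
    plan_b = cost_per_shipped_change(10.0, 8.0, 7.0, 80.0, 40)
    print(f"plan_a: ${plan_a:.2f} per change")  # plan_a: $20.50 per change
    print(f"plan_b: ${plan_b:.2f} per change")  # plan_b: $30.25 per change
```

With these made-up inputs the labor term dwarfs the seat price, which is the point of the paragraph above: measure your own review and rework hours before comparing list prices.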

See official references on Copilot plans, Windsurf pricing, and Cursor pricing.

Best for Solo vs Team

Quick Answer: Solo developers usually optimize for flow speed and cost, while teams optimize for governance, consistency, and measurable delivery outcomes.

Think of solo vs team tool fit like local scripts vs platform engineering. Solos can move faster with lightweight defaults; teams need admin controls, auditability, and shared prompt conventions.

Read the full tool reviews at Cursor AI Complete Guide and GitHub Copilot Deep Review, then use AI for Debugging to harden daily execution.


FAQ

Quick Answer: These are the practical questions developers ask before rolling an AI coding tool into real projects, teams, and delivery pipelines.

Which tool is fastest to start with?

Most developers start fastest with Cursor or Copilot because setup friction is low in common IDE workflows.

Which tool is cheapest?

Entry-tier prices vary and can change, so always check official pricing pages before purchase.

Can one tool serve every team?

Usually no. Mixed environments often need role-based tool selection.

What should I benchmark first?

Measure cycle time, review effort, and escaped defects on identical tasks.
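A minimal sketch of how those three measurements could be recorded and compared per tool. The `TaskResult` fields, the units, and the helper names are assumptions chosen for illustration; adapt them to whatever your tracker already captures.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Set


@dataclass
class TaskResult:
    tool: str
    task_id: str
    cycle_time_hours: float  # start of work to merge
    review_minutes: float    # reviewer time spent on the change
    escaped_defects: int     # defects found after merge


def same_task_set(results: List[TaskResult]) -> bool:
    """True when every tool was measured on exactly the same task IDs."""
    tasks_by_tool: Dict[str, Set[str]] = {}
    for r in results:
        tasks_by_tool.setdefault(r.tool, set()).add(r.task_id)
    task_sets = list(tasks_by_tool.values())
    return all(s == task_sets[0] for s in task_sets)


def summarize(results: List[TaskResult]) -> Dict[str, Dict[str, float]]:
    """Per-tool averages for cycle time and review effort, plus total escaped defects."""
    by_tool: Dict[str, List[TaskResult]] = {}
    for r in results:
        by_tool.setdefault(r.tool, []).append(r)
    return {
        tool: {
            "mean_cycle_time_hours": mean([r.cycle_time_hours for r in rows]),
            "mean_review_minutes": mean([r.review_minutes for r in rows]),
            "escaped_defects": float(sum(r.escaped_defects for r in rows)),
        }
        for tool, rows in by_tool.items()
    }
```

Checking `same_task_set` before reading the summary guards against comparing tools on different workloads, which would make the averages meaningless.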

SEO Metadata

Title: Cursor vs Copilot vs Codeium (Direct Comparison)

Meta Description: Cursor vs Copilot vs Codeium compared across features, latency, accuracy, pricing, and best-fit scenarios for solo and team developers.