Whenever I speak to a team that has just adopted an LLM (large language model: a neural network trained to predict text from vast corpora), the fine-tuning vs RAG (retrieval-augmented generation) question comes up within the first ten minutes. Both approaches address the same root problem, a general-purpose model that does not know your data, but they solve it in fundamentally different ways, with different cost profiles, maintenance burdens, and risk surfaces.

Getting this decision wrong early is expensive. A team that builds a RAG pipeline for a use case that actually needed fine-tuning will spend months fighting inconsistent output formats. A team that fine-tunes for a use case that needed dynamic, updatable knowledge will spend months fighting stale knowledge baked into the weights and the retraining runs needed to refresh it. The decision is not about which approach is better in the abstract; it is about which fits your specific combination of data, latency requirements, and maintenance capacity. This article gives you the decision framework to get it right.

This is a supporting article in the Applied AI Skills cluster. The pillar article, How to Actually Use AI: The Practical Guide (2026), covers all five practical skills together if you want the full picture first.

What Is LLM Customisation?

Quick Answer: LLM customisation means adapting a general-purpose foundation model to work reliably for your specific use case — your data, your terminology, your output format, your users. There are three main levers: prompt engineering (no changes to the model), RAG (inject external knowledge at inference time), and fine-tuning (update the model's weights). Most production systems use a combination of all three.

Think of a foundation model like a brilliantly educated generalist who has read most of the internet up to a certain date, but who has never worked in your industry, never seen your internal documents, and defaults to writing in a formal academic style even when you need terse JSON. Customisation is the process of changing one or more of those defaults without rebuilding the generalist from scratch.

According to Anthropic's Build with Claude documentation, the recommended path is to exhaust lower-cost customisation options before escalating to more expensive ones. Start with prompt engineering: structure your prompt with role, context, task, and format constraints (covered in detail in Best AI Prompts for Developers). If the model has the capability but lacks your knowledge, add RAG. If the model lacks the capability itself (it keeps producing the wrong style, reasoning pattern, or domain grammar even with a well-structured prompt), add fine-tuning. Most teams that reach for fine-tuning first discover they had a prompt engineering problem.
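As a rough illustration of the role/context/task/format structure, the hypothetical helper below assembles those four parts into one prompt string. The `PromptSpec` and `build_prompt` names and the `## Section` labels are assumptions made for this sketch, not a format prescribed by Anthropic's documentation.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """The four parts of a structured prompt (hypothetical field names)."""
    role: str
    context: str
    task: str
    output_format: str


def build_prompt(spec: PromptSpec) -> str:
    """Join the four parts into labelled sections, skipping any that are empty."""
    sections = [
        ("Role", spec.role),
        ("Context", spec.context),
        ("Task", spec.task),
        ("Output format", spec.output_format),
    ]
    return "\n\n".join(
        f"## {label}\n{text.strip()}" for label, text in sections if text.strip()
    )


if __name__ == "__main__":
    spec = PromptSpec(
        role="You are a support engineer for an internal billing system.",
        context="Invoices are issued on the 1st; refunds take 5 business days.",
        task="Answer the customer's question using only the context above.",
        output_format='Reply as JSON: {"answer": str, "confidence": "low" | "high"}',
    )
    print(build_prompt(spec))
```

Keeping the format constraint in its own labelled section makes it easy to tighten later without touching the rest of the prompt.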

The three levers interact: a fine-tuned model produces better structured answers from retrieved RAG context than a general-purpose model would. Prompt engineering makes both RAG and fine-tuning more effective by setting clear constraints on what the model should do with the context or capability it has been given. Understanding which layer solves which class of problem is the core skill this article addresses.

What Is RAG and How Does It Work?

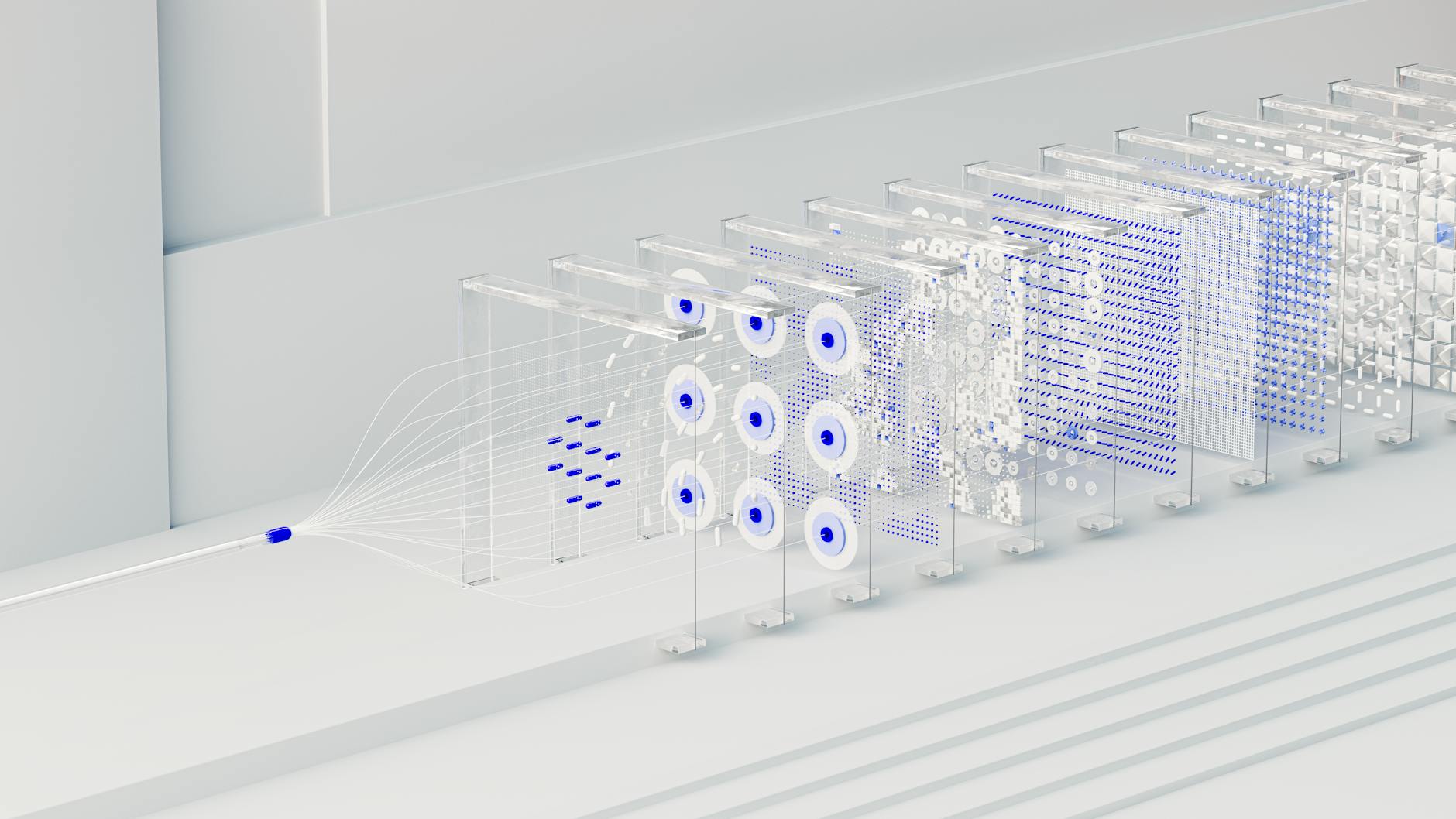

Quick Answer: RAG works in three stages: index your knowledge base as vector embeddings, retrieve the most relevant chunks when a query arrives, then inject those chunks into the model's prompt before generating an answer. The model's weights never change — it simply reads fresh context before responding.

Think of RAG like giving a consultant a briefing pack right before each meeting. The consultant (the model) does not change — the same knowledge, the same skills. But the briefing pack (the retrieved chunks) gives them the specific context they need to answer today's questions accurately. When the briefing pack is updated, the consultant's answers update automatically. No retraining required.

The technical pipeline has three stages. First, indexing: your documents are split into chunks, each chunk is converted to a vector embedding (a numerical representation of semantic meaning) by an embedding model, and the embeddings are stored in a vector database (a specialised database that enables similarity search over embeddings). Common choices include Pinecone, Chroma, Weaviate, and pgvector (an extension for PostgreSQL). Second, retrieval: when a query arrives, it is embedded into the same vector space, and the vector database returns the top k most semantically similar chunks. Third, generation: the retrieved chunks are placed in the model's prompt alongside the user query, and the model generates an answer grounded in that specific context.
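The three stages can be sketched end to end in plain Python. This is a minimal, dependency-free illustration under loud assumptions: the `embed` function is a toy bag-of-words stand-in for a real embedding model, `VectorIndex` is an in-memory list standing in for a vector database, and the final LLM call is left out; the sketch stops at the prompt you would send.

```python
import math
import re
from collections import Counter


def embed(text: str) -> dict[str, float]:
    """Toy stand-in for an embedding model: a unit-length bag-of-words vector.

    Real embedding models return dense vectors that capture meaning, not just
    shared words; this exists only so the sketch runs without dependencies.
    """
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    # Both vectors have unit length, so their dot product is the cosine similarity.
    return sum(weight * b.get(word, 0.0) for word, weight in a.items())


def chunk(text: str, size: int = 40) -> list[str]:
    """Split a document into fixed-size word windows (the simplest strategy)."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


class VectorIndex:
    """In-memory list of (chunk, vector) pairs; a vector database does this at scale."""

    def __init__(self) -> None:
        self.entries: list[tuple[str, dict[str, float]]] = []

    def add_document(self, text: str) -> None:
        # Stage 1, indexing: chunk the document, embed each chunk, store both.
        for piece in chunk(text):
            self.entries.append((piece, embed(piece)))

    def search(self, query: str, k: int = 3) -> list[str]:
        # Stage 2, retrieval: embed the query the same way and rank chunks by similarity.
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_rag_prompt(query: str, chunks: list[str]) -> str:
    # Stage 3, generation: put the retrieved chunks in the prompt next to the query.
    # The resulting string is what you would send to the LLM.
    context = "\n---\n".join(chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    index = VectorIndex()
    index.add_document("Refunds are processed within five business days of approval.")
    index.add_document("The office is closed on public holidays.")
    question = "How long do refunds take?"
    print(build_rag_prompt(question, index.search(question, k=1)))
```

Swapping `embed` for a real embedding model and `VectorIndex` for a vector database client changes the quality of retrieval, not the shape of the pipeline.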

The principal advantage of RAG is that your knowledge base can be updated continuously without touching the model. Add a new document to the index and the model can answer questions about it on the very next query. This makes RAG the clear choice for any use case where knowledge changes more often than you are willing to run a training job, which for most organisations means anything more frequent than once per quarter.
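In practice, "update the index" usually means re-embedding only the documents that changed. Below is a minimal sketch of that bookkeeping, assuming you can identify documents by a stable ID; `IncrementalIndex` is a hypothetical name, and `embed_fn` stands in for whatever embedding call your stack uses. Content hashes decide what needs re-embedding, so unchanged documents cost nothing.

```python
import hashlib
from typing import Callable


def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


class IncrementalIndex:
    """Tracks which documents need (re-)embedding between syncs."""

    def __init__(self, embed_fn: Callable[[str], object]) -> None:
        self.embed_fn = embed_fn
        self.hashes: dict[str, str] = {}      # doc_id -> hash of last indexed version
        self.vectors: dict[str, object] = {}  # doc_id -> embedding

    def sync(self, docs: dict[str, str]) -> dict[str, list[str]]:
        """Bring the index in line with `docs` (doc_id -> text); report what changed."""
        added: list[str] = []
        updated: list[str] = []
        removed: list[str] = []
        for doc_id, text in docs.items():
            h = content_hash(text)
            previous = self.hashes.get(doc_id)
            if previous == h:
                continue  # unchanged: no embedding call, no cost
            if previous is None:
                added.append(doc_id)
            else:
                updated.append(doc_id)
            self.vectors[doc_id] = self.embed_fn(text)
            self.hashes[doc_id] = h
        # Anything indexed earlier but absent now has been deleted at the source.
        for doc_id in list(self.hashes):
            if doc_id not in docs:
                removed.append(doc_id)
                del self.hashes[doc_id]
                del self.vectors[doc_id]
        return {"added": added, "updated": updated, "removed": removed}
```

A nightly job that calls `sync` with the current document set keeps the index fresh; a fine-tuned model would need a new training run to absorb the same change.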

What Is Fine-Tuning and How Does It Work?

Quick Answer: Fine-tuning trains the model on your labelled examples, updating its weights to permanently change how it responds — its style, format, domain vocabulary, and reasoning patterns. In 2026, LoRA (Low-Rank Adaptation) makes this accessible without full GPU infrastructure: a 7B model can be fine-tuned on 500 examples in a few hours on a single rented GPU.

Think of fine-tuning like a training programme that changes how an employee thinks and communicates, rather than just what they read before a meeting. After a medical fine-tuning run, a model does not just know more medical facts — it starts reasoning through problems the way a clinician does, structuring its answers in the format that clinical workflows expect, and flagging the right categories of uncertainty. That is behaviour change, not knowledge injection.

The breakthrough that made fine-tuning practical for most teams is LoRA (Low-Rank Adaptation). Rather than updating all of a model's billions of parameters, which requires substantial GPU memory and compute, LoRA freezes the original weights and trains a pair of small low-rank matrices alongside selected weight matrices (typically the attention projections), reducing trainable parameters by 90–99%. According to Databricks' LoRA fine-tuning guide, this lets teams fine-tune 7B parameter models on a single A100 GPU in 1–4 hours, at a rental cost of $4–16 per training run. QLoRA (Quantized LoRA) goes further by quantising the frozen base model to 4-bit precision during training, making fine-tuning feasible on consumer GPUs.
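The core of LoRA fits in one line of arithmetic: a frozen weight matrix W (d_out × d_in) is augmented with a trainable update B·A, where A is r × d_in, B is d_out × r, and the rank r is small. The layer computes h = W x + (α / r) · B (A x). The pure-Python sketch below shows that forward pass and the parameter count for a single matrix. It is illustrative only (no training loop), and the 4096 × 4096 size and ranks are assumed example values, not figures from the Databricks guide.

```python
import random


def matmul(a: list[list[float]], b: list[list[float]]) -> list[list[float]]:
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_forward(W, A, B, x, alpha: float, r: int):
    """Adapted layer output h = W x + (alpha / r) * B (A x).

    W: frozen d_out x d_in pretrained weight.
    A: r x d_in and B: d_out x r, the only matrices updated during training.
    x: d_in x 1 column vector.
    """
    base = matmul(W, x)
    delta = matmul(B, matmul(A, x))
    scale = alpha / r
    return [[b[0] + scale * d[0]] for b, d in zip(base, delta)]


def trainable_fraction(d_out: int, d_in: int, r: int) -> float:
    """LoRA's trainable parameters for one matrix, as a share of the full matrix."""
    return r * (d_in + d_out) / (d_in * d_out)


if __name__ == "__main__":
    d, r = 4, 2
    rng = random.Random(0)
    W = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(d)]
    A = [[rng.gauss(0, 0.01) for _ in range(d)] for _ in range(r)]  # random init
    B = [[0.0] * r for _ in range(d)]  # zero init, so B @ A starts at zero...
    x = [[1.0] for _ in range(d)]
    # ...which means the adapted layer initially behaves exactly like the frozen one.
    assert lora_forward(W, A, B, x, alpha=16, r=r) == matmul(W, x)
    for rank in (8, 16, 64):
        share = trainable_fraction(4096, 4096, rank)
        print(f"r={rank}: {share:.2%} of a 4096x4096 matrix is trainable")
```

The exact reduction depends on the rank you choose and on which weight matrices receive adapters; libraries such as Hugging Face PEFT expose both as configuration.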

How much data do you need? According to Particula's 2026 guide to fine-tuning data requirements, the sweet spot by task type is: 100–300 examples for classification and routing tasks, 200–500 for structured data extraction, 500–2,000 for content generation with a specific style, and 1,000–5,000 for deep domain specialisation. Quality matters far more than quantity: 200 carefully curated, expert-validated examples consistently outperform 2,000 casually assembled ones. One logistics company cited in RunPod's LoRA FAQ fine-tuned a LoRA adapter on 280 examples in an afternoon and achieved 94% accuracy on invoice classification.
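Because quality dominates, it pays to validate a dataset before spending GPU time on it. The sketch below assumes the chat-style JSONL layout accepted by OpenAI's fine-tuning API (one `{"messages": [...]}` object per line, ending with the assistant's target reply); many open-weight training scripts accept a similar layout. The checks (valid roles, non-empty content, no exact duplicates) and the `validate_jsonl` name are illustrative choices, and the count thresholds are the ranges quoted in the paragraph above.

```python
import json

# Example-count ranges by task type, as quoted from Particula's guide above.
RECOMMENDED = {
    "classification": (100, 300),
    "extraction": (200, 500),
    "style_generation": (500, 2000),
    "domain_specialisation": (1000, 5000),
}
VALID_ROLES = {"system", "user", "assistant"}


def validate_jsonl(lines: list[str], task: str) -> tuple[list[dict], list[str]]:
    """Check chat-format training examples; return (clean_examples, problems)."""
    clean: list[dict] = []
    problems: list[str] = []
    seen: set[str] = set()
    for n, line in enumerate(lines, start=1):
        if not line.strip():
            continue
        try:
            example = json.loads(line)
        except json.JSONDecodeError as exc:
            problems.append(f"line {n}: invalid JSON ({exc.msg})")
            continue
        messages = example.get("messages") if isinstance(example, dict) else None
        if not isinstance(messages, list) or not messages:
            problems.append(f"line {n}: missing 'messages' list")
            continue
        if any(
            not isinstance(m, dict) or m.get("role") not in VALID_ROLES or not m.get("content")
            for m in messages
        ):
            problems.append(f"line {n}: bad role or empty content")
            continue
        if messages[-1]["role"] != "assistant":
            problems.append(f"line {n}: last message must be the assistant's target reply")
            continue
        key = json.dumps(messages, sort_keys=True)
        if key in seen:
            problems.append(f"line {n}: duplicate example")
            continue
        seen.add(key)
        clean.append(example)
    low, high = RECOMMENDED[task]
    if len(clean) < low:
        problems.append(f"only {len(clean)} clean examples; {low}-{high} suggested for {task}")
    return clean, problems
```

Exact-duplicate detection is only a first pass; near-duplicates and inconsistent labels still need a human review, which is where most of the quality gain comes from.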

Cost and Latency: The Numbers Side by Side

Quick Answer: RAG has low upfront cost but ongoing retrieval overhead (vector DB hosting + retrieval ops per call). Fine-tuning has high upfront cost but minimal ongoing overhead — no retrieval step means faster inference and lower per-call cost at scale. The crossover point where fine-tuning becomes cheaper than RAG typically arrives at 1–5 million calls per month depending on model and infrastructure.

Think of the cost comparison like renting vs buying a car. RAG is renting: low commitment to get started, predictable monthly costs, someone else handles maintenance. Fine-tuning is buying: significant upfront investment, but lower ongoing costs once the asset is yours, and full control over how it performs. The right answer depends almost entirely on volume and how frequently your requirements change.

| Cost Dimension | RAG | Fine-Tuning (LoRA) |
|---|---|---|
| Upfront build cost | $50–500 (index build + initial embeddings) | $4–16 per LoRA run (open-weight) / $25–200 (OpenAI GPT fine-tune); compute only, excluding dataset curation and labelling |
| Ongoing per-call cost | Base model rate + extra input tokens from retrieved chunks | Base model rate (no retrieval overhead); some providers bill fine-tuned models at a higher per-token rate than the base model |
| Vector DB hosting | $20–500/month (Pinecone, Weaviate, Chroma Cloud) | None (no retrieval infrastructure needed) |
| Inference latency | +100–500 ms retrieval step per call | No retrieval step, so lower p50 and p99 |
| Update cost | Low: re-embed changed documents only | High: a new training run for every update |
| Technical requirement | Vector DB setup, chunking pipeline, embedding model | Labelled dataset, GPU access (or OpenAI API), training script |
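The crossover point is simple arithmetic once each approach is reduced to a fixed monthly cost plus a per-call cost: fine-tuning wins when its per-call saving, multiplied by monthly volume, exceeds its extra fixed cost. The sketch below uses entirely made-up inputs (base rate, retrieved-token count, token price, a $12,000 curation budget amortised over a year) to show the calculation, not to reproduce the 1–5 million range quoted above. Self-hosting a LoRA model would add GPU serving to `fixed_monthly`, and a provider's fine-tuned-model premium would go into `per_call`.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CostModel:
    fixed_monthly: float  # $ per month regardless of volume
    per_call: float       # $ per request


def monthly_cost(model: CostModel, calls: int) -> float:
    return model.fixed_monthly + model.per_call * calls


def crossover_calls(rag: CostModel, ft: CostModel) -> Optional[float]:
    """Monthly call volume above which fine-tuning is cheaper than RAG.

    Returns None when fine-tuning never wins (no per-call saving).
    """
    saving_per_call = rag.per_call - ft.per_call
    if saving_per_call <= 0:
        return None
    extra_fixed = ft.fixed_monthly - rag.fixed_monthly
    return max(extra_fixed, 0.0) / saving_per_call


if __name__ == "__main__":
    base = 0.002  # $ per call for the base model (hypothetical)
    rag = CostModel(
        fixed_monthly=200.0,                 # vector DB hosting (hypothetical)
        per_call=base + 1_000 * 0.50 / 1e6,  # ~1,000 retrieved tokens at $0.50/M (hypothetical)
    )
    ft = CostModel(
        fixed_monthly=12_000 / 12,  # curation + training amortised over 12 months (hypothetical)
        per_call=base,              # add any fine-tuned per-token premium here
    )
    print(f"Fine-tuning is cheaper above ~{crossover_calls(rag, ft):,.0f} calls/month")
```

With these particular inputs the crossover lands near 1.6 million calls per month; halving the retrieved context or doubling the curation budget moves it substantially, which is why the quoted range is so wide.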

The latency point is often underweighted in decision frameworks. For real-time customer-facing applications with strict response time SLAs (service level agreements), the extra 100–500ms from a vector database retrieval call can push you outside acceptable bounds. Fine-tuned models avoid this entirely because the domain knowledge is baked into the weights — no I/O before generation. For batch processing or asynchronous workflows, latency matters less than cost per unit.
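A quick way to check whether a retrieval step fits an SLA is to compare tail percentiles with and without it. The sketch below uses the nearest-rank percentile definition and synthetic latencies (generation around 600 ms, retrieval drawn uniformly from the 100–500 ms range in the table); all numbers are hypothetical, and it assumes the fine-tuned model's generation latency matches the base model's, which you should measure rather than assume.

```python
import math
import random


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile (p in 0-100) of a list of latencies."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def sla_report(gen_ms: list[float], retrieval_ms: list[float], sla_p99_ms: float) -> dict:
    """Compare p50/p99 with and without a per-call retrieval step."""
    with_rag = [g + r for g, r in zip(gen_ms, retrieval_ms)]
    return {
        "no_retrieval": (percentile(gen_ms, 50), percentile(gen_ms, 99)),
        "with_retrieval": (percentile(with_rag, 50), percentile(with_rag, 99)),
        "rag_meets_sla": percentile(with_rag, 99) <= sla_p99_ms,
        "ft_meets_sla": percentile(gen_ms, 99) <= sla_p99_ms,
    }


if __name__ == "__main__":
    rng = random.Random(42)
    # Synthetic latencies in ms (hypothetical): generation ~600 ms, retrieval 100-500 ms.
    gen = [rng.gauss(600, 80) for _ in range(1_000)]
    retrieval = [rng.uniform(100, 500) for _ in range(1_000)]
    print(sla_report(gen, retrieval, sla_p99_ms=1_000))
```

Tracking p99 rather than the mean matters here: the retrieval step widens the tail, and the tail is what SLAs are written against.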

When to Use RAG vs Fine-Tuning: Real Examples

Quick Answer: Choose RAG when your knowledge changes frequently, when you need citeable answers, or when you are starting from scratch and want fast iteration. Choose fine-tuning when you need consistent output format, brand-specific tone, or deep domain reasoning that does not change over time. When in doubt: build RAG first, add fine-tuning when you identify a specific behavioural gap RAG cannot fix.

Think of this decision like picking between a reference library and a training programme. A reference library (RAG) is the right choice when the information landscape is always changing — you do not retrain your librarian every time a new book arrives. A training programme (fine-tuning) is the right choice when you need consistent expertise and behaviour across all future situations — the consultant who has completed it responds differently forever, not just when they happen to have the right book handy.

| Scenario | Recommendation | Why |
|---|---|---|
| Internal knowledge base Q&A (company wiki, SOPs) | RAG | Docs change constantly; RAG updates without retraining |
| Consistent brand voice for all marketing copy | Fine-Tuning | Style is stable; fine-tuning encodes it in the weights |
| Product support chatbot with a large, changing catalogue | RAG | Product info changes weekly; index updates are cheap |
| JSON extraction from medical records (strict schema) | Fine-Tuning | Format is constant; around 200 examples can reach >95% schema compliance |
| Legal research with cited sources | RAG | Traceability requires retrieved source attribution |
| High-volume classification at strict latency SLA | Fine-Tuning | No retrieval step; lower p50/p99 latency at scale |
| High accuracy + specific domain style | Hybrid | Fine-tune for style/format; RAG for dynamic knowledge |
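The rules of thumb behind these scenarios can be condensed into a small decision helper. This is a sketch, not a substitute for evaluation: the four boolean flags and the `recommend` name are illustrative assumptions that mirror the Quick Answer for this section (RAG signals, fine-tuning signals, both means hybrid, and RAG is the default starting point).

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    knowledge_changes_often: bool
    needs_citations: bool
    needs_strict_format_or_voice: bool
    strict_latency_sla: bool


def recommend(uc: UseCase) -> str:
    """Map a use case to 'rag', 'fine-tuning', or 'hybrid' using simple rules of thumb."""
    wants_rag = uc.knowledge_changes_often or uc.needs_citations
    wants_ft = uc.needs_strict_format_or_voice or uc.strict_latency_sla
    if wants_rag and wants_ft:
        # Note: a hybrid still pays for retrieval, so re-check any latency SLA.
        return "hybrid"
    if wants_ft:
        return "fine-tuning"
    # Default: start with RAG (plus good prompts); add fine-tuning later only
    # when a specific behavioural gap shows up.
    return "rag"


if __name__ == "__main__":
    wiki_qa = UseCase(True, False, False, False)
    brand_voice = UseCase(False, False, True, False)
    print(recommend(wiki_qa), recommend(brand_voice))
```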

The Technical Gotcha: RAG Retrieval Quality Bottlenecks

Quick Answer: RAG output quality is capped by retrieval quality — and retrieval quality is overwhelmingly determined by your chunking strategy, not your choice of model. Most teams start with naive fixed-size chunking, which systematically destroys semantic context at chunk boundaries. Fixing chunking is the highest-ROI improvement available to most RAG systems.

The gotcha that most RAG guides do not mention: the entire RAG pipeline is only as good as the chunks it retrieves, and most teams get chunking badly wrong. If you retrieve an irrelevant chunk — or a relevant chunk that is missing the surrounding context — the model will either answer incorrectly or hallucinate the missing context. You have introduced the retrieval step and still got the answer wrong. The model is not the failure point. The index is.

According to Weaviate's guide to chunking strategies, the core problem with naive fixed-size chunking is a structural conflict: small chunks (100–256 tokens) give precise semantic focus for retrieval but lack the context needed for generation, while large chunks (1,024+ tokens) provide enough context for generation but dilute the semantic signal for retrieval. A peer-reviewed clinical decision support study from November 2025 found that adaptive chunking aligned to topic boundaries reached 87% answer accuracy versus 13% for fixed-size baselines on the same documents, a 6.7× improvement from chunking strategy alone.
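The boundary problem is easy to see on a tiny example. The sketch below contrasts a naive fixed-size chunker with one that packs whole sentences up to a budget. Words stand in for tokens, and the sentence-splitting regex is a simplifying assumption; sentence packing is only a first step toward the topic-aware chunking the study describes, which would typically also split where embedding similarity between neighbouring sentences drops.

```python
import re


def fixed_size_chunks(text: str, size: int) -> list[str]:
    """Naive chunking: cut every `size` words, wherever that happens to fall."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def sentence_chunks(text: str, max_words: int) -> list[str]:
    """Pack whole sentences into chunks of at most `max_words` words.

    A single sentence longer than the budget becomes its own oversized chunk
    instead of being cut in half.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in sentences:
        n = len(sentence.split())
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


if __name__ == "__main__":
    note = "Dose is 5 mg daily. Do not exceed 10 mg. Stop if a rash appears."
    print(fixed_size_chunks(note, 7))  # splits "Do not | exceed 10 mg" across chunks
    print(sentence_chunks(note, 10))
```

In the fixed-size output, a retriever that returns only the second chunk hands the model "exceed 10 mg" without the "Do not" that gives it meaning, which is exactly the failure mode described above.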

The practical mitigation stack, in order of impact: (1) Switch from fixed-size to semantic chunking, which splits at topic boundaries rather than at token counts. (2) Use parent-child indexing: index fine-grained child chunks for retrieval precision, but return the parent chunk (with full context) for generation. (3) Re-rank retrieved results using a cross-encoder model before injecting them into the prompt; cross-encoders score query-chunk relevance more accurately than embedding cosine similarity alone. (4) Use multi-query retrieval: rephrase the user's query in 2–3 ways, retrieve for each, then deduplicate the results. This catches relevant chunks that a single phrasing would miss. Applied together, these four improvements can often close much of the gap between a struggling RAG system and a fine-tuned model on knowledge-intensive tasks.
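Items (2) and (4) combine naturally: search small child chunks under several phrasings of the query, keep each parent's best score, and return each parent once. The sketch below assumes a toy shared-word `overlap_score` in place of embedding similarity, and it leaves out the cross-encoder re-ranking step (3), which would re-score the shortlist before it reaches the prompt. `ParentChildIndex` is a hypothetical name, and the query rephrasings are assumed to come from elsewhere (typically an LLM call).

```python
import re
from collections import Counter


def tokens(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def overlap_score(query: str, text: str) -> int:
    """Toy relevance score (count of shared words). A real system would use
    embedding similarity here, then a cross-encoder to re-rank the shortlist."""
    return sum((tokens(query) & tokens(text)).values())


class ParentChildIndex:
    """Search small child chunks, but return the larger parent for generation."""

    def __init__(self) -> None:
        self.parents: list[str] = []
        self.children: list[tuple[str, int]] = []  # (child text, parent id)

    def add_parent(self, parent: str, children: list[str]) -> None:
        pid = len(self.parents)
        self.parents.append(parent)
        self.children.extend((child, pid) for child in children)

    def search_parents(self, queries: list[str], k: int = 2) -> list[str]:
        """Multi-query retrieval: keep each parent's best child score across all
        query phrasings, so every parent appears at most once in the results."""
        best: dict[int, int] = {}
        for query in queries:
            for child, pid in self.children:
                score = overlap_score(query, child)
                if score > best.get(pid, 0):
                    best[pid] = score
        ranked = sorted(best, key=best.get, reverse=True)
        return [self.parents[pid] for pid in ranked[:k]]


if __name__ == "__main__":
    index = ParentChildIndex()
    index.add_parent(
        "Refund policy. Refunds take five business days. Contact billing for exceptions.",
        ["Refunds take five business days.", "Contact billing for exceptions."],
    )
    index.add_parent(
        "Holiday policy. The office closes on public holidays.",
        ["The office closes on public holidays."],
    )
    print(index.search_parents(["how long do refunds take", "refund business days"]))
```

The child chunks stay small enough to match precisely, while the model still receives the full parent section, which is how parent-child indexing sidesteps the small-versus-large chunk conflict.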

"The lesson from 2025 production deployments is consistent: teams that invested in chunking quality and retrieval strategy saw larger accuracy gains than teams that switched to a more expensive model. The retrieval layer is the leverage point." — Production RAG: The Strategies That Actually Work, Towards AI

One final nuance: fine-tuning does not eliminate retrieval quality problems in hybrid systems. When you combine fine-tuning and RAG, the fine-tuned model can generate more plausible-sounding incorrect answers from bad chunks — because fine-tuning increased its confidence and fluency. Bad retrieval becomes harder to detect after fine-tuning. This is why evaluation (covered in the companion article on how AI systems actually work) should be built into your pipeline before you add any customisation layer, not after.
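A retrieval evaluation harness can be small. The sketch below computes two standard retrieval metrics, recall@k and mean reciprocal rank (MRR), over a labelled set of queries paired with the chunk IDs a correct answer needs. The `retriever` argument is a hypothetical interface (any function from a query to a ranked list of chunk IDs); adapt it to your vector store client. Because it scores retrieval directly, it catches bad chunks regardless of how fluent the downstream model sounds.

```python
from typing import Callable


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant chunk IDs that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, chunk_id in enumerate(retrieved, start=1):
        if chunk_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(
    retriever: Callable[[str], list[str]],
    labelled: list[tuple[str, set[str]]],
    k: int = 5,
) -> dict[str, float]:
    """Average recall@k and MRR over (query, relevant chunk IDs) pairs."""
    recalls: list[float] = []
    rrs: list[float] = []
    for query, relevant in labelled:
        results = retriever(query)
        recalls.append(recall_at_k(results, relevant, k))
        rrs.append(reciprocal_rank(results, relevant))
    n = len(labelled) or 1
    return {f"recall@{k}": sum(recalls) / n, "mrr": sum(rrs) / n}
```

Running the same labelled set before and after each change (new chunking, re-ranker, fine-tuned generator) shows whether retrieval actually improved, independently of how confident the answers read.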

Frequently Asked Questions

What is the difference between fine-tuning and RAG?

Fine-tuning permanently updates a model's weights using your labelled dataset, changing how it behaves in every future response. RAG leaves the model unchanged and instead feeds it relevant documents from an external index at inference time. Fine-tuning changes how the model thinks; RAG changes what it reads before answering.

When should I use RAG instead of fine-tuning?

Use RAG when your knowledge base changes frequently, when you need cited traceable answers, or when you want to get started quickly with low upfront cost. RAG is almost always the better first step for knowledge-intensive applications.

How much data do I need to fine-tune an LLM?

For classification and routing tasks with LoRA, 100–300 high-quality examples are typically sufficient. Structured data extraction needs roughly 200–500, content generation in a specific style 500–2,000, and deep domain specialisation 1,000–5,000. Quality matters far more than quantity.

What is the biggest RAG mistake teams make?

Poor chunking strategy. Naive fixed-size chunking destroys semantic context at chunk boundaries. Semantic chunking, which splits at topic boundaries, can improve answer accuracy substantially: one November 2025 clinical study reported 87% accuracy versus 13% for fixed-size chunking on the same documents, roughly a 6.7× improvement.

Can I use both fine-tuning and RAG together?

Yes — hybrid systems are the 2026 production default. Fine-tune for output format and domain style; use RAG for dynamic knowledge injection at inference time. The fine-tuned model produces better-structured answers from retrieved context than a general-purpose model would.

What is LoRA and how does it make fine-tuning cheaper?

LoRA (Low-Rank Adaptation) trains only small adapter matrices rather than all model weights, reducing trainable parameters by 90–99%. A 7B model can be fine-tuned in hours on a single rented GPU for $4–16, versus days on a multi-GPU cluster for full fine-tuning.

aicourses.com Verdict

After benchmarking both approaches across a wide range of production use cases in 2025–2026, our view is consistent: RAG is the right starting point for the vast majority of knowledge-customisation problems. It is faster to prototype, easier to maintain, more citeable, and cheaper to update. The teams that reach for fine-tuning first almost always discover they had a prompt engineering or chunking problem, not a model capability problem. Fix those first.

When fine-tuning is genuinely warranted (consistent output format, brand voice, deep domain reasoning style), LoRA has made the economics accessible. In compute terms, a 500-example training run on a 7B model costs less than a monthly Pinecone subscription; the real work is curating those 500 examples. The calculus has shifted. Fine-tuning is no longer a luxury that only large teams can afford; it is a sensible tool for anyone with a well-defined, stable behavioural requirement, a curated dataset, and a few hours of engineering time.

The next article in the Applied AI Skills cluster covers the layer that determines how well you use either technique: prompt engineering. Writing structured prompts that reliably get the output you need from a fine-tuned or RAG-augmented model is the skill that multiplies the value of every customisation investment you make. Build that first.
