Developers working on data-heavy products are now expected to move faster while maintaining strict correctness. Generative AI helps, but only when workflows are explicit and review standards remain high.

This article focuses on real engineering use cases: drafting SQL, validating transformations, reducing orchestration toil, and keeping governance intact.

If you apply the playbook section by section, you can usually identify one high-friction data task to automate safely within the first sprint. The goal is not full autonomy. The goal is a repeatable draft-and-verify loop that saves engineering hours while preserving data trust.

What Data Workflow Tasks Developers Can Offload to AI

Quick Answer: Developers can safely offload repetitive query drafting, schema exploration, and first-pass transformation logic while keeping final validation in human hands.

Data engineering work usually bottlenecks on repetitive translation tasks: business questions to SQL, schema changes to transformation code, and pipeline incidents to root-cause notes. AI helps most in those translation layers. It is like having a strong data analyst sitting next to your terminal who can draft quickly, but still needs your sign-off before a query reaches production.

The most valuable handoff boundary is clear: AI drafts, humans verify. In practice, the AI generates query skeletons, suggests joins, and writes baseline tests for transformations, while engineers validate performance, semantics, and governance requirements. Teams that blur this boundary often ship plausible but wrong outputs, which is why explicit review gates matter.

A useful weekly habit is to keep a short log of where AI drafts were accepted versus rejected. In many teams, a pattern emerges quickly: acceptance rates are high on boilerplate transformations and low on domain-specific metric logic. That insight helps you route AI effort toward high-yield work instead of forcing it into every task.
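Here is a minimal sketch of that log. It assumes a hypothetical format in which each reviewed draft is recorded as a `(task_category, accepted)` pair. The categories and entries below are illustrative, not real data:

```python
from collections import defaultdict

# Hypothetical weekly log: one (task_category, accepted) entry per reviewed AI draft.
weekly_log = [
    ("boilerplate_transform", True),
    ("boilerplate_transform", True),
    ("boilerplate_transform", False),
    ("metric_logic", False),
    ("metric_logic", False),
    ("metric_logic", True),
    ("schema_docs", True),
]

def acceptance_rates(log):
    """Return {category: fraction of drafts accepted}."""
    counts = defaultdict(lambda: [0, 0])  # category -> [accepted, total]
    for category, accepted in log:
        counts[category][1] += 1
        if accepted:
            counts[category][0] += 1
    return {cat: acc / total for cat, (acc, total) in counts.items()}

# Highest acceptance first: these categories are where AI effort pays off.
for category, rate in sorted(acceptance_rates(weekly_log).items(), key=lambda kv: -kv[1]):
    print(f"{category:<22} {rate:.0%}")
```

Sorting by acceptance rate makes the routing decision visible. Categories at the bottom are candidates for better prompt context or for staying fully manual.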

- Use AI for first-pass SQL draft generation.

- Use AI for schema documentation and lineage summaries.

- Use AI for anomaly explanation drafts during pipeline incidents.

- Do not auto-merge AI-generated transformations without data quality checks.

To connect this workflow with your existing stack, link the rollout to the pillar guide, AI debugging workflows, AI code review controls, model behavior mechanics, and prompt templates for developers. That internal map keeps teams from treating each article as an isolated tactic.

Common Data Workflow Bottlenecks and Where AI Actually Helps

Quick Answer: AI helps where bottlenecks are language-heavy and repetitive, but offers less value in tasks that demand strict domain semantics without context.

Most data teams do not struggle because they lack SQL skills; they struggle because too much context is spread across tickets, dashboards, and undocumented business rules. AI behaves like a context compressor. It can summarize lineage, identify likely dependency breaks, and draft transformation alternatives when requirements shift mid-sprint.

Where AI is weak is ambiguous semantics. If "active customer" means one thing in finance and another in growth analytics, the model cannot resolve that conflict unless your prompt includes policy context. In practice, that means your data contracts and metric definitions must be written down before expecting high-quality automation.
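One way to make that policy context explicit is to look up the governing definition before prompting, and to refuse to draft when none exists. This sketch assumes a hypothetical in-code metric dictionary. In practice, the definitions would come from your documented data contracts:

```python
# Hypothetical metric dictionary. In practice, load it from your documented
# data contracts so each definition has an owning team.
METRIC_DICTIONARY = {
    "active_customer": {
        "finance": "a customer with at least one paid invoice in the last 90 days",
        "growth": "a customer with at least one login in the last 30 days",
    },
}

def build_sql_prompt(question, metric, domain, dictionary=METRIC_DICTIONARY):
    """Embed the governing metric definition so the model does not have to guess it."""
    definition = dictionary.get(metric, {}).get(domain)
    if definition is None:
        # Refuse to draft instead of letting the model invent semantics.
        raise KeyError(f"no approved definition of {metric!r} for domain {domain!r}")
    return (
        f"Task: write SQL that answers: {question}\n"
        f"Policy context: in the {domain} domain, {metric} means {definition}.\n"
        "Use only this definition and list any additional assumptions you make."
    )

print(build_sql_prompt("How many active customers did we have in May?",
                       "active_customer", "finance"))
```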

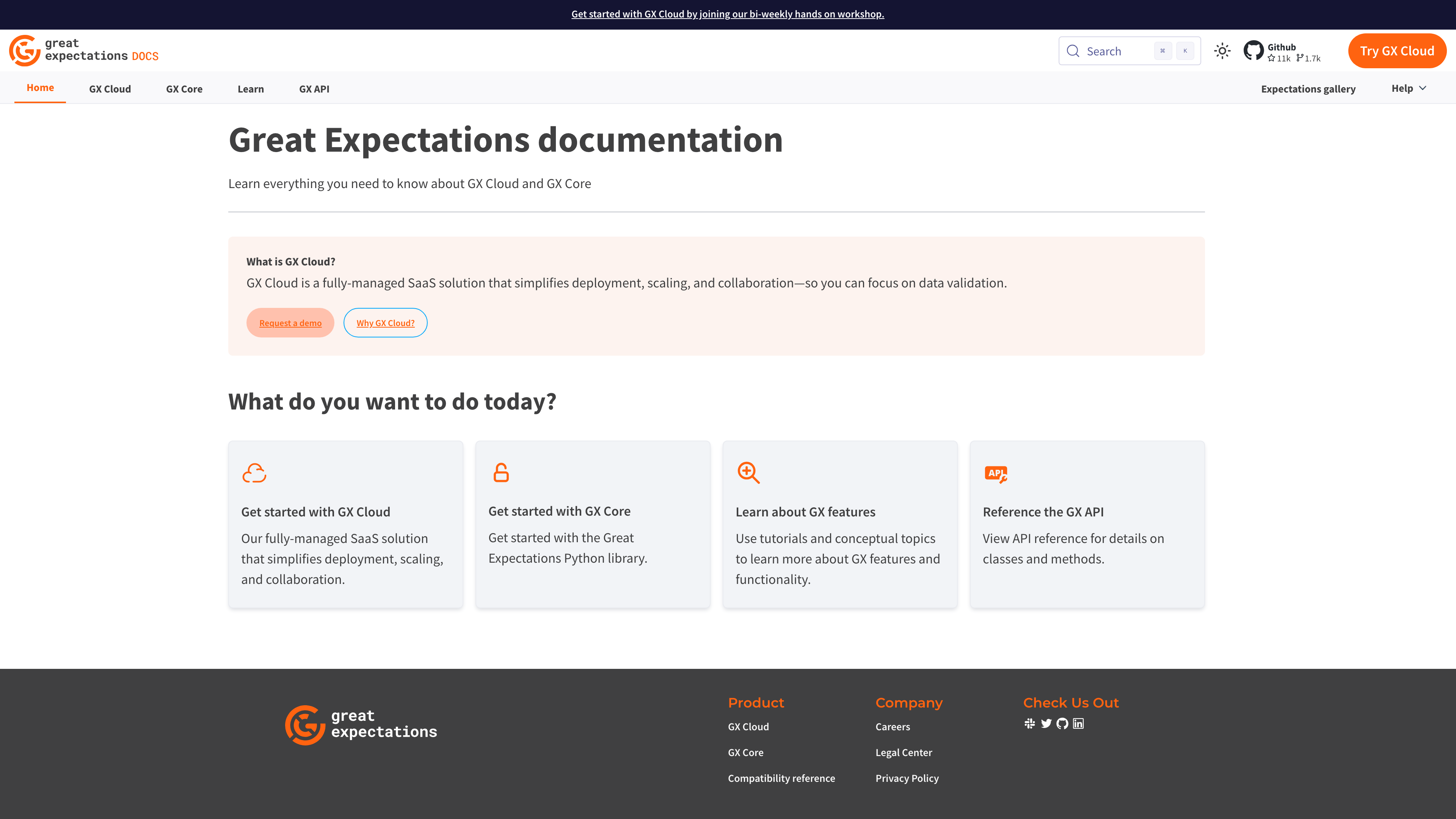

| Bottleneck | AI Leverage | Operational Guardrail |
|---|---|---|
| Slow query prototyping | High | Require execution plan review before production |
| Undefined business metric semantics | Low | Enforce metric dictionary ownership |
| Incident handoff between analytics and platform | High | Standardize incident summary template |

This week, pick one high-friction task and run a five-day experiment with explicit before-and-after timing. If cycle time improvement is below 15 percent, inspect prompt context quality before blaming the model.
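The arithmetic for that check is simple. This sketch uses medians so that one unusually slow task does not dominate the result. The timings are hypothetical:

```python
from statistics import median

def cycle_time_improvement(before_minutes, after_minutes):
    """Percent reduction in median cycle time; positive means faster."""
    b, a = median(before_minutes), median(after_minutes)
    return (b - a) / b * 100

# Hypothetical per-task timings (minutes) from a five-day experiment.
before = [95, 110, 88, 120, 102]
after = [80, 92, 85, 97, 90]

improvement = cycle_time_improvement(before, after)
print(f"Median cycle time improvement: {improvement:.1f}%")
if improvement < 15:
    print("Below 15%: review prompt context quality before blaming the model.")
```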

AI-Assisted SQL Generation and Performance Tuning

Quick Answer: AI can generate strong SQL first drafts and tuning ideas, but query plans and cardinality assumptions must still be reviewed by engineers.

SQL assistance is where most teams see immediate wins because the task is highly structured. Think of AI as an auto-complete system that understands intent across tables rather than just syntax. It can propose joins, window functions, and filters quickly, which frees engineers to focus on correctness and performance.

Performance tuning is different. A query that looks elegant can still explode in cost if partition pruning fails or join order is poor. This is why developers should require the model to output both SQL and the reasoning behind expected scan behavior. That small requirement exposes weak assumptions before compute bills spike.
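Here is a minimal sketch of checking a draft against its stated scan behavior. It uses SQLite from the Python standard library as a stand-in. Warehouses expose their own EXPLAIN output and prune partitions rather than use indexes, so treat the index here as an analogy for a partition filter. The table and index names are hypothetical:

```python
import sqlite3

def full_scans(conn, sql, params=()):
    """Return plan steps that read a whole table instead of using an index.
    Rows from SQLite's EXPLAIN QUERY PLAN have four columns; the last is the detail text."""
    plan = conn.execute(f"EXPLAIN QUERY PLAN {sql}", params).fetchall()
    return [row[3] for row in plan if row[3].startswith("SCAN")]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (event_day TEXT, user_id INTEGER, amount REAL)")
conn.execute("CREATE INDEX idx_events_day ON events (event_day)")

# Sargable filter: the planner can search the index on event_day.
good = "SELECT user_id, amount FROM events WHERE event_day = ?"
# Wrapping the column in a function hides it from the index (non-sargable).
bad = "SELECT user_id, amount FROM events WHERE substr(event_day, 1, 7) = ?"

print(full_scans(conn, good, ("2024-05-01",)))  # expect no SCAN steps
print(full_scans(conn, bad, ("2024-05",)))      # expect a full-table SCAN step
```

Suppose the model claimed the second query would read only one month of data. The full-table SCAN step contradicts that claim, and exposing such weak assumptions is exactly what the review is for.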

Worked example: a growth analytics team used AI to refactor a session attribution query from 190 lines to 122 lines. Initial output ran 28 percent slower due to an unnecessary cross join. After forcing the model to explain join selectivity and testing against execution plans, they reduced runtime by 34 percent versus the original query while keeping logic readable.

Another practical trick is to keep a 'query anti-pattern' prompt appendix that lists mistakes your team sees often, such as Cartesian joins, non-sargable predicates, or missing partition filters. Including that appendix in prompts tends to improve first-draft quality because the model gets negative examples, not just goals.
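Here is a sketch of one appendix serving two purposes, assuming a hypothetical team list. The same entries become negative examples in the prompt and rough regex checks on the returned draft. The regexes are heuristics that will miss cases, so they complement execution plan review rather than replace it:

```python
import re

# Hypothetical team appendix: each entry pairs a prompt note (a negative example
# for the model) with a rough regex that flags the same mistake in a draft.
ANTI_PATTERNS = [
    ("cartesian_join", r"\bCROSS\s+JOIN\b",
     "Do not use CROSS JOIN unless the task explicitly needs every combination."),
    ("non_sargable_predicate", r"\bWHERE\b[^;]*\b(?:LOWER|UPPER|SUBSTR|CAST|DATE)\s*\(",
     "Do not wrap filtered columns in functions; compare raw columns to constants."),
]
PARTITION_COLUMN = "event_day"  # assumption: fact tables are partitioned by this column

def prompt_appendix():
    """Render the appendix text that is pasted into every SQL-generation prompt."""
    notes = [note for _, _, note in ANTI_PATTERNS]
    notes.append(f"Always filter on the partition column {PARTITION_COLUMN}.")
    return "Known anti-patterns to avoid:\n" + "\n".join(f"- {n}" for n in notes)

def flag_draft(sql):
    """Heuristic review of a draft; not a substitute for the query plan."""
    found = [name for name, pattern, _ in ANTI_PATTERNS
             if re.search(pattern, sql, re.IGNORECASE)]
    where = re.search(r"\bWHERE\b(.*)", sql, re.IGNORECASE | re.DOTALL)
    if where is None or PARTITION_COLUMN not in where.group(1):
        found.append("missing_partition_filter")
    return found

draft = "SELECT s.user_id FROM sessions s CROSS JOIN campaigns c WHERE LOWER(c.name) = 'spring'"
print(prompt_appendix())
print(flag_draft(draft))
```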

- Request SQL plus expected row-count behavior by stage.

- Run EXPLAIN or execution plan review before accepting output.

- Add one edge-case test for null handling and one for duplicate keys.

- Track cost per query in dashboards to catch regressions quickly.
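The null-handling and duplicate-key tests from the checklist can be sketched with SQLite. Here `order_totals` is a hypothetical table standing in for the output of an AI-drafted query:

```python
import sqlite3

def edge_case_failures(conn, table, key, required_columns):
    """Check a result table for duplicate keys and NULLs in required columns.
    Names are interpolated directly into SQL, so pass only trusted identifiers."""
    failures = []
    dupes = conn.execute(
        f"SELECT {key} FROM {table} GROUP BY {key} HAVING COUNT(*) > 1"
    ).fetchall()
    if dupes:
        failures.append(f"duplicate {key}: {[row[0] for row in dupes]}")
    for col in required_columns:
        (nulls,) = conn.execute(
            f"SELECT COUNT(*) FROM {table} WHERE {col} IS NULL"
        ).fetchone()
        if nulls:
            failures.append(f"{nulls} NULL value(s) in {col}")
    return failures

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE order_totals (order_id INTEGER, amount REAL)")
conn.executemany("INSERT INTO order_totals VALUES (?, ?)",
                 [(1, 10.0), (2, None), (2, 5.0)])
print(edge_case_failures(conn, "order_totals", "order_id", ["amount"]))
```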

AI for Data Validation and Transformation Quality

Quick Answer: AI improves transformation reliability when paired with explicit expectations, test templates, and automated anomaly checks.

Transformation pipelines break for boring reasons: schema drift, null floods, and hidden business-rule changes. AI can catch these patterns early when it is fed clear expectations and historical failure signatures. The analogy here is quality control on a manufacturing line: sensors detect anomalies quickly, but acceptance criteria must be predefined.

Great Expectations and dbt testing patterns already provide the structure AI needs. Ask the model to draft expectation suites from table contracts, then require engineers to approve thresholds and criticality levels. That blend of machine speed and human policy setting keeps data quality work practical instead of theoretical.
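The division of labor can be sketched in plain Python. This is not the Great Expectations or dbt API. It only illustrates the same idea under assumed names: the machine drafts checks from a hypothetical contract with conservative defaults, and an engineer sets severity and tolerances before anything runs.

```python
from dataclasses import dataclass

@dataclass
class Expectation:
    column: str
    check: str               # "not_null" or "unique"
    max_failure_rate: float  # tolerated fraction of failing rows; set by an engineer
    severity: str            # "critical" or "warn"; set by an engineer

# Hypothetical table contract for an orders table.
CONTRACT = {
    "order_id": {"required": True, "unique": True},
    "customer_id": {"required": True, "unique": False},
}

def draft_suite(contract):
    """Machine-drafted checks: zero tolerance and 'warn' severity until a human reviews them."""
    suite = []
    for column, rules in contract.items():
        if rules["required"]:
            suite.append(Expectation(column, "not_null", 0.0, "warn"))
        if rules["unique"]:
            suite.append(Expectation(column, "unique", 0.0, "warn"))
    return suite

def run_suite(rows, suite):
    """Return (column, check, severity, passed) for each expectation."""
    results = []
    for exp in suite:
        values = [row.get(exp.column) for row in rows]
        if exp.check == "not_null":
            failing = sum(v is None for v in values)
        else:  # "unique": rows beyond the first occurrence of each value
            failing = len(values) - len(set(values))
        rate = failing / len(values) if values else 0.0
        results.append((exp.column, exp.check, exp.severity, rate <= exp.max_failure_rate))
    return results

suite = draft_suite(CONTRACT)
# Human review step: criticality and tolerances are policy decisions, not model output.
for exp in suite:
    if exp.column == "order_id":
        exp.severity = "critical"
    elif exp.check == "not_null":
        exp.max_failure_rate = 0.01

rows = [
    {"order_id": 1, "customer_id": 7},
    {"order_id": 2, "customer_id": None},
    {"order_id": 2, "customer_id": 9},
]
results = run_suite(rows, suite)
for column, check, severity, passed in results:
    if not passed:
        print(f"[{severity}] {check} failed on {column}")
```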

| Technical Requirement | Potential Risk | First Step |
|---|---|---|
| Declared data contracts | Validation rules become inconsistent across teams | Publish contract files with owners for core tables |
| Automated expectation execution | Validation runs only during incidents | Run tests on every transformation schedule |
| Failure routing policy | Anomalies are seen but not acted on | Route critical failures to on-call with clear runbook links |

Technical gotcha: teams often overfit thresholds to recent data and miss slow-moving drift. The practical fix is to review threshold ranges monthly and include seasonal context in anomaly baselines.
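To illustrate why seasonal context matters, this sketch compares a flat 28-day baseline with a same-weekday baseline. The daily row counts are hypothetical, and the z-score limit of 3 is an assumption to tune per table:

```python
from statistics import mean, stdev

def zscore(value, baseline):
    """Standard score of `value` against a baseline sample."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return 0.0 if value == mu else float("inf")  # any change from a constant baseline
    return (value - mu) / sigma

def same_phase_baseline(history, period=7, cycles=4):
    """Values at the same phase (e.g. same weekday) in each of the last `cycles` periods.
    `history` holds daily values, oldest first, ending the day before the checked value."""
    if len(history) < period * cycles:
        raise ValueError("not enough history for the requested number of cycles")
    return [history[-period * k] for k in range(1, cycles + 1)]

# Hypothetical daily row counts: weekdays near 1,000, weekends near 400,
# with a small upward trend each week. History starts on a Monday.
week = [1000, 1010, 990, 1005, 995, 400, 410]
history = [v + 5 * w for w in range(4) for v in week]
today = 420  # a Monday that arrived with weekend-sized volume

flat_z = zscore(today, history)                          # baseline: last 28 days
seasonal_z = zscore(today, same_phase_baseline(history))  # baseline: last 4 Mondays
print(f"flat z = {flat_z:.1f}, seasonal z = {seasonal_z:.1f}")
print("flagged at |z| > 3:", abs(flat_z) > 3, abs(seasonal_z) > 3)
```

The flat baseline absorbs the normal weekday/weekend swing into its variance, so a Monday with weekend-sized volume looks ordinary. The same-weekday baseline flags it immediately.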

ETL Orchestration Support and Integration Patterns

Quick Answer: AI can support extract-transform-load handoffs by drafting dependency-aware schedules, failure summaries, and retry recommendations for engineers to approve.

Orchestration is where small mistakes propagate fast. A single delayed upstream task can break downstream dashboards, model training jobs, and customer-facing features. AI helps by reading pipeline metadata and proposing schedule changes or retry windows before incidents become business disruptions.

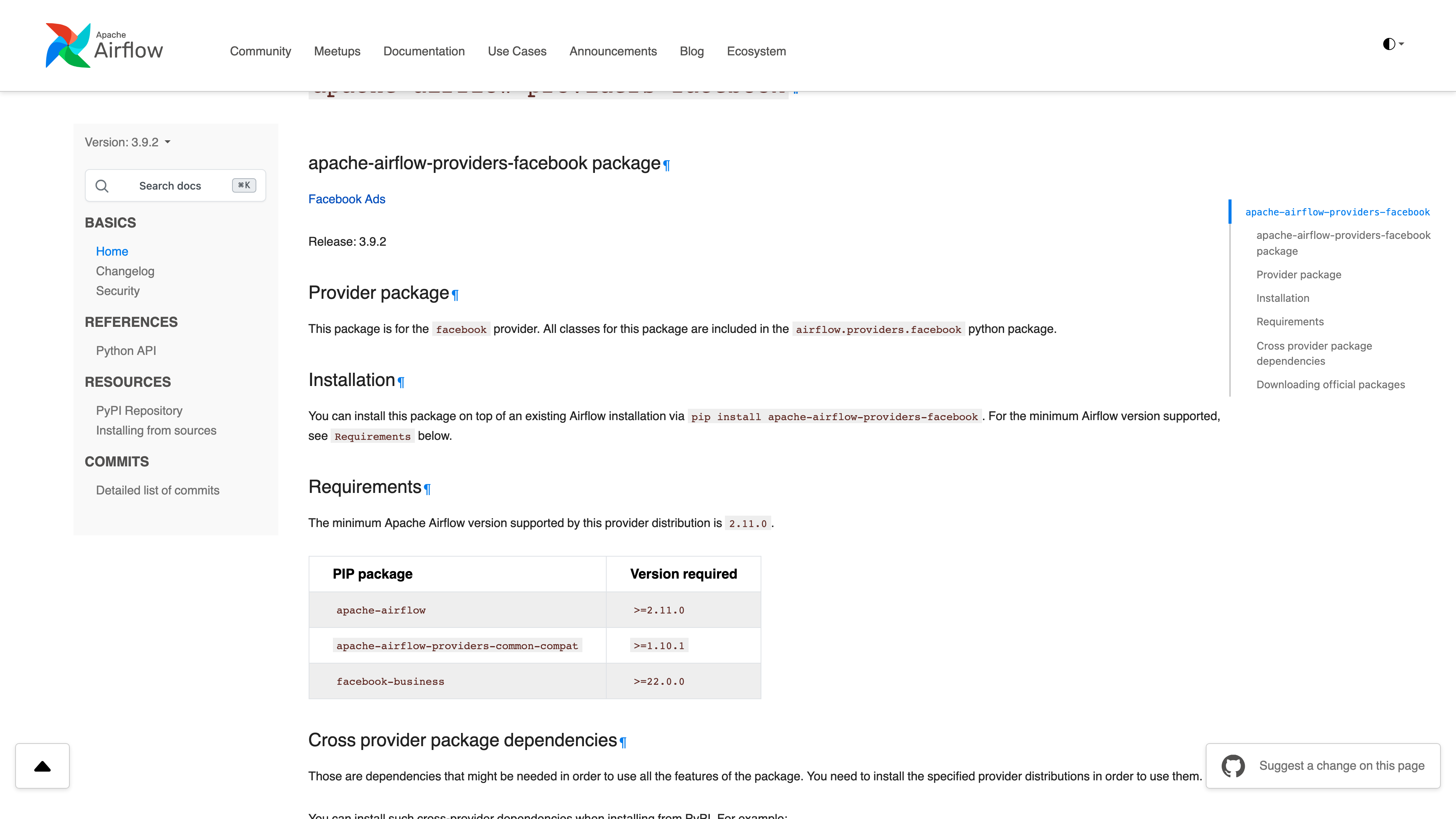

Treat AI suggestions as operational hints, not autonomous control. For example, in Apache Airflow, a model can draft a retry strategy based on historical task duration variance. Engineers should still approve resource limits and dependency overrides, because those settings directly affect platform stability.
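Here is a sketch of what such a draft might look like, written without importing Airflow. The dictionary keys (`retries`, `retry_delay`, `retry_exponential_backoff`, `max_retry_delay`, `execution_timeout`) are real `BaseOperator` arguments that can be passed through a DAG's `default_args`. The mapping from duration statistics to values, however, is an illustrative heuristic, not an Airflow recommendation:

```python
from datetime import timedelta
from statistics import mean, stdev

def draft_retry_args(durations_sec, k=3.0):
    """Draft Airflow-style default_args from historical run durations in seconds.

    Heuristic (an assumption, not Airflow guidance): a run slower than
    mean + k * stdev is treated as hung, so it times out and is retried
    with exponential backoff.
    """
    mu, sigma = mean(durations_sec), stdev(durations_sec)
    return {
        "retries": 3,
        "retry_delay": timedelta(minutes=5),
        "retry_exponential_backoff": True,
        "max_retry_delay": timedelta(minutes=30),
        "execution_timeout": timedelta(seconds=round(mu + k * sigma)),
    }

# Hypothetical durations (seconds) from the last five successful runs.
suggested = draft_retry_args([600, 620, 580, 640, 560])
print(suggested["execution_timeout"])
```

An engineer still reviews the draft, along with pool and concurrency settings, before it reaches a production DAG.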

| Integration Layer | AI Contribution | Human Approval Point |
|---|---|---|
| Orchestrator scheduling | Suggests retry/backoff based on historical failures | SRE approves concurrency and queue limits |
| Warehouse transformations | Drafts dependency-aware SQL task chains | Data engineer verifies lineage and cost impact |
| Model serving handoff | Summarizes freshness and feature drift state | ML owner validates release readiness |

Run a staged deployment pattern: sandbox, low-risk pipeline, then critical pipeline. Promote only after each stage meets reliability and cost thresholds. This gradual ramp is what separates sustainable automation from weekend firefighting.
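The promotion rule can be written as a small gate. The stage names come from the pattern above. The thresholds and report fields are hypothetical placeholders:

```python
from dataclasses import dataclass

STAGES = ["sandbox", "low_risk_pipeline", "critical_pipeline"]

# Hypothetical gate thresholds; set them per pipeline family.
MIN_SUCCESS_RATE = 0.99
MAX_COST_PER_RUN_USD = 4.00

@dataclass
class StageReport:
    success_rate: float       # fraction of scheduled runs that succeeded in this stage
    cost_per_run_usd: float   # average compute cost per run in this stage

def next_stage(current: str, report: StageReport) -> str:
    """Promote one stage only when both gates pass; otherwise stay where you are."""
    meets_gates = (report.success_rate >= MIN_SUCCESS_RATE
                   and report.cost_per_run_usd <= MAX_COST_PER_RUN_USD)
    i = STAGES.index(current)
    if meets_gates and i + 1 < len(STAGES):
        return STAGES[i + 1]
    return current

print(next_stage("sandbox", StageReport(success_rate=0.995, cost_per_run_usd=2.10)))
print(next_stage("low_risk_pipeline", StageReport(success_rate=0.97, cost_per_run_usd=1.80)))
```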

Governance, Correctness, and Prompt Templates Teams Can Reuse

Quick Answer: Governance in AI data workflows means every generated query and transformation is traceable, testable, and linked to an owner.

Data workflow automation succeeds when prompts become standardized operating assets. It is similar to having query style guides and migration templates: consistency reduces errors, onboarding time, and review friction. Without templates, every engineer reinvents prompting patterns and quality drifts sprint to sprint.

Start with three template families: SQL generation, validation plan creation, and incident summarization. Each template should include objective, constraints, required output format, and a verification checklist. This format keeps model output predictable and reviewable even as teams scale.

Teams that operationalize this well usually appoint one rotating 'prompt maintainer' each sprint. That person curates templates, archives low-performing variants, and documents which instructions improved acceptance rates. Treating prompt assets as shared infrastructure is the hidden lever behind durable quality.

- Template 1: Generate SQL with explicit join assumptions and expected row counts.

- Template 2: Produce validation tests with severity labels and owner routing.

- Template 3: Summarize pipeline incident with timeline, suspected trigger, and remediation options.
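Here is a minimal sketch of a template object that carries the four parts described above (objective, constraints, required output format, verification checklist), filled in for Template 1. The class and field names are assumptions, not a standard format:

```python
from dataclasses import dataclass

@dataclass
class PromptTemplate:
    name: str
    objective: str               # may contain {placeholders} filled at render time
    constraints: list
    output_format: str
    verification_checklist: list
    version: str = "1.0"         # bump on every edit; keep the template in source control

    def render(self, **task_inputs) -> str:
        """Assemble the four required sections into one prompt string."""
        lines = [f"{self.name} (v{self.version})",
                 "Objective: " + self.objective.format(**task_inputs),
                 "Constraints:"]
        lines += [f"- {c}" for c in self.constraints]
        lines.append("Required output format: " + self.output_format)
        lines.append("Verification checklist (answer each item):")
        lines += [f"- {item}" for item in self.verification_checklist]
        return "\n".join(lines)

SQL_GENERATION = PromptTemplate(
    name="Template 1: SQL generation",
    objective="Write SQL that answers: {question}",
    constraints=["Use only the tables in the provided schema excerpt.",
                 "State every join key and whether it is unique on each side."],
    output_format="One SQL block, followed by expected row counts for each CTE or stage.",
    verification_checklist=["Are all join assumptions stated explicitly?",
                            "Is an expected row count given for every stage?"],
)

print(SQL_GENERATION.render(question="weekly active users by region"))
```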

If your team adopts just one rule this month, make it this: no generated SQL ships without tests and owner sign-off. That single control captures most correctness and accountability risks while preserving speed.
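As a sketch, that rule could run as a pre-merge check. The `SqlChange` record is hypothetical. In practice, its fields would come from your repository metadata and review tool:

```python
from dataclasses import dataclass, field

@dataclass
class SqlChange:
    path: str
    ai_generated: bool
    owner: str
    test_paths: list = field(default_factory=list)
    approved_by: set = field(default_factory=set)

def blocking_reasons(change: SqlChange) -> list:
    """Return why a generated SQL change must not merge; an empty list means it may proceed."""
    if not change.ai_generated:
        return []  # human-written changes follow the normal review path
    reasons = []
    if not change.test_paths:
        reasons.append("no tests cover this query")
    if change.owner not in change.approved_by:
        reasons.append(f"owner {change.owner} has not signed off")
    return reasons

change = SqlChange("models/session_attribution.sql", ai_generated=True,
                   owner="growth-data", approved_by={"reviewer-a"})
print(blocking_reasons(change))
```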


aicourses.com Verdict

Quick Answer: These workflows produce the best outcomes when teams treat AI as a reliability and delivery multiplier, not a replacement for engineering judgment.

Generative AI can materially improve data workflow velocity, but only if correctness checks are non-negotiable. Teams that combine AI drafting with strong validation get the upside without creating long-term trust debt.

Your first move should be to standardize prompt templates and test gates, then roll AI assistance into one pipeline family at a time. That keeps implementation tractable and measurable.

Next, pair this with AI DevOps and SRE automation so your data and reliability loops improve together.

FAQ

Quick Answer: These are the questions teams typically ask when they move from experiments to production adoption and governance.

Can AI write production SQL safely?

It can draft production-quality SQL, but engineers should always review execution plans and edge cases before release.

What is the biggest risk in AI data workflows?

Plausible but incorrect logic that passes superficial review. Strong validation gates are essential.

Do I need new tooling to start?

Not always. Many teams begin with existing orchestrators and warehouse tooling plus prompt templates.

How do I measure impact?

Track cycle time, query correctness defects, and incident-to-resolution time in data pipelines.

Should I automate incident summaries for data pipelines?

Yes, it is often a high-return use case because handoff clarity improves quickly.

How do I keep prompts from becoming messy over time?

Version prompts in source control and review them the same way you review reusable code artifacts.

