This technical deep dive explains what actually happens under the hood when an AI coding assistant returns a suggestion.

If you understand these mechanics, you can improve quality, reduce cost, and avoid the common hallucination traps that hurt production teams.

Context Windows Explained

Quick Answer: A context window is the maximum amount of text and code a model can consider in one generation pass, which directly affects complex multi-file tasks.

Think of context windows like RAM for reasoning. If key files do not fit, the assistant sees an incomplete system and suggestions become brittle.

In practice, teams should minimize irrelevant prompt noise and provide concise architecture context before requesting large refactors.
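One way to act on this is to rank candidate files and include only what fits a token budget. The sketch below is a minimal illustration, not how any particular assistant does it: the file names and relevance scores are hypothetical, and the four-characters-per-token estimate is a rough heuristic rather than a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    path: str         # hypothetical file name, for illustration only
    text: str
    relevance: float  # assumed to come from some earlier ranking step


def estimate_tokens(text: str) -> int:
    """Rough heuristic: about 4 characters per token. Real tokenizers differ."""
    return max(1, len(text) // 4)


def pack_context(snippets: list[Snippet], budget_tokens: int) -> list[Snippet]:
    """Greedily keep the most relevant snippets that still fit the budget."""
    chosen: list[Snippet] = []
    used = 0
    for snippet in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        cost = estimate_tokens(snippet.text)
        if used + cost <= budget_tokens:
            chosen.append(snippet)
            used += cost
    return chosen


candidates = [
    Snippet("payments/api.py", "x" * 400, relevance=0.9),     # about 100 tokens
    Snippet("payments/models.py", "x" * 800, relevance=0.7),  # about 200 tokens
    Snippet("docs/changelog.md", "x" * 2000, relevance=0.1),  # about 500 tokens
]
selected = pack_context(candidates, budget_tokens=350)
print([s.path for s in selected])
```

With a 350-token budget, the two payment files fit and the low-relevance changelog is left out, which is the kind of noise reduction described above.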

Token Limits and Costs

Quick Answer: Token usage is the real unit of cost for most AI coding interactions, so long prompts and repeated retries can dominate spend.

Think of tokens as metered compute. Better prompt structure lowers token waste and speeds up response time.
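To make the cost effect concrete, here is a small arithmetic sketch. The per-million-token prices are invented to keep the numbers easy to follow and do not reflect any vendor's pricing; the sketch also assumes each retry resends the full prompt.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_million: float,
                 output_price_per_million: float) -> float:
    """Cost in dollars of one request under per-million-token pricing."""
    return (input_tokens * input_price_per_million
            + output_tokens * output_price_per_million) / 1_000_000


# Hypothetical prices, chosen only for illustration.
INPUT_PRICE = 2.00   # dollars per million input tokens (assumed)
OUTPUT_PRICE = 8.00  # dollars per million output tokens (assumed)

verbose = request_cost(12_000, 500, INPUT_PRICE, OUTPUT_PRICE)
concise = request_cost(3_000, 500, INPUT_PRICE, OUTPUT_PRICE)

# Assumption: every attempt resends the whole prompt, so retries multiply cost.
verbose_with_retries = verbose * 3

print(f"verbose prompt, 3 attempts: ${verbose_with_retries:.4f}")
print(f"concise prompt, 1 attempt:  ${concise:.4f}")
```

Under these assumed numbers, the long prompt with two retries costs several times what a single concise request does, even though the output size is identical.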

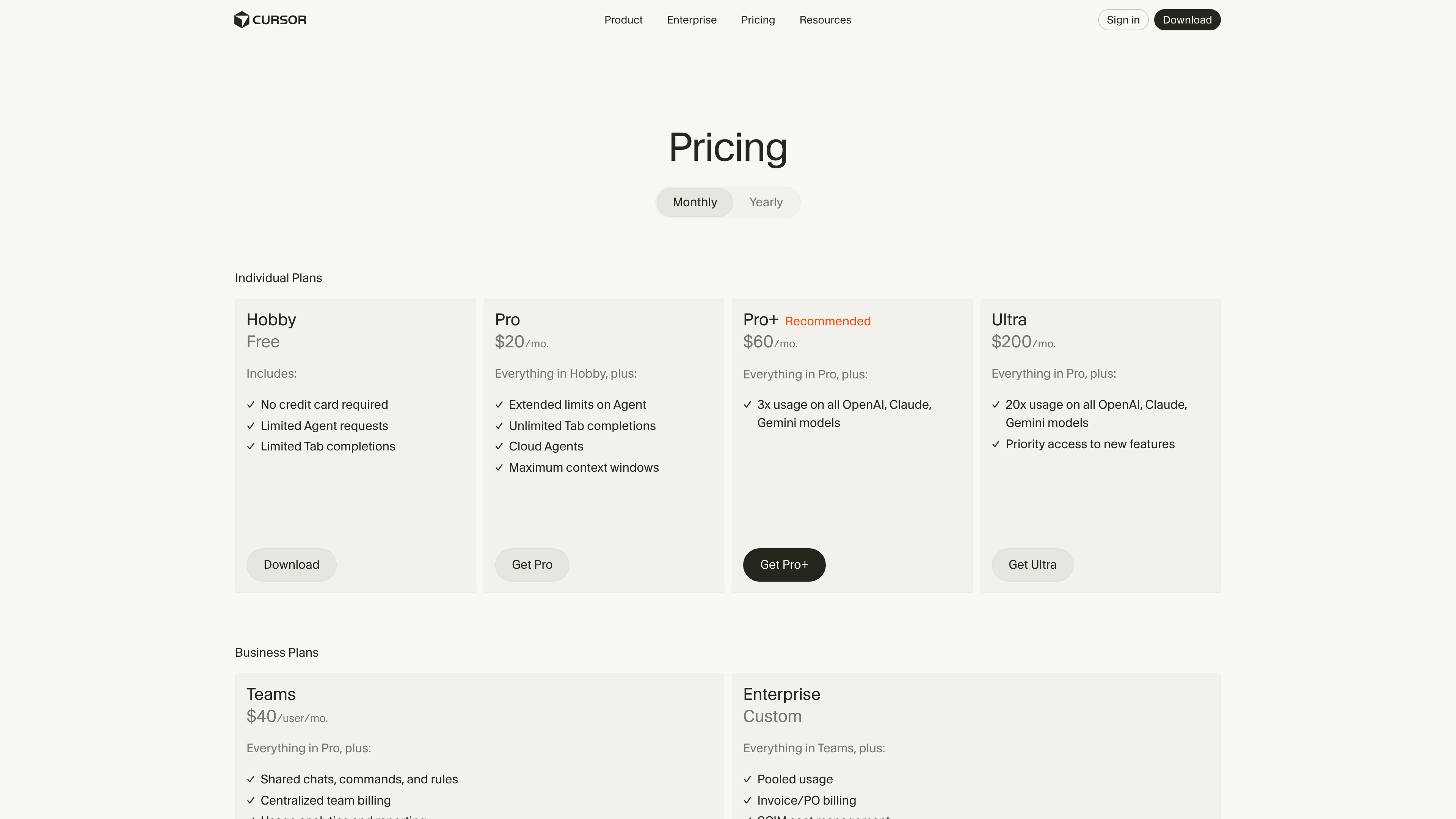

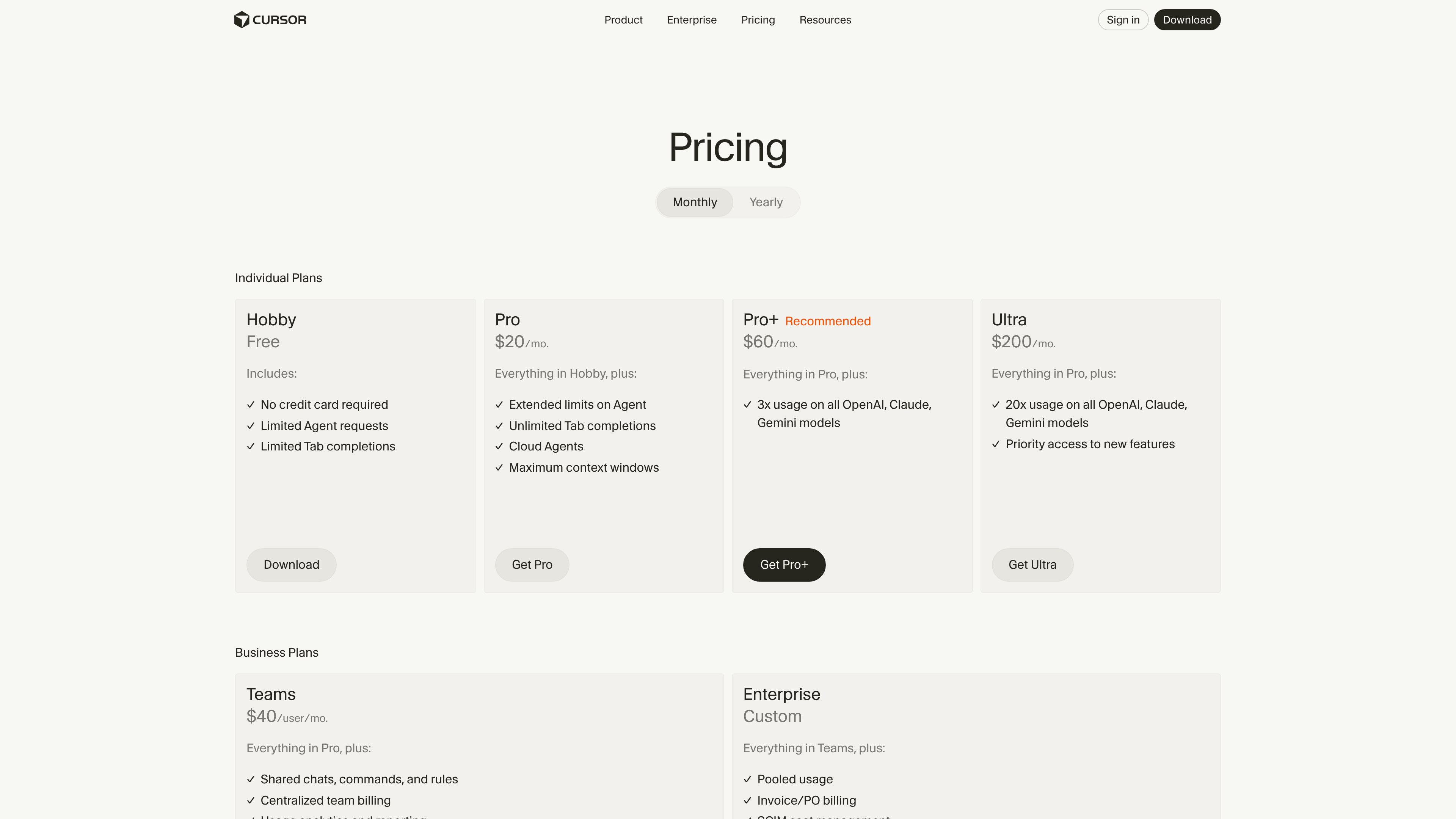

Pricing pages for tools such as Cursor and Windsurf make this visible through request limits, credits, and plan caps.

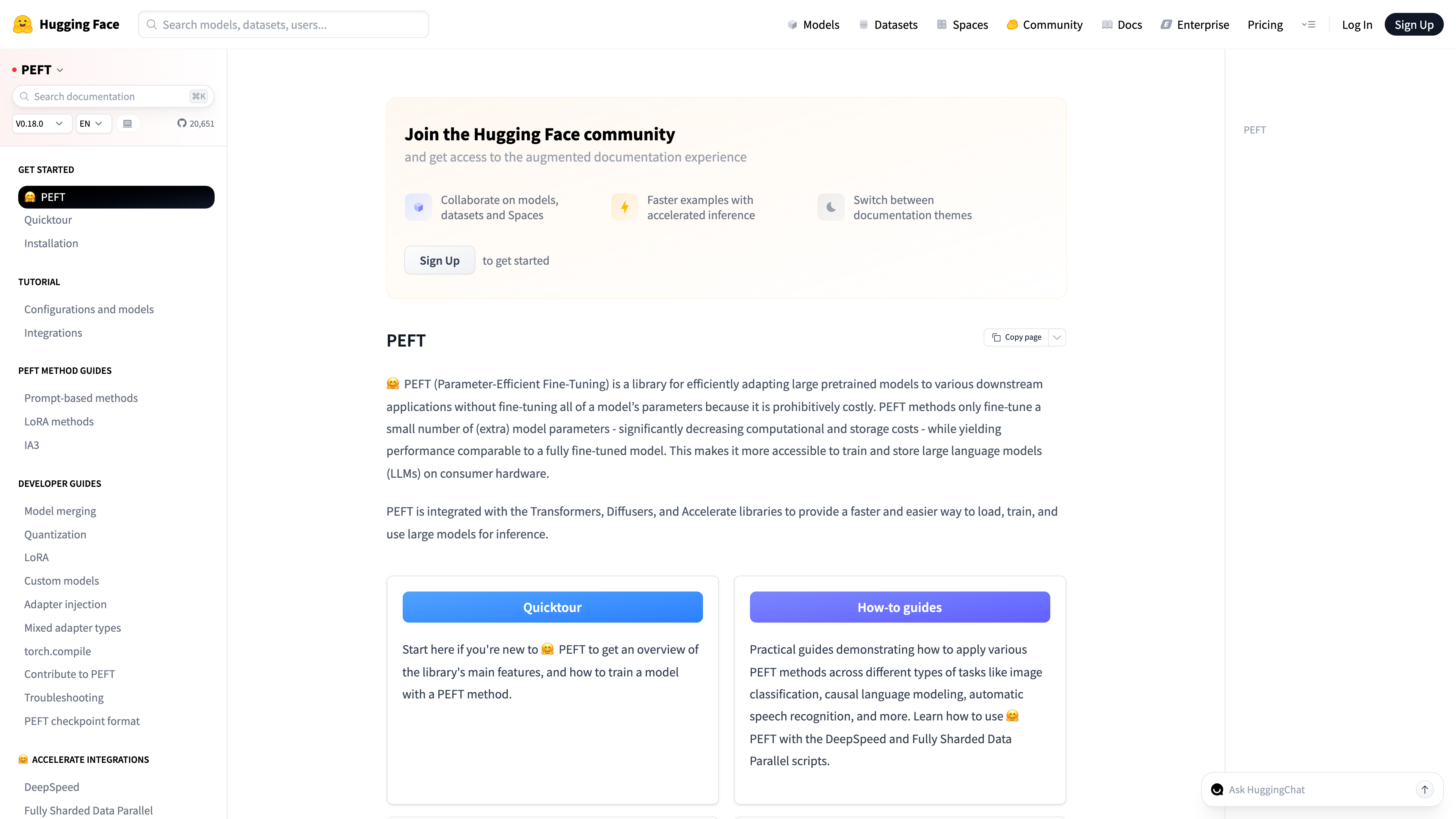

Embeddings

Quick Answer: Embeddings convert code and text into vectors so the system can retrieve semantically related context instead of relying only on keyword matching.

Think of embeddings as similarity coordinates. They allow tools to find conceptually related snippets even when identifiers differ.
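A common way to compare embeddings is cosine similarity. The sketch below uses hand-made three-dimensional vectors invented for illustration; real embedding models produce vectors with hundreds or thousands of dimensions, and the function names shown as keys are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; values near 1.0 mean similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors: the first two snippets share a concept but not an identifier.
vectors = {
    "def retry_request(...)": [0.9, 0.1, 0.0],
    "def attempt_again(...)": [0.8, 0.2, 0.1],
    "def render_invoice(...)": [0.0, 0.2, 0.9],
}

query = vectors["def retry_request(...)"]
ranked = sorted(
    vectors,
    key=lambda name: cosine_similarity(query, vectors[name]),
    reverse=True,
)
print(ranked)
```

The retry-related snippets rank next to each other despite having different names, while the invoice snippet lands last. Keyword matching alone would not make that connection.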

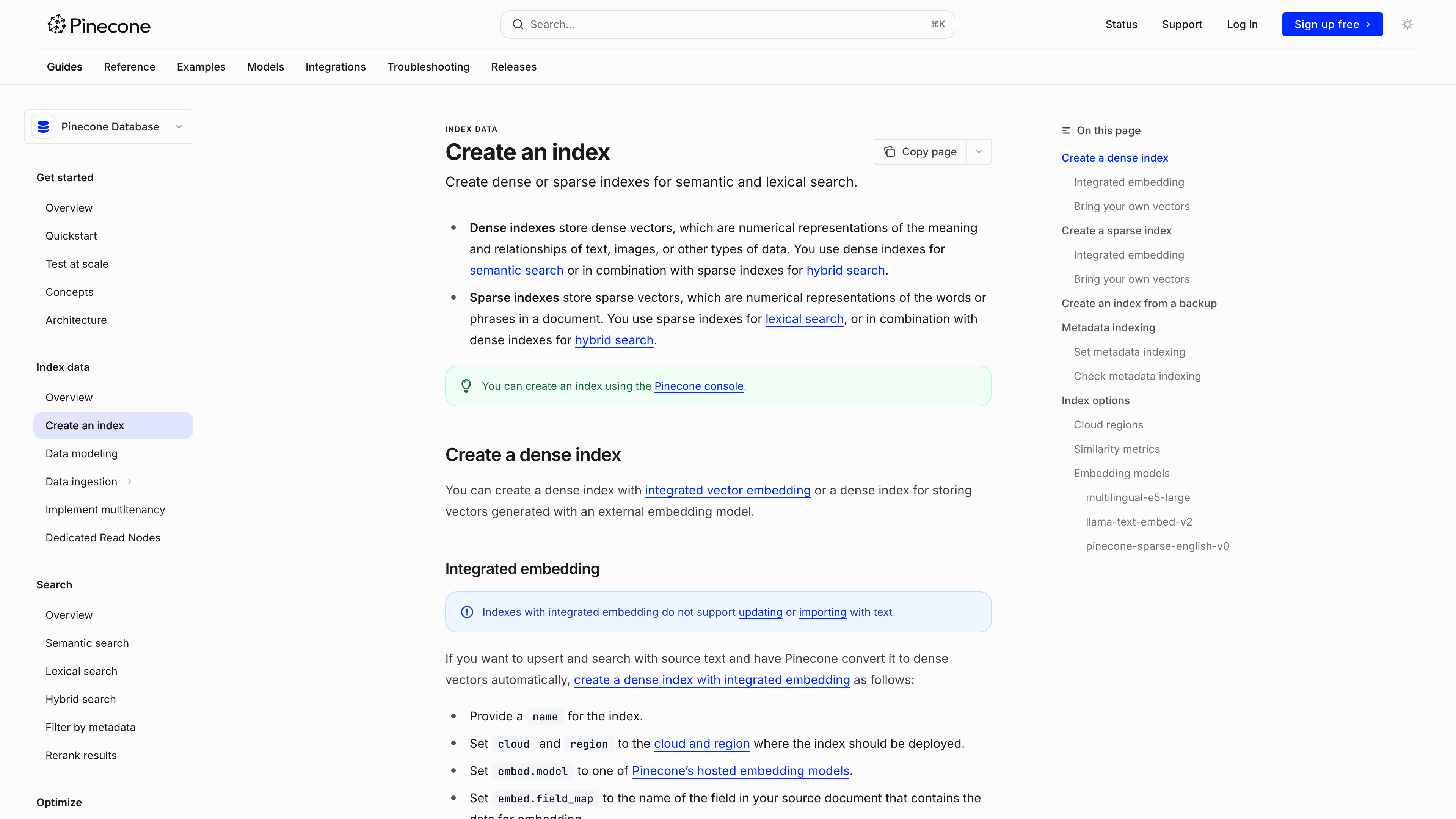

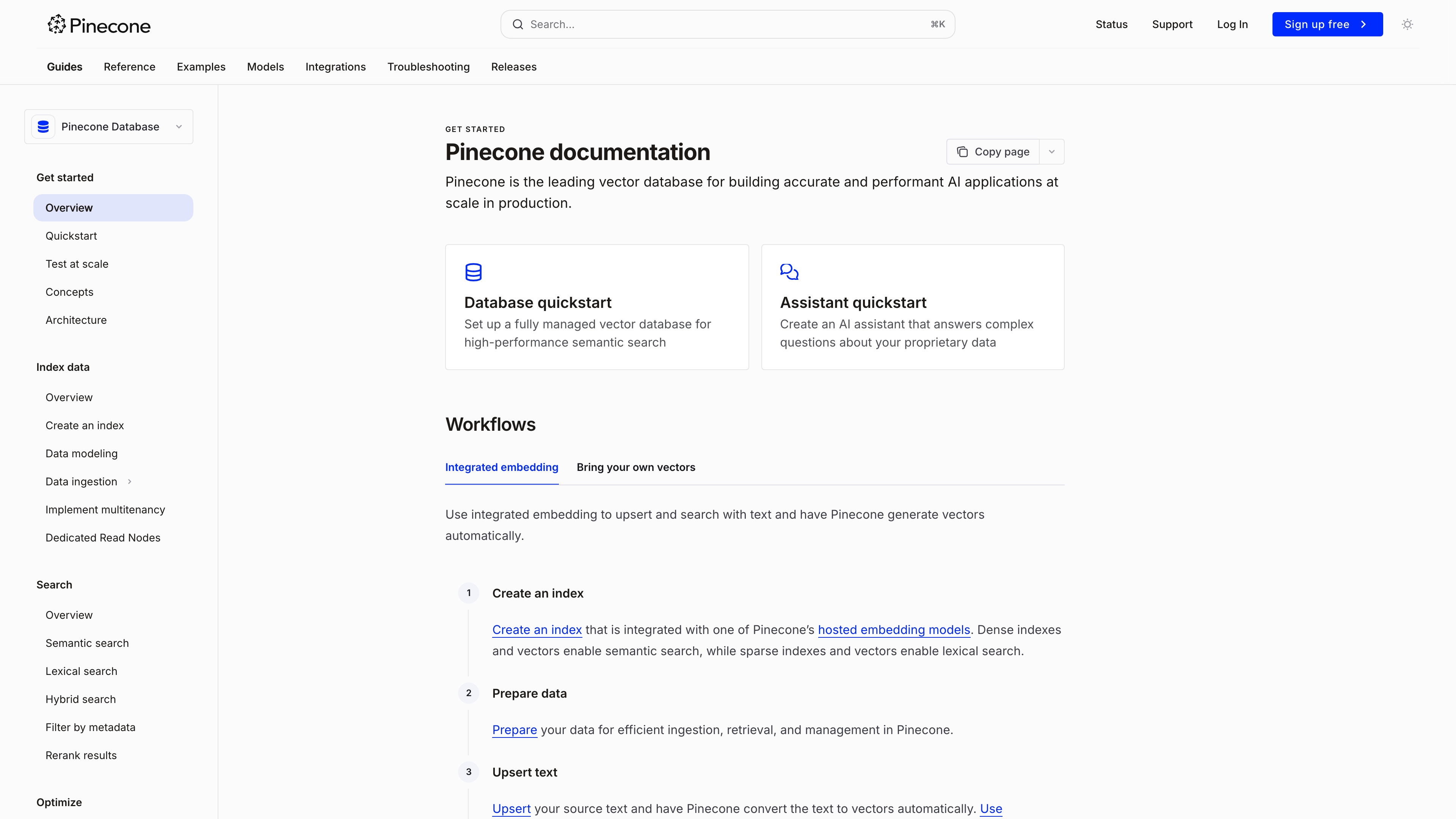

The Pinecone semantic search guide explains the kind of retrieval mechanics that many modern coding assistants rely on.

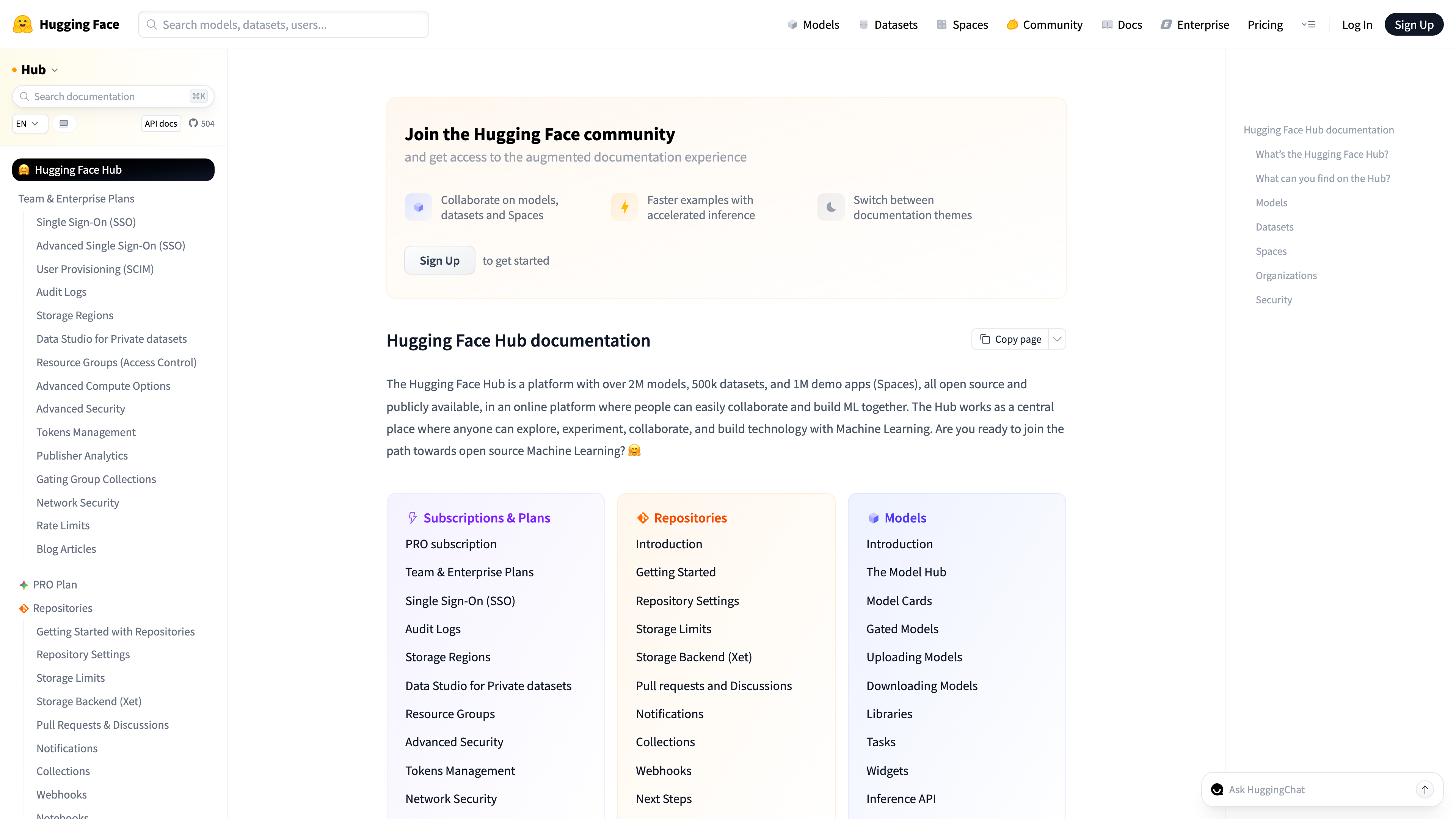

Retrieval-Augmented Generation (RAG)

Quick Answer: RAG (retrieval-augmented generation) combines document retrieval with generation so outputs are grounded in your repository and docs.

Think of RAG as giving the model a curated briefing before it writes. Without retrieval, an assistant has to guess at project-specific details; with retrieval, it can ground its output in local context.
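The retrieve-then-generate flow can be sketched end to end in a few functions. This is a toy under stated assumptions: word overlap stands in for real vector search, `fake_llm` is a placeholder rather than a real model call, and the repository notes are invented.

```python
def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for an embedding."""
    return set(text.lower().split())


def search(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by word overlap with the query (stand-in for vector search)."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(system_rules: str, context: list[str], query: str) -> str:
    """Assemble rules, retrieved context, and the task into one prompt string."""
    context_block = "\n".join(f"- {chunk}" for chunk in context)
    return f"{system_rules}\n\nContext:\n{context_block}\n\nTask: {query}"


def fake_llm(prompt: str) -> str:
    """Placeholder for a real model call; it only reports the prompt size."""
    return f"(model reply based on a {len(prompt)}-character prompt)"


# Hypothetical repository notes, invented for this example.
documents = [
    "payment endpoint creates a charge for each POST request",
    "idempotency keys are stored in the request_keys table",
    "the invoice renderer produces PDF output",
]
query = "add idempotency to payment endpoint"
context = search(query, documents, top_k=2)
prompt = build_prompt("Follow the repository conventions.", context, query)
print(prompt)
print(fake_llm(prompt))
```

Only the two payment-related notes reach the prompt; the unrelated invoice note is filtered out before generation.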

query = "add idempotency to payment endpoint"

context = vector_store.search(query, top_k=5)

response = llm.generate(system_rules + context + query)

Fine-Tuning vs Prompting

Quick Answer: Prompting is usually the fastest and cheapest control layer, while fine-tuning is heavier and only justified when repeated patterns cannot be solved through prompts and retrieval.

Think of prompting as runtime configuration and fine-tuning as model surgery. Most developer teams should exhaust prompt + retrieval tactics first.
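The "runtime configuration" idea can be illustrated with a reusable prompt template built on Python's standard `string.Template`. The template wording and field names are hypothetical; the point is only that constraints can change per request without touching the model.

```python
from string import Template

# A reusable template: constraints live in one place and can be edited freely.
REFACTOR_PROMPT = Template(
    "You are working in a $language codebase.\n"
    "Constraints: $constraints\n"
    "Task: $task\n"
    "Return only a unified diff."
)


def render_prompt(language: str, constraints: list[str], task: str) -> str:
    """Fill the template; substitute() raises KeyError if a field is missing."""
    return REFACTOR_PROMPT.substitute(
        language=language,
        constraints="; ".join(constraints),
        task=task,
    )


prompt = render_prompt(
    language="Python",
    constraints=["keep public function signatures", "no new dependencies"],
    task="add idempotency to payment endpoint",
)
print(prompt)
```

Changing a constraint here is a one-line edit that takes effect on the next request, whereas changing behavior through fine-tuning means preparing data and retraining.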

For immediate implementation, use prompt libraries in Best AI Prompts for Developers and tool-level comparisons in Cursor vs Copilot vs Codeium.

Verdict

Quick Answer: Teams that understand context limits, retrieval quality, and review gates get durable value from AI coding tools; teams that ignore these mechanics often get expensive noise.

The mechanics are not optional details. They are the operating model. If you train teams on these concepts, adoption quality improves fast.

Next, apply these mechanics directly with Best AI Prompts for Developers.

FAQ

Quick Answer: These are the practical questions developers ask before rolling an AI coding tool into real projects, teams, and delivery pipelines.

What is the most important technical concept to learn first?

Context management is usually the highest-leverage concept because it directly affects output quality.

Do I need fine-tuning for coding assistants?

Usually no. Prompt engineering plus retrieval often solves the majority of practical workflows.

Why do assistants hallucinate code?

They can generate plausible but incorrect output when context is incomplete or constraints are vague.

How do I reduce AI coding cost?

Use concise prompts, reusable templates, and targeted retrieval to reduce token waste.
