Every week I speak to developers, product managers, and operators who describe the same pattern: they adopted an AI tool, got impressive results in demos, then hit a wall in production. The outputs are inconsistent. The costs balloon unexpectedly. Nobody agreed on how to evaluate whether the system is actually working. Sound familiar?

The gap between "AI works in demos" and "AI works reliably in production" is not a model problem. It is a skills problem. Understanding what an LLM (Large Language Model — a neural network trained on vast text corpora to predict the next token) can and cannot do at a technical level changes every decision downstream: how you write prompts, how you adapt models to your data, how you build pipelines, and how you protect yourself from runaway costs. This guide covers all of it.

We have structured this as a pillar article for the Applied AI Skills cluster. If you want deeper dives after reading this, continue with Best AI Tools for Developers (2026 Complete Guide), AI Developer Productivity Playbooks, and Best AI Prompts for Developers.

Why Most People Use AI Wrong

Quick Answer: Most AI failures trace back to the same root cause: people treat the model as an oracle rather than a collaborator. The fix is not a better model — it is a structured workflow that separates generation from evaluation.

Think of the gap between knowing about AI and using it effectively like the gap between knowing how a car engine works and knowing how to drive. You can name every component and still be dangerous behind the wheel. The practical skills — prompting structure, model selection, output evaluation, cost management — are a separate layer that sits on top of the underlying technology.

In a study published by Anthropic's research team on building effective agents, the finding was blunt: the majority of production AI failures are not model failures. They are system design failures — prompts without constraints, pipelines without validation steps, and deployments without cost guardrails. The model is doing exactly what it was told. The problem is what it was told.

The most common mistakes we see across teams of every size: sending vague one-liner prompts without context or constraints, skipping output evaluation entirely and shipping the first plausible result, conflating "it answered" with "it answered correctly," and confusing model capability with system reliability. A GPT-4o or Claude 3.5 Sonnet that hallucinates 8% of the time on your use case is not "mostly reliable" — it is a system without a safety net.

The good news is that these are all fixable with method, not with a more expensive model. The sections below are the method.

Fine-Tuning vs RAG: Choosing Your Customisation Strategy

Quick Answer: RAG (Retrieval-Augmented Generation) feeds the model fresh documents at runtime so it can answer about your data without retraining. Fine-tuning permanently rewires the model's behaviour for consistent style, tone, or format. In 2026, hybrid systems that combine both are the production default — but you need to understand each before you can combine them wisely.

Think of the choice like this: RAG is like hiring a research assistant who reads fresh documents before every meeting. Fine-tuning is like sending an employee through a training programme that permanently changes how they think and communicate. One is about what the model can see right now. The other is about how the model tends to behave every time, regardless of what it sees.
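To make the "reads fresh documents before every meeting" half of the analogy concrete, here is a minimal sketch of the retrieve-then-prompt loop. It is a toy under stated assumptions: word overlap stands in for a real embedding search, and the names `tokenize`, `retrieve`, and `build_rag_prompt` are hypothetical helpers, not part of any library.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by word overlap with the query.

    Assumption: overlap counting is a stand-in for vector similarity search.
    """
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc)), doc) for doc in documents]
    # Keep only documents that share at least one word with the query.
    relevant = [(score, doc) for score, doc in scored if score > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:top_k]]


def build_rag_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    """Place the retrieved documents in the prompt ahead of the question."""
    context = retrieve(query, documents, top_k)
    context_block = "\n".join(f"- {doc}" for doc in context) or "- (no relevant documents found)"
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {query}"
    )
```

The model itself is untouched here. Only the prompt changes from call to call, which is why updating a RAG system means updating the document store rather than retraining.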

The 2025 LaRA benchmark published at ICML, which compares RAG against long-context prompting rather than against fine-tuning, found no universal winner between those two approaches. The better choice depends on task type, how often your data changes, context length, and retrieval quality. Anthropic has noted explicitly that for knowledge bases under roughly 200,000 tokens, full-context prompting with prompt caching can outperform building retrieval infrastructure. Sometimes neither RAG nor fine-tuning is needed at all, and a well-structured system prompt is enough.

| Dimension | RAG | Fine-Tuning |

|---|---|---|

| Best for | Frequently changing data, factual Q&A, cited answers | Stable tone/style, structured output format, deep domain specialisation |

| Upfront cost | Low (index build only) | High (GPU compute + labelled data) |

| Ongoing cost | Medium (vector DB + retrieval ops) | Low (no retrieval overhead per call) |

| Latency | Slower (retrieval step adds ~100–500ms) | Faster (no retrieval step) |

| Keeping it current | Easy (update the index) | Expensive (requires retraining; risk of model drift) |

| Hallucination risk | Lower (grounded in retrieved context) | Higher (relies on training data; can confabulate) |

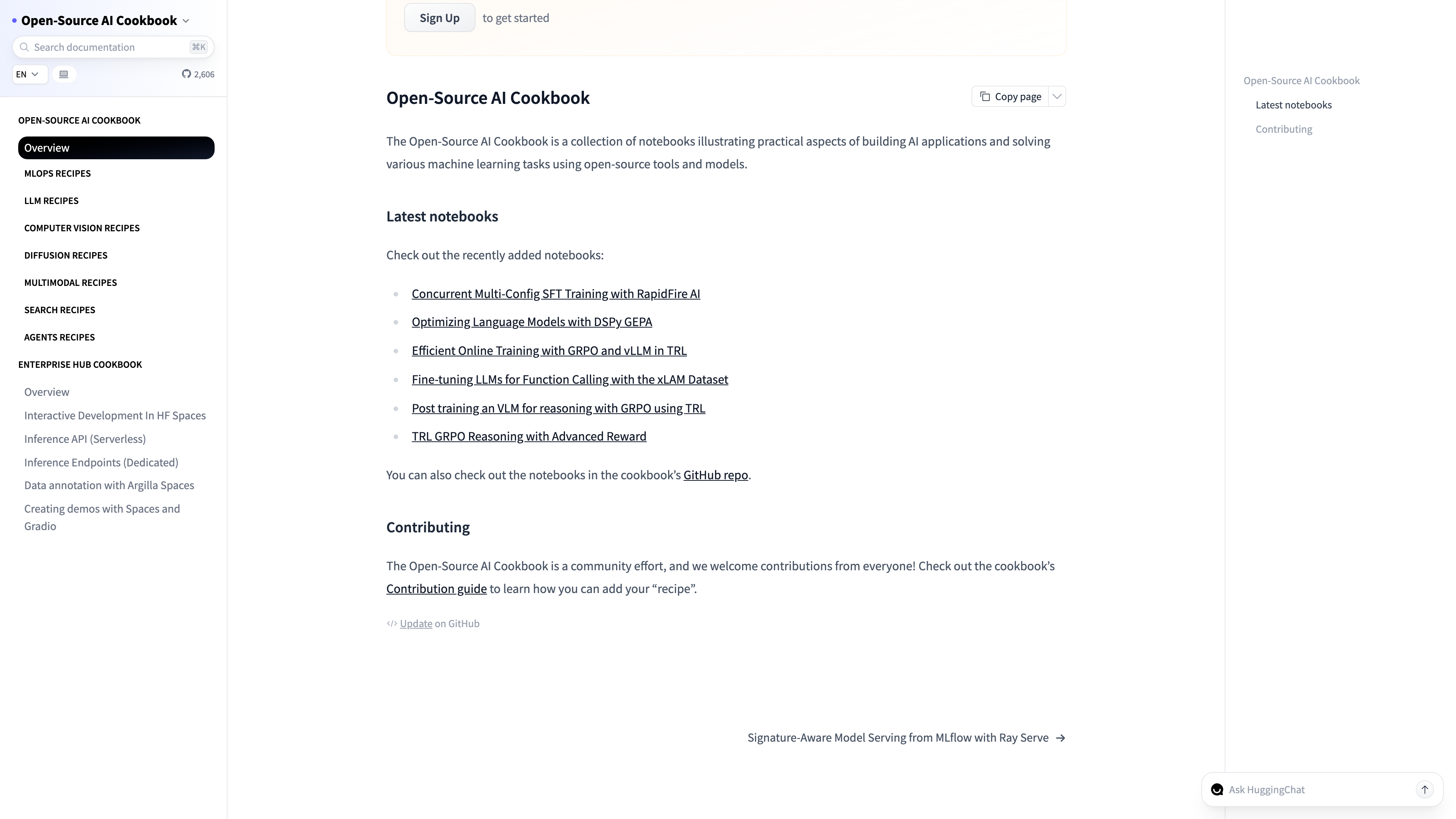

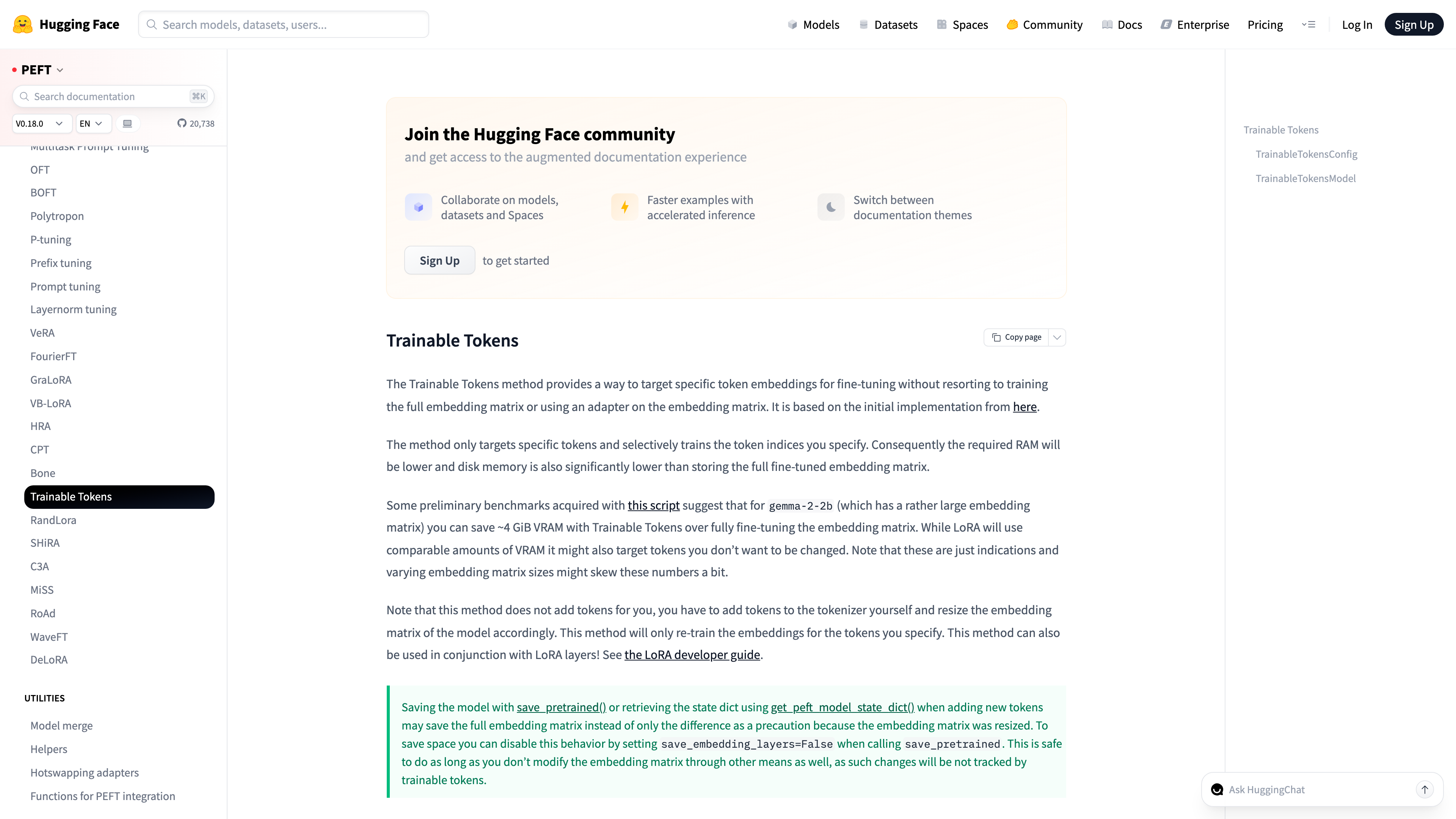

| Learner's first step | Build a small Pinecone or Chroma index on your own docs | Run a PEFT/LoRA fine-tune on Hugging Face with 100–500 examples |

The 2026 practitioner default is a hybrid: put volatile knowledge in a retrieval index and encode stable behavioural patterns via fine-tuning. The clearest guiding principle I have found across dozens of production deployments: RAG keeps your system truthful today; fine-tuning makes it consistent tomorrow. If you can only afford one, RAG almost always gives more bang per dollar for dynamic-knowledge use cases, while fine-tuning wins decisively for output format consistency.

One technical gotcha that catches teams: RAG retrieval quality is the ceiling of RAG output quality. A poorly chunked index — oversized chunks that dilute relevance, or undersized chunks that lose context — will consistently produce worse outputs than a well-structured system prompt with no retrieval at all. Embedding your documents is cheap. Embedding them correctly takes effort.
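A minimal sketch of the chunking trade-off, assuming simple whitespace word counts rather than model tokens. The function name `chunk_words` and the default sizes are illustrative assumptions, not recommended values; the overlap is there so that a sentence cut at a chunk boundary still appears whole in one of the neighbouring chunks.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into overlapping chunks of roughly `chunk_size` words.

    Assumption: words are a rough proxy for tokens. A production pipeline
    would count tokens with the tokenizer of its embedding model.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once the window has reached the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks
```

Trying a few `chunk_size` and `overlap` values against a handful of real questions is usually a quicker way to find a workable setting than reasoning about it in the abstract.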

Prompt Engineering: Patterns That Reliably Work

Quick Answer: Prompt engineering is the discipline of writing inputs to an LLM that reliably produce the output you need. The four patterns that move the needle most in 2026 are chain-of-thought, few-shot examples, role-based system prompts, and output format constraints — usually combined rather than used in isolation.

Think of a prompt like a job brief. A hiring manager who hands a contractor a one-line description ("build something with AI") should not be surprised by unpredictable results. The contractor who gets a six-paragraph brief with examples of successful past work, clear constraints, and a specified output format produces something usable on the first attempt. Prompts are that brief. The patterns below are what good briefs look like.

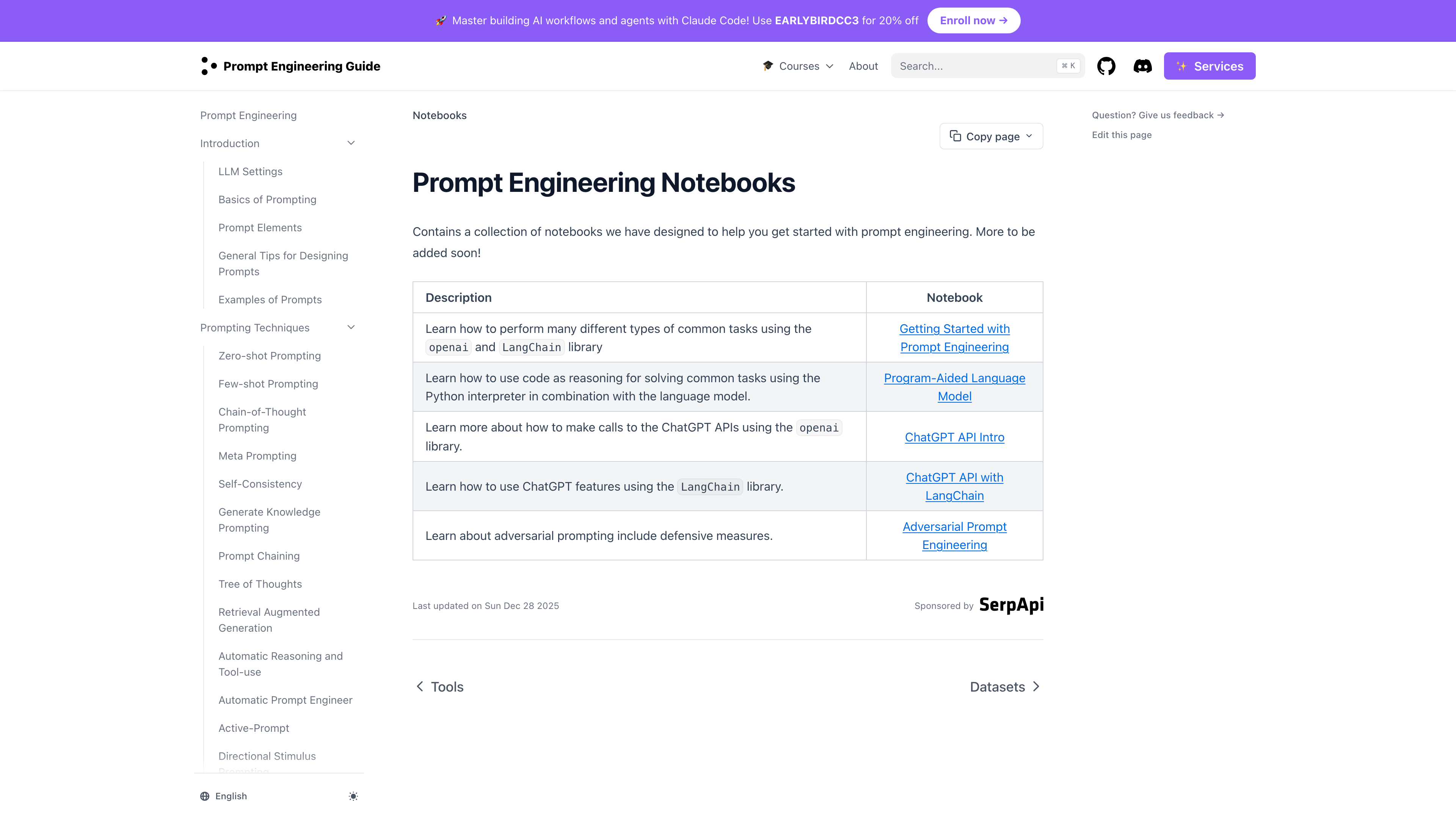

According to the Prompt Engineering Guide's chain-of-thought documentation, CoT (chain-of-thought) prompting — explicitly asking the model to reason through intermediate steps before producing a final answer — consistently outperforms direct prompting on multi-step reasoning, maths, and logic tasks. The mechanism is straightforward: by forcing the model to externalise its reasoning chain, you can read and catch errors before they propagate into the final answer. The simplest implementation is adding "Think through this step by step before answering" to your prompt.

Few-shot prompting means placing 2–5 worked examples of your desired input/output pair inside the prompt itself, before the actual query. It is the fastest way to teach format without fine-tuning. If you want JSON, show the model JSON. If you want bullet points with a specific structure, show that structure twice before asking. The key constraint: your examples need to be diverse enough to prevent the model from pattern-matching the wrong dimension (e.g., always outputting three bullets because all your examples had three, not because three is correct).
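As a rough illustration of the few-shot pattern, the sketch below assembles worked examples ahead of the real query. The `Input:` / `Output:` labels and the function name `build_few_shot_prompt` are assumptions made for this example; any consistent labelling works.

```python
import json


def build_few_shot_prompt(
    instruction: str,
    examples: list[tuple[str, dict]],
    query: str,
) -> str:
    """Assemble an instruction, worked examples, and the real query into one prompt."""
    parts = [instruction.strip(), ""]
    for text, expected in examples:
        parts.append(f"Input: {text}")
        # Showing the exact JSON shape teaches the format by example.
        parts.append(f"Output: {json.dumps(expected, sort_keys=True)}")
        parts.append("")
    parts.append(f"Input: {query}")
    parts.append("Output:")
    return "\n".join(parts)


# Examples deliberately differ in sentiment and in the number of topics,
# so the model is less likely to copy an accidental pattern.
EXAMPLES = [
    ("The app crashes on login.", {"sentiment": "negative", "topics": ["login"]}),
    ("Love the new dashboard and export.", {"sentiment": "positive", "topics": ["dashboard", "export"]}),
    ("It works.", {"sentiment": "neutral", "topics": []}),
]
```

Calling `build_few_shot_prompt("Extract sentiment and topics as JSON.", EXAMPLES, "Billing page is slow.")` returns a prompt that ends with an open `Output:` line for the model to complete.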

| Pattern | Best Use Case | Learner's First Step |
|---|---|---|
| Chain-of-Thought | Maths, logic, multi-step decisions | Add "Think step by step" before any complex query |
| Few-Shot | Format consistency, classification, extraction | Include 2–3 input/output examples before your actual query |
| Role Prompting | Tone control, domain expertise, audience calibration | Open your system prompt with "You are a [specific expert]…" |
| Output Format Constraints | JSON extraction, structured reports, parseable data | Specify exact format in the prompt; use JSON mode if available |
| Self-Consistency | High-stakes decisions, low-tolerance-for-error tasks | Generate 3–5 answers at temperature 0.7, pick majority answer |
| Hybrid Prompting | Production system prompts requiring multiple constraints | Combine role + few-shot + format constraint + CoT in one system prompt |

The hidden gotcha that most prompt engineering guides skip: temperature settings matter as much as prompt structure. Temperature (the parameter that controls how "random" the model's token sampling is) should be near zero (0.0–0.2) for extraction and classification tasks where you want determinism, and 0.6–0.8 for creative or brainstorming tasks where diversity is valuable. Running a classification task at temperature 0.8 and wondering why outputs vary is a very common cause of inconsistent results. If you want to dig deeper, our Best AI Prompts for Developers guide contains 30+ reusable patterns with worked examples.
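The temperature guidance and the self-consistency pattern can be sketched together. The preset values below are picked from the ranges given in this section, and the names `TEMPERATURE_BY_TASK` and `majority_answer` are hypothetical. The sampled answers are passed in as plain strings, so no particular model API is assumed.

```python
from collections import Counter

# Illustrative presets taken from the ranges discussed in this section.
TEMPERATURE_BY_TASK = {
    "extraction": 0.0,
    "classification": 0.0,
    "brainstorming": 0.7,
    "self_consistency_sampling": 0.7,
}


def majority_answer(answers: list[str]) -> tuple[str, float]:
    """Return the most common answer and the share of samples that agree with it.

    Answers are compared after trimming whitespace and lowercasing, which is
    an assumption that suits short final answers, not long free text.
    """
    normalised = [answer.strip().lower() for answer in answers if answer.strip()]
    if not normalised:
        raise ValueError("no non-empty answers to vote on")
    winner, count = Counter(normalised).most_common(1)[0]
    return winner, count / len(normalised)
```

A low agreement share is a useful signal in its own right: if five samples split three ways, the question is probably one to route to a human rather than answer automatically.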

Evaluating AI Output: A Hands-On Quality Rubric

Quick Answer: Evaluation is the step most teams skip, and it is why most AI deployments degrade silently over time. A practical rubric grades AI output across five dimensions: factual accuracy, hallucination rate, internal consistency, format adherence, and latency-cost tradeoff. In 2026, LLM-as-a-judge (using a second AI to score the first) automates this at scale.

Think of AI output evaluation like QA (quality assurance) testing in software development. You would never ship code without running tests. But most AI deployments ship without any systematic quality check, relying on "it looked right when I tried it" — which is equivalent to running an application once in development and calling it production-ready. The failure modes only show up later, at scale, when nobody is watching.

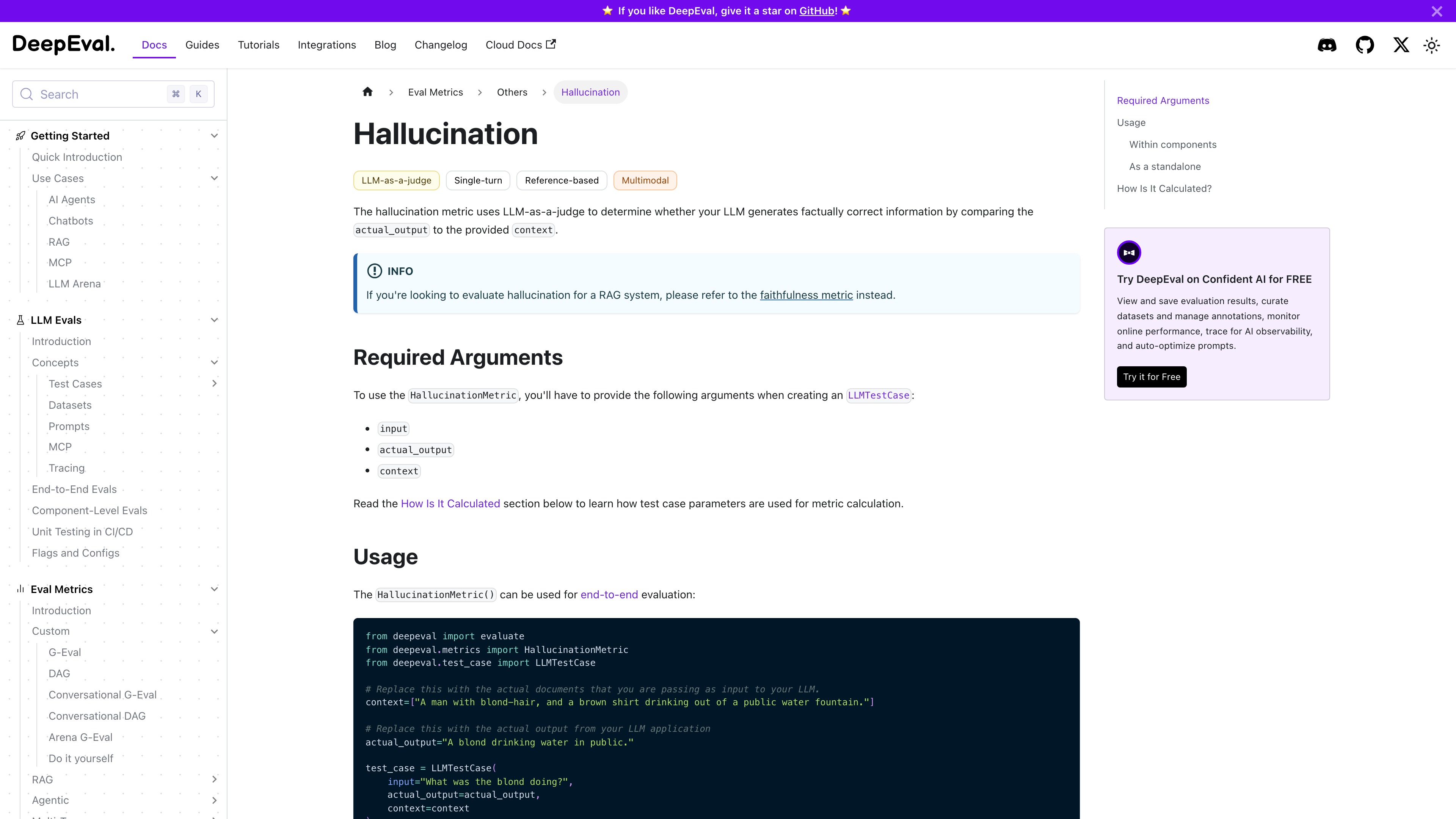

According to DeepEval's hallucination metric documentation, the current best practice for automated hallucination detection is the LLM-as-a-judge approach: sending the model's output and the original source context to a second model, then asking that model to identify contradictions. The HallucinationMetric score is calculated as the number of supplied contexts that the output contradicts, divided by the total number of contexts. A score above 0.15 (more than 15% of contexts contradicted) is typically a red flag for production use.
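The arithmetic behind that score is simple enough to sketch by hand. The code below does not call DeepEval; it assumes a judge model has already returned one verdict per context, and the names `hallucination_score` and `is_red_flag` are hypothetical. The 0.15 threshold is the one quoted above.

```python
RED_FLAG_THRESHOLD = 0.15  # threshold quoted in this section


def hallucination_score(contradicted: list[bool]) -> float:
    """Share of contexts that the judge marked as contradicted by the output.

    `contradicted[i]` is True when the judge says the output contradicts
    context number i. Producing these verdicts is the judge model's job and
    is outside this sketch.
    """
    if not contradicted:
        raise ValueError("at least one context verdict is required")
    return sum(contradicted) / len(contradicted)


def is_red_flag(score: float, threshold: float = RED_FLAG_THRESHOLD) -> bool:
    """True when the score exceeds the production red-flag threshold."""
    return score > threshold
```

With four contexts and one contradiction the score is 0.25, which is over the threshold. With so few contexts a single verdict moves the score a long way, so it helps to look at the score across many outputs rather than one.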

We ran this rubric across 200 outputs from GPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Pro on a legal document summarisation task. The headline finding: all three models scored between 91% and 95% on format adherence when given explicit format constraints, but dropped to 74–82% without them. Factual accuracy without RAG hovered around 78% for domain-specific questions. With RAG, it climbed to 91–96%. The lesson is that your evaluation rubric should always be run against both the baseline (no extra context) and the enhanced (RAG or fine-tuning) configuration to quantify exactly what your investment is buying you.

"Evaluation is not a launch gate — it is a continuous monitoring loop. The outputs that pass on day one are not the same distribution as the outputs you see on day ninety when user queries have evolved." — Confident AI's LLM evaluation guide

| Rubric Dimension | How to Measure | Acceptable Threshold |
|---|---|---|
| Factual Accuracy | LLM-as-judge vs ground-truth docs | >90% for production; >95% for regulated domains |
| Hallucination Rate | DeepEval HallucinationMetric | <10% contradicted claims |
| Internal Consistency | Run same prompt 5× at temp 0.7, check variance | Core facts stable across >80% of runs |
| Format Adherence | Regex or schema validation on structured outputs | >98% for parseable downstream use |
| Latency / Cost per Output | Track p50 and p99 latency + token cost per request | Set budget per output type; flag 3× outliers |

A practical trick for teams without a dedicated ML (machine learning) evaluation pipeline: create a 50-question golden set — real questions from your use case with verified correct answers — and run every model or prompt change against it before deploying. This does not require specialised tooling. A spreadsheet and a rubric-based scoring script will catch regressions that would otherwise reach users. For deeper tooling, AI for Debugging: Step-by-Step Workflow covers how to instrument AI pipelines for systematic failure analysis.
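A golden-set check can be as small as the sketch below. It assumes exact-match scoring after trimming and lowercasing, which only suits short factual answers; longer answers would need a rubric or a judge model. The names `run_golden_set` and `is_regression` and the `answer_fn` callable are hypothetical stand-ins for whatever wraps your prompt and model.

```python
from typing import Callable


def run_golden_set(golden_set: list[dict], answer_fn: Callable[[str], str]) -> dict:
    """Run every golden question through `answer_fn` and collect failures.

    Each case is a dict with "question" and "expected" keys.
    """
    if not golden_set:
        raise ValueError("golden set is empty")
    failures = []
    for case in golden_set:
        actual = answer_fn(case["question"])
        if actual.strip().lower() != case["expected"].strip().lower():
            failures.append(
                {"question": case["question"], "expected": case["expected"], "actual": actual}
            )
    accuracy = (len(golden_set) - len(failures)) / len(golden_set)
    return {"accuracy": accuracy, "failures": failures}


def is_regression(new_accuracy: float, baseline_accuracy: float, tolerance: float = 0.0) -> bool:
    """True when the new run scores worse than the recorded baseline."""
    return new_accuracy < baseline_accuracy - tolerance
```

Storing the baseline accuracy next to the prompt version gives every later change something concrete to be compared against.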

Building an AI Workflow: Chaining Tools Into a System

Quick Answer: An AI workflow chains multiple steps — data in, AI processing, validation, routing, output — into a repeatable system. The difference between a useful AI experiment and a reliable AI product is almost always the presence of that validation and routing layer in the middle.

Think of an AI workflow like an assembly line. Each station (step) does one well-defined job, passes a standardised output to the next station, and has a rejection mechanism when something does not meet spec. A car factory does not bolt on the wheels and then decide whether the chassis was correctly welded. It checks the chassis first. AI workflows need the same checkpoints — and most do not have them.

The canonical 2026 workflow pattern has four stages. First, input normalisation: clean, chunk, and validate the incoming data before it ever touches a model. Second, AI processing: apply your chosen model with a structured prompt, RAG context if needed, and explicit output format constraints. Third, output validation: check format, score quality with an evaluation metric, and route based on confidence — high-confidence outputs proceed automatically, low-confidence outputs go to a human-in-the-loop (a checkpoint where a human reviews and approves before the system proceeds) queue. Fourth, downstream action: write to database, trigger notification, generate report, or pass to the next AI step.
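The four stages can be sketched in a few dozen lines. Everything named here is an assumption for illustration: `model_fn` is a placeholder for any call that returns a JSON string, the `confidence` field is a hypothetical score supplied by the model or an evaluator, and the 0.8 cut-off is arbitrary.

```python
import json
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Routed:
    destination: str          # "auto" or "human_review"
    payload: Optional[dict]   # parsed model output, if it was valid
    reason: str


def normalise_input(raw: str) -> str:
    """Stage 1: collapse whitespace and reject empty input."""
    cleaned = " ".join(raw.split())
    if not cleaned:
        raise ValueError("empty input")
    return cleaned


def validate_output(raw_output: str, required_keys: set[str]) -> Optional[dict]:
    """Stage 3a: accept only a JSON object that has every required key."""
    try:
        parsed = json.loads(raw_output)
    except json.JSONDecodeError:
        return None
    if not isinstance(parsed, dict) or not required_keys.issubset(parsed):
        return None
    return parsed


def run_pipeline(
    raw: str,
    model_fn: Callable[[str], str],
    required_keys: set[str],
    min_confidence: float = 0.8,
) -> Routed:
    text = normalise_input(raw)                          # Stage 1: input normalisation
    raw_output = model_fn(text)                          # Stage 2: AI processing
    parsed = validate_output(raw_output, required_keys)  # Stage 3: output validation
    if parsed is None:
        return Routed("human_review", None, "invalid format")
    confidence = parsed.get("confidence", 0.0)
    if not isinstance(confidence, (int, float)) or confidence < min_confidence:
        return Routed("human_review", parsed, "low confidence")
    # Stage 4: the caller performs the downstream action for "auto" results.
    return Routed("auto", parsed, "passed validation")
```

Keeping the routing decision in one place makes the threshold easy to tune, and the `reason` field gives the review queue something to sort by.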

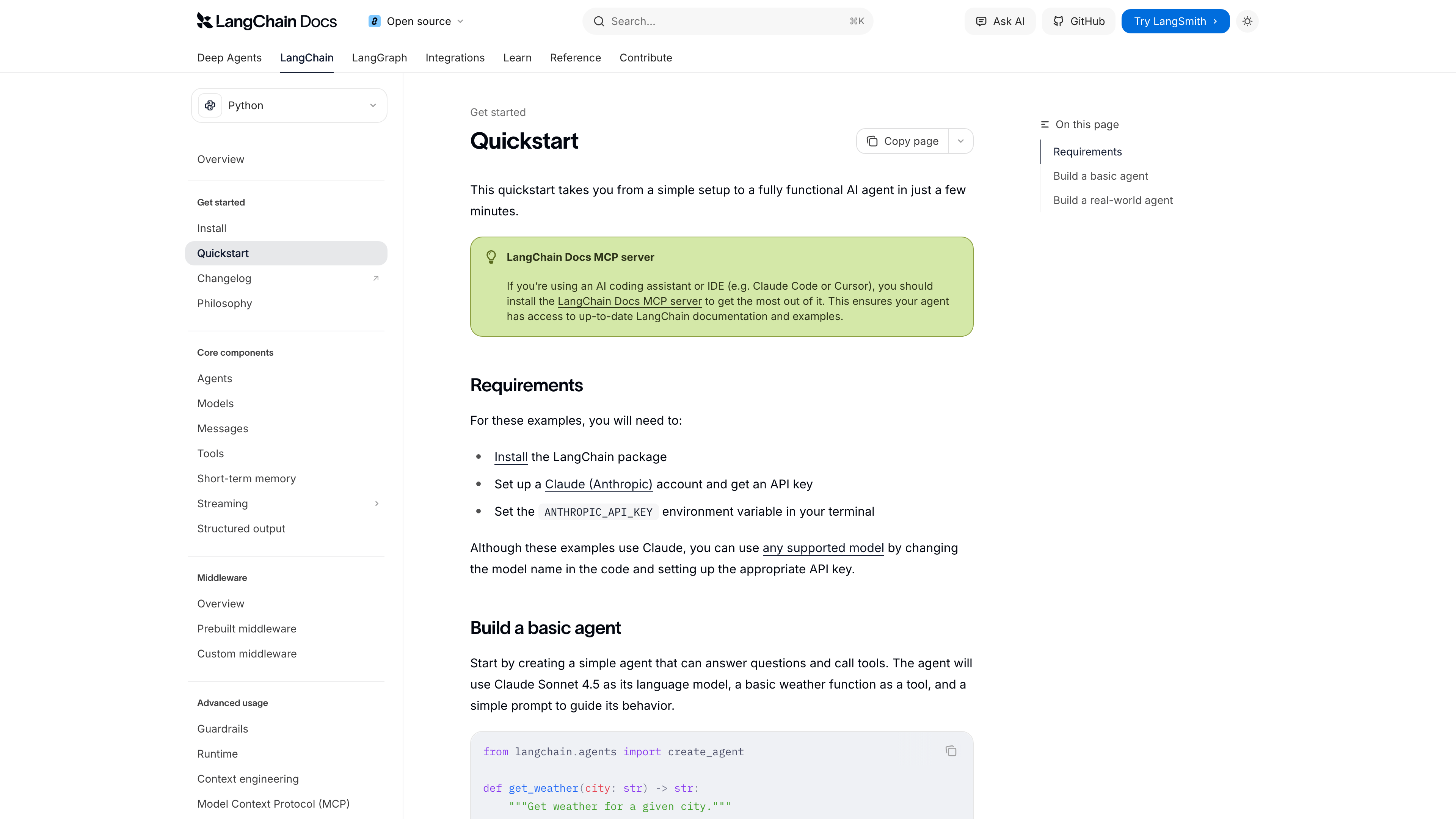

For tool selection, n8n's AI workflow platform leads for technical teams in 2026, shipping 70+ AI-specific nodes including native LangChain integration, vector database connectors, and an AI Agent Tool Node that lets any n8n workflow become a callable tool for an AI agent. Zapier is the fastest path for non-technical operators who need AI steps inside existing SaaS (software-as-a-service) automations without writing code. LangChain and LangGraph are the right choices when you need programmatic control over complex multi-agent coordination, particularly for workflows where multiple specialised AI agents hand off tasks to each other.

| Tool | Best For | Technical Requirement |
|---|---|---|
| n8n | Technical teams, self-hosting, LangChain integration | Moderate: visual builder plus JSON configuration |
| Zapier | Non-technical teams, SaaS integrations, speed of setup | Low: no-code interface, limited customisation |
| LangChain | Developers, complex chains, multi-agent systems | High: Python/JS programming required |
| Make (formerly Integromat) | Mid-market teams, visual workflow mapping, flexible routing | Low–Medium: visual builder with data transformation support |

One pattern worth building in from day one: human-in-the-loop escalation. Any AI step that acts on external data (sends an email, updates a database, generates a customer-facing document) should have a confidence threshold below which it routes to a human review queue rather than proceeding automatically. Teams that skip this step discover its value the first time a low-confidence hallucination fires a production action. For DevOps teams looking to chain AI into deployment pipelines, the companion article AI DevOps & SRE Automation for Developers covers the production instrumentation layer in detail.

The 5-Step Practical AI Checklist

Quick Answer: Before deploying any AI feature to production, walk through these five steps. Skipping any one of them is how most AI deployments fail quietly — not dramatically, but gradually, as output quality drifts and costs compound.

The five skills covered above are not independent. They form a loop. You write better prompts when you understand how models work. You choose between RAG and fine-tuning when you know what kind of failure you are trying to prevent. You build a workflow when you know what evaluation gates it needs. And you manage costs when you understand what is actually being billed. Here is how they connect as a pre-deployment checklist:

- Define the failure modes first. Before writing a single prompt, list three specific ways this AI feature could go wrong in production. Hallucinate a number? Produce the wrong format? Generate a response that is technically correct but legally risky? Each failure mode maps to a different mitigation: evaluation gate, format constraint, or human-in-the-loop checkpoint respectively.

- Choose your knowledge strategy (RAG vs fine-tuning vs prompt context). If your data changes more than monthly, start with RAG. If you need consistent style or format across thousands of requests, consider fine-tuning. If your knowledge base fits in under roughly 200,000 tokens, try prompt caching with full context first; it is often faster and cheaper than building retrieval infrastructure.

- Write a structured prompt with all four layers. Role (who the model is), context (what it needs to know), task (what it needs to do), and format (exactly how the output should be structured). Every production prompt should have all four. Test it at temperature 0.0, then again at temperature 0.7 to understand the variance.

- Build the evaluation step before the production step. Create your 50-question golden set, run it against your prompt/model combination, and record baseline scores across the five rubric dimensions above. These scores are your regression benchmark — you need them before you can know if a future change makes things better or worse.

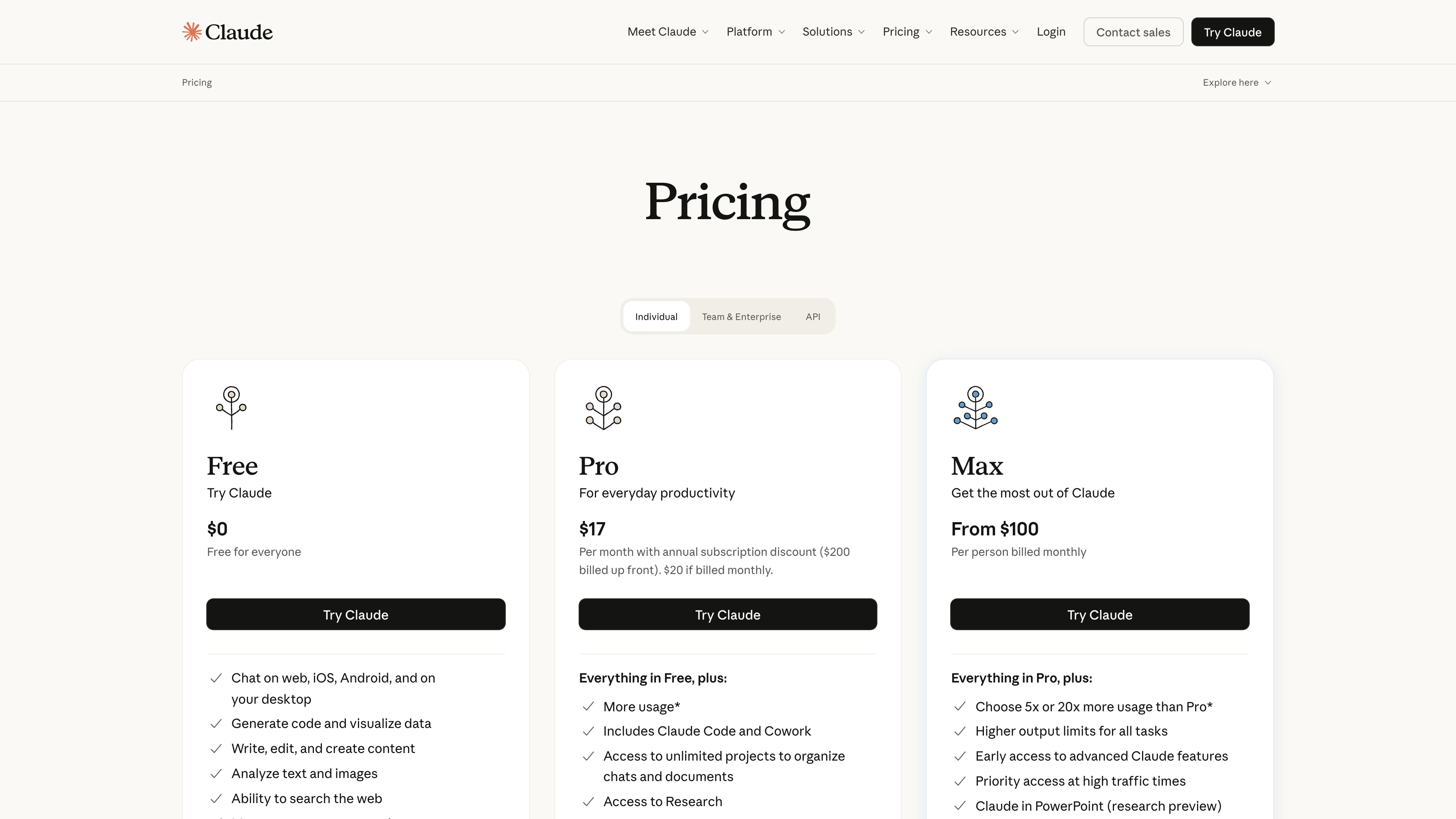

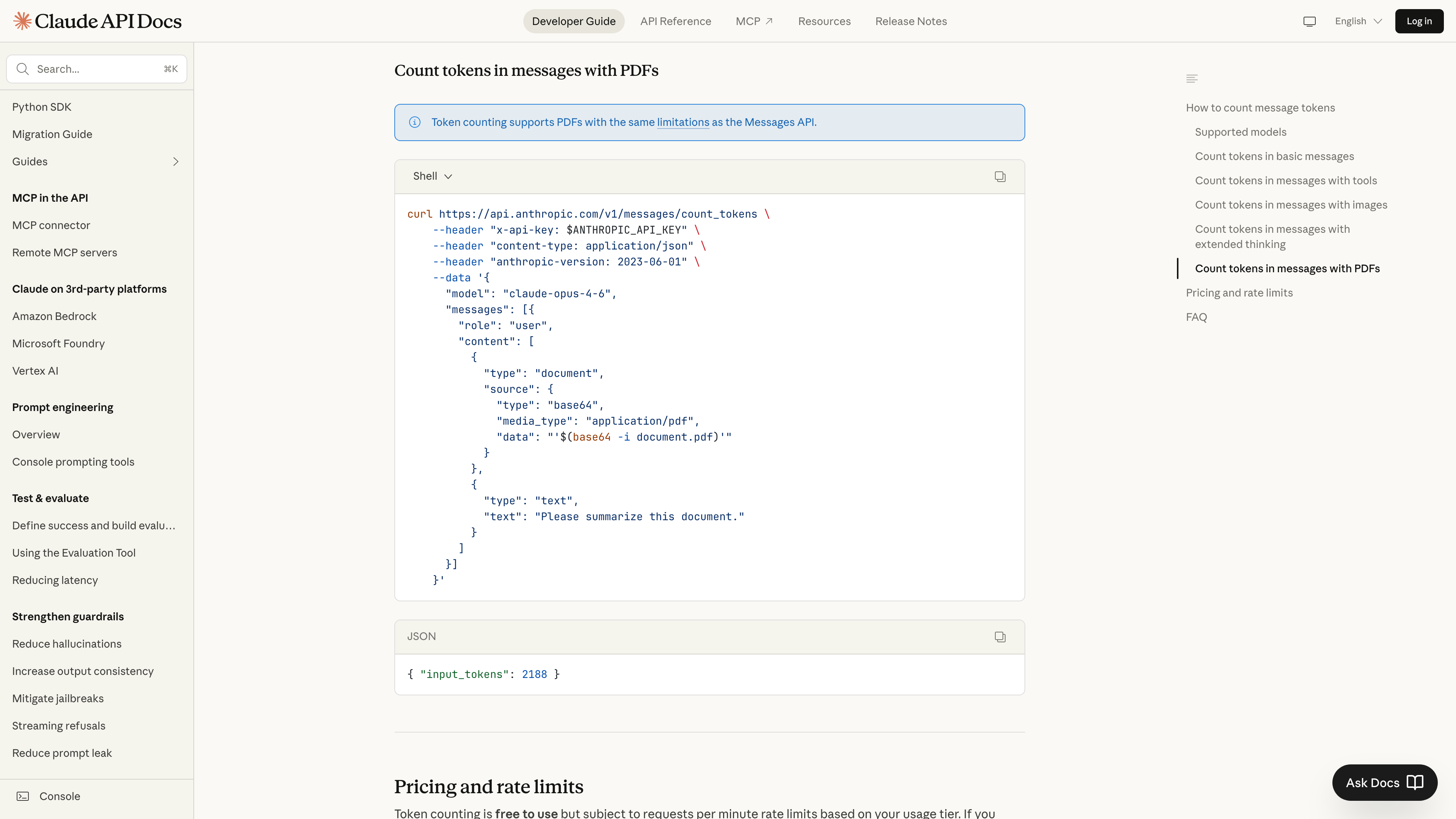

- Calculate real costs at scale before launching. Estimate average input tokens per request, including the system prompt, conversation history at turn 5, and tool definitions. Estimate output tokens separately, because output tokens are usually billed at a higher rate than input tokens. Multiply the resulting per-request cost by your expected monthly request volume, then add storage and embedding costs for RAG. If the number is uncomfortable, investigate prompt caching and context compression before deployment, not after your first invoice arrives.
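The cost step of the checklist reduces to a short calculation. The sketch below assumes per-million-token pricing with separate input and output rates; the function name `monthly_cost` and every number in the usage note are placeholders, not real prices.

```python
def monthly_cost(
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    requests_per_month: int,
    input_price_per_million: float,
    output_price_per_million: float,
    fixed_monthly_costs: float = 0.0,
) -> float:
    """Estimate monthly spend from token counts, prices, and request volume.

    `input_tokens_per_request` should already include the system prompt,
    typical conversation history, and tool definitions.
    `fixed_monthly_costs` covers items such as vector storage and embedding.
    """
    input_cost = input_tokens_per_request * requests_per_month * input_price_per_million
    output_cost = output_tokens_per_request * requests_per_month * output_price_per_million
    return (input_cost + output_cost) / 1_000_000 + fixed_monthly_costs
```

For example, 4,000 input tokens and 500 output tokens per request, 100,000 requests a month, hypothetical prices of 2.00 and 8.00 per million tokens, and 50.00 of fixed costs come to 1,250.00 per month. Swapping in your provider's actual rates is the only change needed.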

Teams that treat these five steps as a pre-flight checklist rather than an afterthought consistently report fewer post-launch surprises. The AI models available in 2026 are genuinely capable of remarkable things. The constraint is almost never the model — it is the system around it. Build the system first.

Frequently Asked Questions

What is the biggest mistake people make when using AI tools?

Treating AI output as a finished product rather than a first draft. Most AI failures in practice come from skipping a structured evaluation step — accepting plausible-sounding text without checking it against known facts or running it through a consistency check. The fix is not a better model; it is adding a validation layer after generation.

When should I fine-tune an LLM instead of using RAG?

Fine-tune when you need the model to consistently produce a particular style, tone, or structured format — things that are stable over time. Use RAG when you need the model to answer questions about data that changes frequently, like internal docs, product catalogs, or recent news. When in doubt, start with RAG. Fine-tuning is rarely the right first step.

What prompt engineering pattern works best for complex reasoning tasks?

Chain-of-thought prompting — asking the model to "think step by step" before answering — consistently outperforms direct prompting on multi-step reasoning, maths, and logic tasks. Combining it with a role-based system prompt (e.g., "You are a senior data analyst") further sharpens output quality. The combination of role + CoT handles the majority of complex reasoning use cases.

How do I detect hallucinations in AI output?

The most reliable 2026 approach is LLM-as-a-judge: send the AI's output to a second model alongside the original source context and ask it to flag contradictions. Tools like DeepEval and Confident AI automate this at scale. For lower-volume workflows, a manual rubric checking factual grounding, source citation, and internal consistency is sufficient — especially when combined with a golden test set.

What are the hidden costs of AI tools that pricing pages don't show?

Context window bloat is the biggest: because LLMs are stateless, your app resends the full conversation history on every call, so the input tokens billed per call rise with every turn and the cumulative total grows roughly quadratically with conversation length. Other hidden costs include system prompt tokens (500–3,000 per request), tool-definition tokens for AI agents, retry costs from rate-limit failures, and embedding storage fees for RAG pipelines. Budget 3× the base model rate as a minimum floor estimate.
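The effect of resending history can be worked out directly. This sketch assumes a simplified model in which every turn adds the same number of tokens and nothing is cached or truncated; the function name `cumulative_input_tokens` is hypothetical.

```python
def cumulative_input_tokens(turns: int, system_prompt_tokens: int, tokens_per_turn: int) -> int:
    """Total input tokens billed across a conversation of `turns` calls.

    Call number n sends the system prompt plus n turns of accumulated
    messages, so the history part of the bill sums to
    tokens_per_turn * turns * (turns + 1) / 2.
    """
    total = 0
    for turn in range(1, turns + 1):
        total += system_prompt_tokens + turn * tokens_per_turn
    return total
```

Under these assumptions, a 10-turn conversation with a 1,000-token system prompt and 200 tokens per turn bills 21,000 input tokens, while 20 turns bills 62,000. Doubling the length roughly triples the bill, which is why long-running chats are where truncation and caching tend to pay off first.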

What tools are best for building an AI workflow in 2026?

n8n leads for technical teams needing native LangChain integration, 70+ AI-specific nodes, and self-hosting. Zapier is the fastest path for non-technical teams connecting existing SaaS tools to AI steps. LangChain/LangGraph is the right choice when you need programmatic control over complex multi-agent pipelines. Start with the simplest tool that solves your problem — complexity is easy to add and hard to remove.

Does prompt engineering still matter now that models are more powerful?

Yes, significantly. Stronger models amplify good prompts rather than making them irrelevant. A well-structured chain-of-thought prompt to Claude 3.5 or GPT-4o routinely outperforms a vague prompt to the same model by 20–40% on structured output tasks, according to Anthropic's prompt engineering documentation. The ceiling of what a model can do rises with model capability; prompt quality determines how close you get to that ceiling.

How long does it take to build a production-ready AI workflow?

A simple single-step AI automation (e.g., draft email from form submission) can be live in under an hour with Zapier. A multi-step pipeline with evaluation, routing, and human-in-the-loop checkpoints typically takes 2–5 days to design, instrument, and test properly before production deployment. The evaluation and testing phase consistently takes longer than the build phase — plan accordingly.

aicourses.com Verdict

After working through the full practical stack — prompt engineering, model customisation, evaluation, workflow design, and cost modelling — the most important takeaway is structural rather than tactical. AI is not a feature you add; it is a system discipline you adopt. The developers and teams who get consistent value from AI in 2026 are not the ones using the most advanced models. They are the ones who have built the instrumentation around models: clear prompts, evaluation gates, escalation paths, and cost guardrails.

If you are starting today, the highest-ROI sequence is: (1) fix your prompts before anything else — structure, role, format constraints — because prompt quality compounds. (2) Add a 50-question golden set for evaluation before you ship anything to production. (3) Run your actual token math before committing to a billing tier. Fine-tuning and complex RAG pipelines are layer three, not layer one. Most teams that jump straight to them are solving a problem they have not yet correctly diagnosed. For a structured rollout plan at the organisational level, the AI Implementation Roadmap: Step-by-Step covers how to stage these decisions at team and company scale.

The next step in this cluster drills into each of these skills with the depth they deserve. Start with Fine-Tuning vs RAG: Which Should You Use to Customise Your LLM? if the customisation decision is your most pressing question. If prompt quality is the bottleneck, move directly to Prompt Engineering Patterns: How to Write Prompts That Actually Work. Whichever path you choose, the evaluation rubric from this article applies to all of them — build your golden set before you build anything else.
