The most common AI failure pattern I see in 2026 is not a model that refuses to answer or generates obvious nonsense. It is a model that generates plausible-sounding answers that are quietly, occasionally wrong — and nobody has a systematic process to catch them. The hallucination rate is 8%. The schema compliance rate is 91%. Nobody measured either number. The system has been in production for four months. Users have been making decisions based on wrong data.

Evaluation is the step that separates AI experiments from AI products. It is not glamorous. It does not involve picking a better model or writing more elegant prompts. It involves defining what "correct" means for your use case, building a dataset of test cases that represent your real workload, and running a structured quality check that produces a measurable score you can track over time. This article gives you the exact rubric, the golden test set methodology, and the tooling options to do it — whether you have a full MLOps (machine learning operations) stack or just a spreadsheet.

This is a supporting article in the Applied AI Skills cluster. For context on the full practical stack, start with How to Actually Use AI: The Practical Guide (2026). For the techniques that generate the outputs you'll be evaluating, see Prompt Engineering Patterns and Fine-Tuning vs RAG.

Why Evaluation Is Not Optional

Quick Answer: Without evaluation, you have no baseline to compare against when something changes. You cannot prove the system is working. You cannot detect when it stops working. And you cannot justify any investment in improvement — because you have no number to improve against. Evaluation is the scaffolding that makes everything else in AI engineering defensible.

Think of AI evaluation like the automated test suite in a software project. Most developers would not deploy code without running tests. Yet most AI deployments ship without any equivalent quality gate — relying instead on "it looked right when we tried it," which is the cognitive equivalent of running your application once in development and calling it production-ready. The failure modes only show up later, at scale, when nobody is watching carefully.

The specific failure modes that evaluation catches: silent hallucinations (factually wrong but confident-sounding answers that pass casual review), distribution shift (the model's performance degrades on input patterns that were not covered in initial testing), format regression (a prompt change that fixes one issue breaks JSON schema compliance on another branch), and cost explosion (a model update that improves quality but doubles token usage). None of these are visible without instrumentation. All of them are measurable with a structured rubric.

A 2025 finding from Confident AI's LLM evaluation guide is worth noting: teams that implemented continuous evaluation on their LLM pipelines detected quality regressions an average of 11 days before those regressions appeared in user-reported issues. That is eleven days of signal before the first complaint. The value of a rubric and a test set is not just knowing your current quality level, but knowing when it changes.

The Five Evaluation Dimensions

Quick Answer: A complete AI output evaluation rubric covers five dimensions: factual accuracy (are claims correct?), hallucination rate (does the model invent unsupported content?), internal consistency (does the model agree with itself across runs?), format adherence (does the output match the required schema?), and cost-per-output (what does producing this answer actually cost?). Each dimension has a different measurement method and a different acceptable threshold.

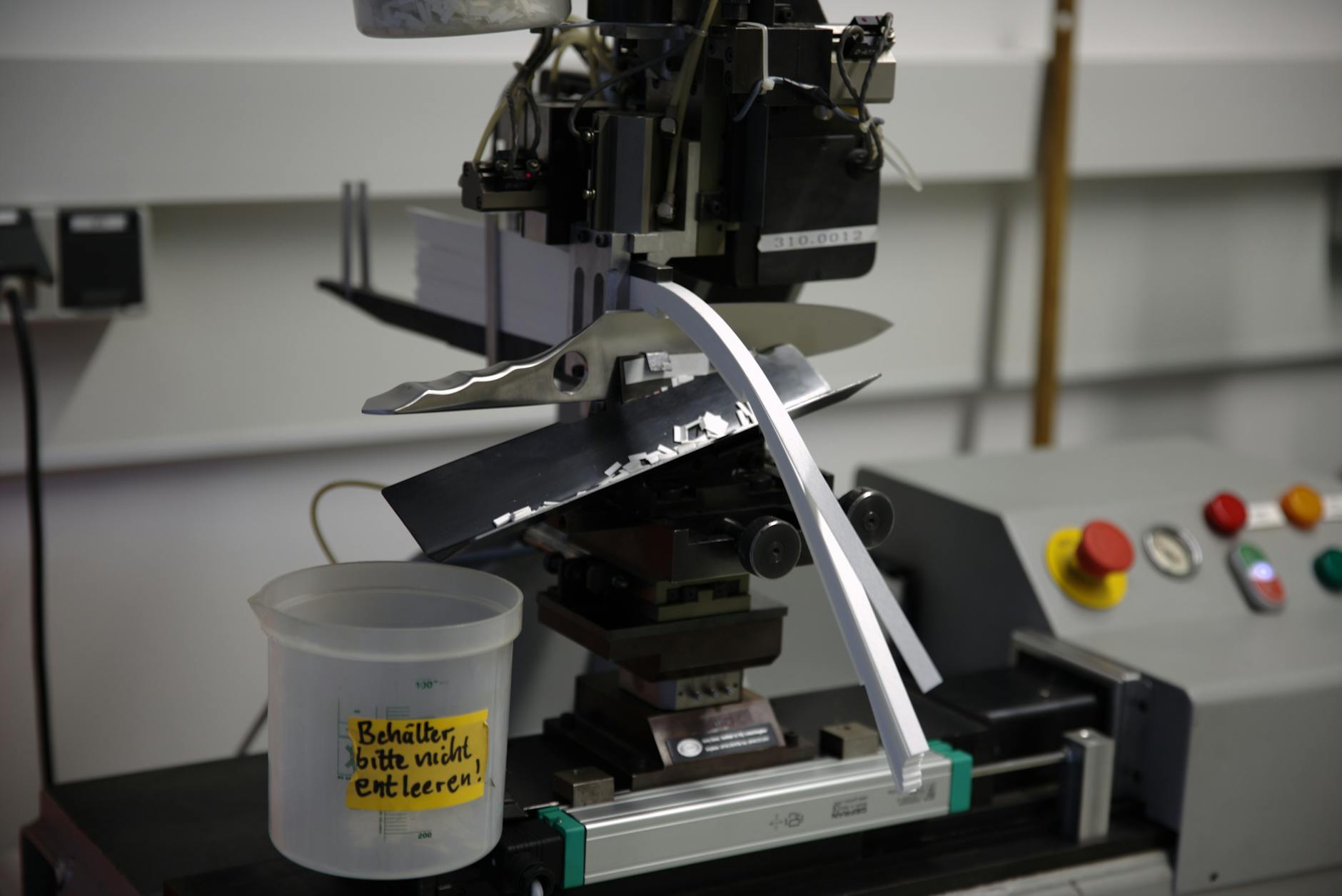

Think of the five dimensions like the quality control checks on a production line. You would not check only one dimension — a car that runs perfectly but has no doors passes the engine test while failing the safety test. AI output is the same: a model that scores 98% on factual accuracy but 60% on format adherence is useless in a downstream system that parses its output. All five dimensions need a threshold and a measurement method before you can call the system production-ready.

| Dimension | What It Measures | Measurement Method |
|---|---|---|
| Factual Accuracy | % of claims that are correct vs ground truth | LLM-as-judge vs verified answer set |
| Hallucination Rate | % of context claims contradicted in output | DeepEval HallucinationMetric |
| Internal Consistency | Variance of answers across multiple runs | Run same prompt 5× at temp 0.7, measure agreement |
| Format Adherence | % of outputs matching required schema | Schema validation (regex or JSON parser) |
| Cost per Output | Token cost + latency per production request | Track input + output tokens, multiply by pricing |
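
Three of these dimensions can be measured with nothing beyond the Python standard library. The following is a minimal sketch, not a reference implementation: the function names, the example schema, and the per-million-token prices are all hypothetical, and exact string matching is only a crude stand-in for judging whether two answers agree.

```python
import json
from collections import Counter


def format_adherence_rate(raw_outputs, required_fields):
    """Fraction of outputs that parse as a JSON object containing every
    required field with the expected type."""
    if not raw_outputs:
        return 0.0
    passed = 0
    for raw in raw_outputs:
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            continue  # not valid JSON at all
        if isinstance(parsed, dict) and all(
            isinstance(parsed.get(name), expected)
            for name, expected in required_fields.items()
        ):
            passed += 1
    return passed / len(raw_outputs)


def consistency_rate(answers):
    """Share of runs that agree with the most common answer, after trimming
    whitespace and lowercasing (a crude stand-in for semantic agreement)."""
    if not answers:
        raise ValueError("need at least one answer")
    normalised = [answer.strip().lower() for answer in answers]
    top_count = Counter(normalised).most_common(1)[0][1]
    return top_count / len(normalised)


def cost_per_output(input_tokens, output_tokens, usd_per_m_input, usd_per_m_output):
    """Token cost in USD for one request, given per-million-token prices."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


# Hypothetical schema, runs, and prices, for illustration only.
schema = {"answer": str, "confidence": float}
outputs = ['{"answer": "Paris", "confidence": 0.9}', '{"answer": "Paris"}', "not json"]
runs = ["Paris", "paris ", "Paris", "Lyon", "Paris"]

adherence = format_adherence_rate(outputs, schema)
agreement = consistency_rate(runs)
cost = cost_per_output(1200, 300, usd_per_m_input=3.0, usd_per_m_output=15.0)
```

In this example only the first output satisfies the schema, four of the five runs agree, and the request costs a little under one cent. Latency would need to be tracked separately from your request logs.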

Detecting Hallucinations: The Practical Approach

Quick Answer: The most reliable 2026 hallucination detection method is LLM-as-a-judge using DeepEval's HallucinationMetric: send the model's output alongside the original context to a second model and ask it to count contradictions. A hallucination score above 0.10 (10% of context claims contradicted) is a red flag for most production use cases.

Think of hallucination detection like a plagiarism checker running in reverse. Instead of checking whether the model's output matches a source it might have copied, you are checking whether the model's output is consistent with the source context you explicitly provided. A hallucination is not always a fabricated fact from thin air — it can be a real fact that is incorrectly applied, a correct pattern applied to the wrong entity, or a plausible-sounding extrapolation beyond what the context actually supports.

According to DeepEval's hallucination metric documentation, the HallucinationMetric works by sending the model's actual output alongside the context supplied with the test case to a judge LLM (typically GPT-4o or Claude 3.5 Sonnet) with a structured prompt that asks it to identify statements in the output that contradict that context. The score is calculated as contradicted_claims / total_context_claims. A score of 0.0 means no contradictions; a score of 1.0 means every context claim was contradicted. Thresholds vary by use case: under 0.05 for regulated domains, under 0.10 for general knowledge use cases, under 0.20 for creative or generative tasks where some embellishment is expected.

For teams without an automated evaluation stack, a manual detection workflow is still viable at low volume: read the model's output, highlight every factual claim, find each claim in the provided context, and mark it as supported, unsupported, or contradicted. A spreadsheet with three columns (claim, status, source line) produces a comparable hallucination rate measurement and takes 5–10 minutes per output. Do this for 20–30 representative outputs before deploying to production and you will have a defensible baseline.
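
The spreadsheet workflow reduces to a small calculation. The sketch below assumes each row is a (claim, status, source line) tuple exported from that spreadsheet, and it divides contradicted claims by all labelled output claims, which approximates rather than reproduces DeepEval's context-based score. The threshold values come from the text above; the use-case keys and function names are hypothetical.

```python
from collections import Counter

# Thresholds as stated in the text; the dictionary keys are illustrative labels.
THRESHOLDS = {"regulated": 0.05, "general": 0.10, "creative": 0.20}
VALID_STATUSES = {"supported", "unsupported", "contradicted"}


def hallucination_rate(rows):
    """rows: iterable of (claim, status, source_line) tuples.
    Returns contradicted claims divided by all labelled claims."""
    statuses = Counter(status for _claim, status, _source in rows)
    unknown = set(statuses) - VALID_STATUSES
    if unknown:
        raise ValueError(f"unexpected status values: {sorted(unknown)}")
    total = sum(statuses.values())
    return statuses["contradicted"] / total if total else 0.0


def within_threshold(rate, use_case):
    """True when the rate is strictly under the threshold for the use case."""
    return rate < THRESHOLDS[use_case]


# Ten hand-labelled claims from one output (made-up data).
rows = (
    [(f"claim {i}", "supported", f"context line {i}") for i in range(8)]
    + [("claim 8", "unsupported", "")]
    + [("claim 9", "contradicted", "context line 3")]
)
rate = hallucination_rate(rows)
```

With one contradicted claim out of ten, the rate is 0.10, which fails the "under 0.10" bar for general knowledge use cases but passes the creative one. Unsupported claims are counted in the denominator only; you may want to track them as a separate rate.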

Building Your Golden Test Set

Quick Answer: A golden test set is 50–200 representative inputs with verified correct outputs. It is your regression benchmark: the fixed ground truth against which every prompt change, model update, or configuration change is measured. Build it from real user queries, not from questions you invented. Verify the expected outputs with domain experts, not with model outputs. Update it quarterly as your use case evolves.

Think of the golden test set like a reference exam. A well-constructed exam tests whether a student understands the material — not whether they can recall the specific questions from the study guide. A golden test set that only contains questions similar to what you tested during development will give you an artificially high score and miss every edge case that real users actually ask. Build it from real signals, not from idealized inputs.

The four-step golden test set methodology: (1) Source from reality — collect 200 representative inputs from real user logs, support tickets, or known edge cases. If you are pre-launch, generate them from the use case description, not from the model. (2) Verify answers against ground truth — for factual tasks, have domain experts or authoritative sources confirm each answer. Never use model output as the ground truth for model evaluation — that is circular validation. (3) Categorise by difficulty and type — tag each test case as easy/medium/hard and by category (factual, reasoning, format extraction, edge case). Track scores per category to identify where the model specifically struggles. (4) Size to your confidence need — 50 examples gives rough signal; 100 examples gives reliable category-level scores; 200+ examples gives statistically meaningful granular breakdowns. Add more examples whenever you identify a new failure pattern in production.
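
One way to hold such a test set in code is a small record type plus a per-category score. This is a sketch under stated assumptions: the `GoldenCase` fields and the `pass_rate_by_category` helper are hypothetical names, and how you decide that a single case passed (exact match, human review, judge model) is left outside the sketch.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenCase:
    case_id: str
    user_input: str   # taken from real logs or tickets where possible
    expected: str     # verified by a domain expert, never by a model
    difficulty: str   # "easy", "medium", or "hard"
    category: str     # e.g. "factual", "reasoning", "format_extraction", "edge_case"


def pass_rate_by_category(cases, results):
    """results maps case_id -> bool. A case with no recorded result is
    treated as a failure so gaps cannot inflate the score."""
    totals = defaultdict(int)
    passed = defaultdict(int)
    for case in cases:
        totals[case.category] += 1
        if results.get(case.case_id, False):
            passed[case.category] += 1
    return {category: passed[category] / totals[category] for category in totals}


# Made-up cases and results, for illustration only.
cases = [
    GoldenCase("c1", "What is the refund window?", "30 days", "easy", "factual"),
    GoldenCase("c2", "Refund window for EU orders?", "14 days", "hard", "factual"),
    GoldenCase("c3", "Which plan is cheaper over a year?", "Annual", "medium", "reasoning"),
    GoldenCase("c4", "refnd???", "Ask for clarification", "hard", "edge_case"),
]
results = {"c1": True, "c2": False, "c3": True}  # c4 was never run

rates = pass_rate_by_category(cases, results)
```

Tracking the same breakdown by `difficulty` is a one-line change, and storing the cases in a versioned file keeps the benchmark fixed between runs.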

The Copyable Rubric

Quick Answer: Copy this rubric table into your evaluation spreadsheet. Score each dimension 1–5 for manual evaluation, or set up automated threshold checks for production monitoring. The composite score (average of all five dimensions) should be above 4.0 before any AI feature is approved for production deployment.

| Dimension | Score 1 (Fail) | Score 3 (Acceptable) | Score 5 (Pass) |
|---|---|---|---|
| Factual Accuracy | Multiple wrong claims; misleads user | Mostly correct; one minor inaccuracy | All claims verifiably correct against ground truth |
| Hallucination | >20% claims contradict context | 5–10% contradicted claims | <5% contradicted claims; all supported |
| Consistency | Contradicts itself across runs on same input | Same core answer 3/5 runs | Same core answer 5/5 runs at temperature 0.7 |
| Format Adherence | Output fails schema parsing entirely | Parses but missing optional fields | Valid schema, all required fields, correct types |
| Cost / Latency | >3× budget per call; p99 >10s | Within budget; p99 3–5s | At or under budget; p99 <2s |

A score below 4.0 on any individual dimension should block deployment, even when the composite average clears the bar. A score below 3.5 on factual accuracy or hallucination should also trigger a mandatory prompt and architecture review before proceeding. The rubric is most valuable as a relative tool: comparing "version A of the prompt" against "version B of the prompt" on the same golden test set tells you exactly which dimensions changed and by how much, which is the basis for all prompt iteration decisions.
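
The gating rules can be encoded as a short check. The sketch below is one reading of them, with assumptions stated plainly: each dimension score is an average over the golden test set on the 1–5 scale, deployment is blocked when the composite average or any single dimension falls below 4.0, and a review is triggered when factual accuracy or hallucination falls below 3.5. The dimension keys and function names are hypothetical.

```python
from statistics import mean

DIMENSIONS = (
    "factual_accuracy",
    "hallucination",
    "consistency",
    "format_adherence",
    "cost_latency",
)


def gate(scores):
    """scores maps each dimension to its average rubric score (1-5)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    composite = mean(scores[d] for d in DIMENSIONS)
    blocked = composite < 4.0 or any(scores[d] < 4.0 for d in DIMENSIONS)
    needs_review = any(scores[d] < 3.5 for d in ("factual_accuracy", "hallucination"))
    return {"composite": composite, "blocked": blocked, "needs_review": needs_review}


def compare(version_a, version_b):
    """Per-dimension change from version A to version B on the same test set."""
    return {d: round(version_b[d] - version_a[d], 2) for d in DIMENSIONS}


# Made-up scores for two prompt versions.
prompt_a = {"factual_accuracy": 4.6, "hallucination": 4.2, "consistency": 4.0,
            "format_adherence": 4.8, "cost_latency": 4.4}
prompt_b = {"factual_accuracy": 4.7, "hallucination": 3.4, "consistency": 4.0,
            "format_adherence": 4.9, "cost_latency": 4.4}

decision_a = gate(prompt_a)
decision_b = gate(prompt_b)
delta = compare(prompt_a, prompt_b)
```

Here version B improves accuracy and format slightly but drops 0.8 points on hallucination, so it is blocked and flagged for review even though its composite average is still above 4.0.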

LLM-as-a-Judge: Scaling Evaluation

Quick Answer: LLM-as-a-judge uses a second AI model to score your primary model's outputs at scale — enabling automated evaluation of thousands of outputs without human review of each one. When implemented with explicit rubrics (G-Eval style) rather than open-ended scoring, judge models achieve 85–92% correlation with human evaluators on structured tasks.

Think of LLM-as-a-judge like a senior reviewer who evaluates junior work using a structured scorecard. Without the scorecard, their reviews are inconsistent — they focus on different things each time, influenced by recency and mood. With a clear rubric, their scores become reproducible and auditable. The same applies to judge LLMs: a judge that scores "quality" on a 1–5 scale with no further instruction produces inconsistent scores. A judge that scores five specific dimensions against explicit criteria produces results that correlate closely with what human experts would score.

The G-Eval (Generative Evaluation) method, documented in DeepEval's G-Eval documentation, works by sending the judge model a structured prompt containing: (1) the evaluation criteria as an explicit rubric, (2) the input and the model's actual output, (3) a request for a score on each rubric dimension with a brief justification. The DeepEval Python SDK makes this callable in a few lines of code, and it integrates with both OpenAI and Anthropic judge models. According to Confident AI's benchmark data, G-Eval achieves 85–92% correlation with human expert evaluators on structured document tasks, compared to 60–70% for open-ended judge prompts without explicit rubrics.
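
The three-part prompt structure described above can be sketched without any SDK. This is a simplified rubric prompt in the spirit of that description, not DeepEval's G-Eval implementation. The rubric wording, the JSON reply format, and the `call_model` parameter (any function that takes a prompt string and returns the judge's text reply) are assumptions made for illustration.

```python
import json

# Hypothetical rubric: criterion name -> what the judge should check.
RUBRIC = {
    "factual_accuracy": "Every claim is correct against the reference answer.",
    "format_adherence": "The output follows the requested structure.",
}


def build_judge_prompt(rubric, model_input, model_output):
    """Assemble rubric, input/output pair, and scoring request into one prompt."""
    criteria = "\n".join(f"- {name}: {text}" for name, text in rubric.items())
    return (
        "You are grading an AI system's output against an explicit rubric.\n"
        f"Criteria (score each from 1 to 5):\n{criteria}\n\n"
        f"Input:\n{model_input}\n\n"
        f"Output to grade:\n{model_output}\n\n"
        "Reply with JSON only, one key per criterion, each mapping to "
        '{"score": <integer 1-5>, "justification": "<one sentence>"}.'
    )


def parse_judge_reply(reply, rubric):
    """Extract one integer score per criterion; reject anything malformed."""
    data = json.loads(reply)
    scores = {}
    for name in rubric:
        entry = data.get(name) if isinstance(data, dict) else None
        score = entry.get("score") if isinstance(entry, dict) else None
        if type(score) is not int or not 1 <= score <= 5:
            raise ValueError(f"missing or invalid score for {name!r}")
        scores[name] = score
    return scores


def judge(call_model, rubric, model_input, model_output):
    prompt = build_judge_prompt(rubric, model_input, model_output)
    return parse_judge_reply(call_model(prompt), rubric)


def stub_judge(prompt):
    """Stand-in for a real judge model call, used only to exercise the code."""
    return json.dumps({name: {"score": 4, "justification": "stub"} for name in RUBRIC})


scores = judge(stub_judge, RUBRIC, "Capital of France?", '{"answer": "Paris"}')
```

Rejecting malformed replies instead of guessing a score keeps judge failures visible in your metrics. In practice you would replace `stub_judge` with a call to your chosen judge model and store the justifications alongside the scores for auditing.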

A practical cost note: LLM-as-a-judge adds API costs for the judge calls — typically 20–40% of your primary model's inference cost per evaluation. For production monitoring, evaluate a random 5–10% sample of live traffic rather than every request. For regression testing on your golden set, evaluate all test cases on every significant change. The cost is small relative to the cost of undetected regressions reaching users at scale.
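
To see what the sampling advice means for a budget, the arithmetic can be written out. The traffic volume and per-request cost below are invented for illustration; the sample rates and judge cost ratios are the ranges quoted above.

```python
def monthly_judge_cost(monthly_requests, primary_cost_per_request,
                       sample_rate, judge_cost_ratio):
    """Estimated monthly spend on judge calls, in the same currency as
    primary_cost_per_request."""
    sampled_requests = monthly_requests * sample_rate
    return sampled_requests * primary_cost_per_request * judge_cost_ratio


# Hypothetical workload: one million requests a month at $0.01 each.
primary_spend = 1_000_000 * 0.01
low = monthly_judge_cost(1_000_000, 0.01, sample_rate=0.05, judge_cost_ratio=0.20)
high = monthly_judge_cost(1_000_000, 0.01, sample_rate=0.10, judge_cost_ratio=0.40)
```

Under these assumptions the judge adds roughly $100 to $400 a month on top of $10,000 of primary inference, or between 1% and 4% of the primary spend.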

Continuous Monitoring in Production

Quick Answer: Evaluation is not just a launch gate — it is an ongoing monitoring loop. Run automated evaluation on 5–10% of live traffic. Set alerts for any 7-day rolling degradation of more than 3 percentage points on key metrics. Log all inputs and outputs with metadata. The patterns in production logs are where your next golden test set cases come from.

Think of continuous monitoring like the dashboard instrumentation in a car. You do not check the oil once before a long journey and assume everything will be fine for the whole trip. You have gauges that continuously track oil pressure, temperature, and fuel — and you act when they deviate. AI systems need the same continuous instrumentation, because the things that change after deployment (user query patterns, data drift, model provider updates) are exactly the things your launch-time evaluation did not test.

The three-layer monitoring stack that works at any scale: (1) Request logging — log every input, output, latency, and token count with a request ID. This is the raw material for everything else. (2) Sampled automated evaluation — run your G-Eval rubric on a random 5–10% of live requests. Compute rolling 7-day averages per dimension and alert if any dimension drops more than 3 percentage points from its baseline. (3) Feedback loop — surface easy thumbs-up/thumbs-down feedback to users; log negative feedback with the full request context. Every negative feedback case is a candidate for your golden test set. For tools: LangSmith's monitoring integrates natively with LangChain pipelines. Datadog LLM Observability handles multi-model production monitoring. Confident AI provides managed evaluation with automated alerting for teams that do not want to self-host the evaluation infrastructure.
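
The sampling and alerting layers can be sketched in a few functions. This is a minimal illustration, assuming scores are stored as one percentage per day per dimension and that each request carries a string ID. Hashing the ID to pick the sample is a design choice of this sketch, not something the tools named above prescribe, and all function names are hypothetical.

```python
import hashlib
from statistics import mean


def is_sampled(request_id, sample_rate):
    """Deterministically select roughly sample_rate of requests by hashing
    the request ID, so the same request is always in or out of the sample."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1]
    return bucket < sample_rate


def rolling_average(daily_scores, window=7):
    """Mean of the most recent `window` daily scores (percentages, 0-100)."""
    if len(daily_scores) < window:
        raise ValueError(f"need at least {window} daily scores")
    return mean(daily_scores[-window:])


def should_alert(baseline, daily_scores, max_drop_points=3.0, window=7):
    """True when the rolling average sits more than max_drop_points
    percentage points below the baseline."""
    return baseline - rolling_average(daily_scores, window) > max_drop_points


# Made-up daily format-adherence scores against a baseline of 92%.
stable_week = [92, 91, 90, 89, 88, 87, 86]    # average 89.0, drop of exactly 3.0
degraded_week = [90, 89, 88, 88, 87, 87, 86]  # average about 87.9

alert_stable = should_alert(92.0, stable_week)
alert_degraded = should_alert(92.0, degraded_week)
```

The first week does not alert because the drop is exactly 3 points, not more; the second does. Deterministic sampling also makes it possible to re-evaluate the same requests later with a different judge or rubric.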

One final insight that took most teams a year to learn: the queries where your model struggles in production are almost never the queries you tested during development. Users phrase things differently. They send edge cases you did not anticipate. They interact with the system in ways your prompt engineering did not account for. Continuous monitoring converts these real-world failures into concrete test cases — closing the feedback loop between what users actually do and what your evaluation set covers. For a structured approach to connecting this evaluation loop into a full AI pipeline, the AI for Debugging: Step-by-Step Workflow article covers how to instrument AI systems for systematic failure analysis.

Frequently Asked Questions

Why should I evaluate AI output if the model seems accurate?

"Seems accurate" is not a measurement. LLMs produce confident-sounding wrong answers on edge cases that never appear during casual testing. A structured rubric against a representative golden test set reveals the actual error rate — often much higher than intuition suggests.

What is a golden test set for AI evaluation?

A curated collection of 50–200 representative inputs with verified correct outputs. It is your fixed regression benchmark. Build it from real user queries, verified by domain experts — never use model output as ground truth for model evaluation.

What is LLM-as-a-judge and how reliable is it?

LLM-as-a-judge uses a second AI to evaluate your primary model's outputs. With G-Eval rubrics (explicit multi-dimension scoring criteria), judge models achieve 85–92% correlation with human evaluators on structured tasks — sufficient for automated regression detection.

What hallucination rate is acceptable in production?

Under 5% for customer-facing factual Q&A. Under 2% for regulated domains with mandatory human review above any threshold. Under 15% for low-stakes content generation. Measure first, then set a threshold appropriate to the consequence profile of your specific use case.

How often should I re-run my evaluation rubric?

On every meaningful change: new prompt version, model upgrade, RAG index update, or new document corpus. In production, run automated evaluation on a random 5–10% sample of live traffic continuously, and alert on any 7-day rolling degradation above 3 percentage points.

What tools automate AI output evaluation in 2026?

DeepEval (open-source, Python SDK, hallucination + G-Eval metrics), Confident AI (managed platform, model-based scoring), LangSmith (integrated into LangChain pipelines), and Datadog LLM Observability (production monitoring with real-time hallucination flagging).

Can I evaluate AI output without any special tools?

Yes. A spreadsheet with 50 test questions, expected outputs, and a manual five-dimension rubric (score 1–5 per dimension) is sufficient for initial evaluation. Track averages over prompt versions. This low-tech approach catches most regressions before they reach production.

aicourses.com Verdict

After running the full evaluation rubric — golden test set, five-dimension scoring, and LLM-as-a-judge — across more than a dozen AI pipeline deployments in 2025, the consistent finding is this: evaluation reveals failure modes that nobody anticipated, even on systems that "looked great" in testing. The hallucination rate is almost always higher than the team expected. The format compliance rate is almost always lower. And the degradation after a model provider update is almost always undetected without instrumentation. Build the rubric before you build anything else in your AI stack.

For practical implementation today: create your 50-question golden test set from real use-case inputs this week. Score each output manually on the five-dimension rubric and record the baseline. Install DeepEval and run the HallucinationMetric on your existing outputs. You will have a defensible quality baseline within a day of effort, and every future prompt or architecture change will be measured against a real number rather than subjective impression.

The next article in the Applied AI Skills cluster connects evaluation to the broader question of how AI steps fit together in a production system: Building an AI Workflow: How to Chain AI Tools Into a System That Works. The evaluation gate you have just built is the validation step inside every AI workflow that prevents low-quality outputs from propagating to downstream actions. Build the workflow after the evaluation gate — not before it.
